Llama 2 single-handedly jump-started the open-weight AI industry. Literally thousands of AI startups would not have existed without it.

LLMs

-

InfoTok: Efficient Long Video Processing Using Information Theory

How do we process long videos efficiently without losing crucial information? NVIDIA, Stanford University, and the National University of Singapore have an answer! They introduce InfoTok, a breakthrough method inspired by Shannon's information theory. It intelligently allocates…

-

OpenClaw-RL: Reinforcement Learning for Agent Model Weights

OpenClaw meets RL!

OpenClaw Agents adapt through memory files and skills, but the base model weights never actually change.

OpenClaw-RL solves this!

It wraps a self-hosted model as an OpenAI-compatible API, intercepts live conversations from OpenClaw, and trains the policy in the background using RL.

The architecture is fully async, meaning serving, reward scoring, and training all run in parallel. Updated weights are hot-swapped after every batch while the agent keeps responding.

Currently, it has two training modes:

– Binary RL (GRPO): A process reward model scores each turn as good, bad, or neutral. That scalar reward drives policy updates via a PPO-style clipped objective.
– On-Policy Distillation: When concrete corrections come in, like "you should have checked that file first," it uses that feedback as a richer, directional training signal at the token level.

When to use OpenClaw-RL?

To be fair, a lot of agent behavior can already be improved through better memory and skill design. OpenClaw's existing skill ecosystem and community-built self-improvement skills handle a wide range of use cases without touching model weights at all.

If the agent keeps forgetting preferences, that's a memory problem. If it doesn't know how to handle a specific workflow, that's a skill problem. Both are solvable at the prompt and context layer.

Where RL becomes interesting is when the failure pattern lives deeper, in the model's reasoning itself: consistently poor tool selection order, weak multi-step planning, or failing to interpret ambiguous instructions the way a specific user intends.

Research on agentic RL (like ARTIST and Agent-R1) has shown that these behavioral patterns hit a ceiling with prompt-based approaches alone, especially in complex multi-turn tasks where the model needs to recover from tool failures or adapt its strategy mid-execution.

That's the layer OpenClaw-RL targets, and it's a meaningful distinction from what OpenClaw offers.

I have shared the repo in the replies!

— Akshay 🚀 (@akshay_pachaar) April 12, 2026

→ View original post on X — @akshay_pachaar, 2026-04-12 13:35 UTC
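The post only names the ingredients of the Binary RL mode: a good/bad/neutral label per turn, GRPO, and a PPO-style clipped objective. The sketch below shows how those pieces typically fit together in standard GRPO, assuming the labels map to rewards of +1, 0, and -1 and that a "group" is a set of scored responses sampled for the same context. It is not OpenClaw-RL's code: `LABEL_REWARD`, `grpo_loss`, the clip value 0.2, and the per-sequence log-probabilities are illustrative assumptions, and the KL penalty that full GRPO usually adds is omitted.

```python
import math
from statistics import mean, pstdev

# Assumed mapping from process-reward-model labels to scalar rewards.
LABEL_REWARD = {"good": 1.0, "neutral": 0.0, "bad": -1.0}


def group_relative_advantages(rewards, eps=1e-8):
    """GRPO-style advantage: each reward normalized against its group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


def clipped_surrogate(logp_new, logp_old, advantage, clip_eps=0.2):
    """PPO clipped objective for one sample (higher is better)."""
    ratio = math.exp(logp_new - logp_old)  # pi_new / pi_old
    clipped_ratio = min(max(ratio, 1.0 - clip_eps), 1.0 + clip_eps)
    # Take the pessimistic (smaller) of the unclipped and clipped terms.
    return min(ratio * advantage, clipped_ratio * advantage)


def grpo_loss(labels, logp_new, logp_old, clip_eps=0.2):
    """Mean negative clipped objective over one group of scored turns."""
    rewards = [LABEL_REWARD[label] for label in labels]
    advantages = group_relative_advantages(rewards)
    objectives = [
        clipped_surrogate(new, old, adv, clip_eps)
        for new, old, adv in zip(logp_new, logp_old, advantages)
    ]
    return -sum(objectives) / len(objectives)


# One group of four turns; in practice log-probs come from the policy model.
labels = ["good", "bad", "neutral", "good"]
logp_old = [-1.0, -2.0, -0.5, -1.5]
logp_new = [-0.9, -2.1, -0.5, -1.4]
print(grpo_loss(labels, logp_new, logp_old))
```

Normalizing within the group means a turn is rewarded for scoring better than its siblings rather than for its absolute label, which is why GRPO can skip a separate value network.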

-

Hermes Agent: Self-Improving AI with Cross-Session Memory

The self-improving AI agent from Nous Research! Hermes Agent is a self-improving AI agent that builds skills from your work, improves them over time, and remembers across sessions. Most AI agents reset every conversation. You teach them your codebase structure; they forget. You…

-

Analyzing Claude’s Deep and Distinctive Personality Traits

Read carefully. I think I have pinned down Claude's deep and very distinctive personality.

-

Hassabis vs LeCun: Major AI Researchers Clash on LLM Future

The CEO of Google DeepMind just went on record saying he disagrees with one of the most respected AI researchers in the world.

Demis Hassabis, the man behind AlphaFold, AlphaGo, and Google's entire AI operation, publicly pushed back against Yann LeCun's claim that large language models are a dead end for artificial intelligence.

LeCun, who left Meta earlier this year to start his own AI lab, has been saying for years that LLMs cannot reason, cannot plan, and will never get us to human-level intelligence.

Hassabis disagrees, and he said so directly. His position is that scaling laws are still working, foundation models are still getting more capable, and whatever AGI ends up looking like, LLMs will be a central part of it, not something that gets replaced.

He does say there is roughly a 50/50 chance that one or two additional breakthroughs will be needed beyond scaling alone: things like better memory, long-term planning, and world models.

But the core disagreement with LeCun is clear: Hassabis believes the current architecture is sound and the current path leads somewhere real.

Two Nobel-recognized researchers, two founding figures of modern AI, now publicly on opposite sides of the most important technical question in the industry.

— Milk Road AI (@MilkRoadAI) April 12, 2026

→ View original post on X — @ceobillionaire, 2026-04-12 09:02 UTC

-

MiniMax-M2.7: New Model Now Available on Hugging Face

huggingface.co/MiniMaxAI/Min…

→ View original post on X — @kimmonismus, 2026-04-12 08:48 UTC

-

MiniMax M2.7 Open Source Model Achieves Strong Performance in Agent Workflows

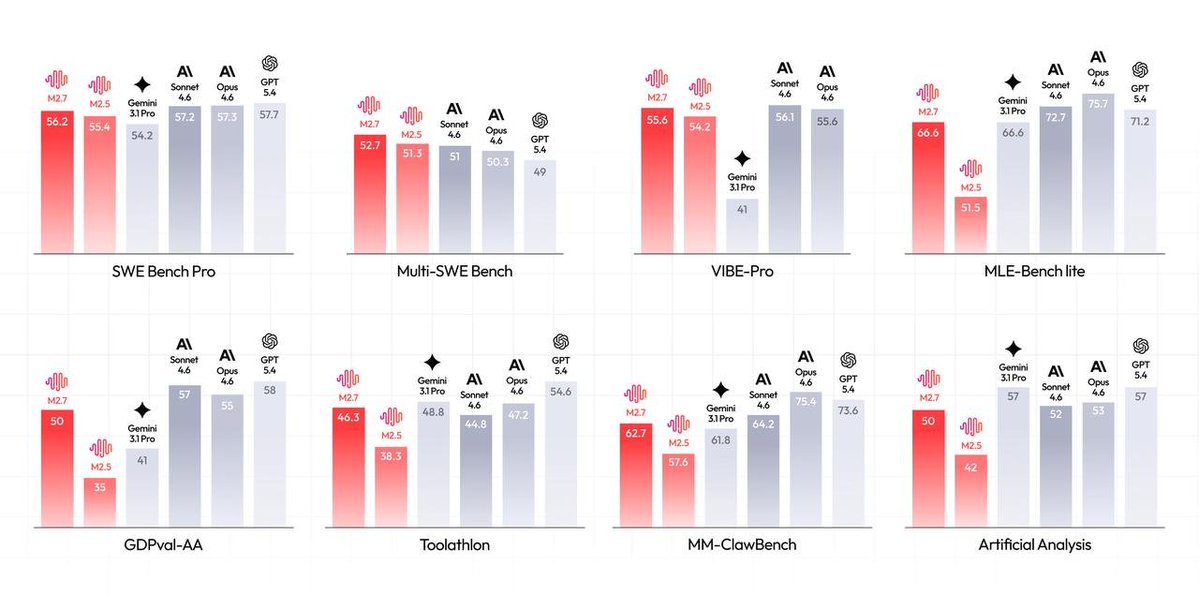

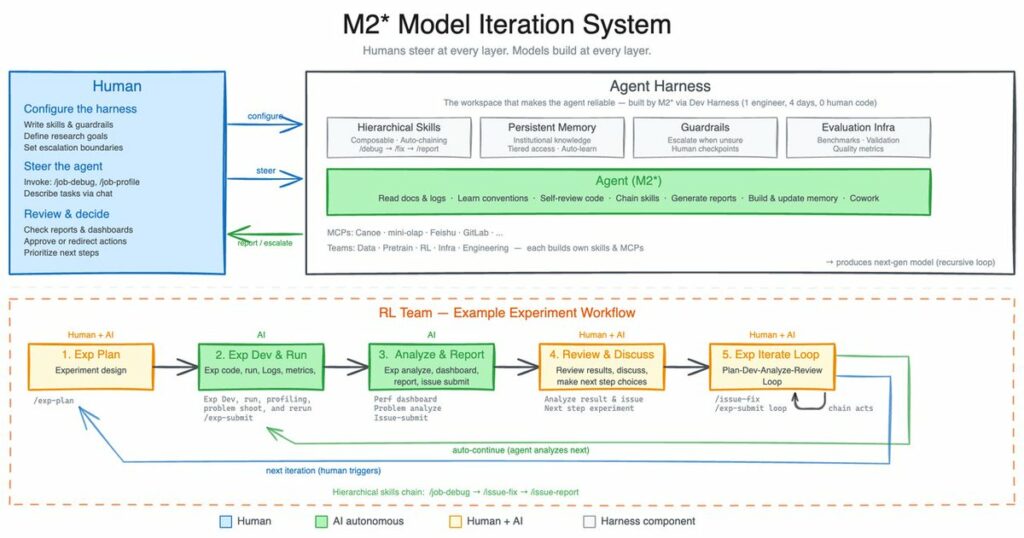

MiniMax has open-sourced M2.7, a model designed "for agent-based workflows, complex reasoning, and real-world engineering tasks." It introduces self-evolution capabilities, in which the model improves itself through iterative experimentation, achieving 30% performance gains and a 66.6% medal rate in ML competitions. Honestly, this is more impactful than expected. On the performance side, M2.7 delivers strong software engineering results (56.22% on SWE-Pro) and near-top-tier benchmark scores, and it excels in multi-agent collaboration, tool use, and productivity tasks like document editing. With high Elo scores, fast incident recovery (under 3 minutes), and 97% skill compliance, it positions itself as one of the most capable open-source AI systems right now.

→ View original post on X — @kimmonismus, 2026-04-12 08:48 UTC

-

Agent Skills: AI Coding Agents with Engineering Best Practices

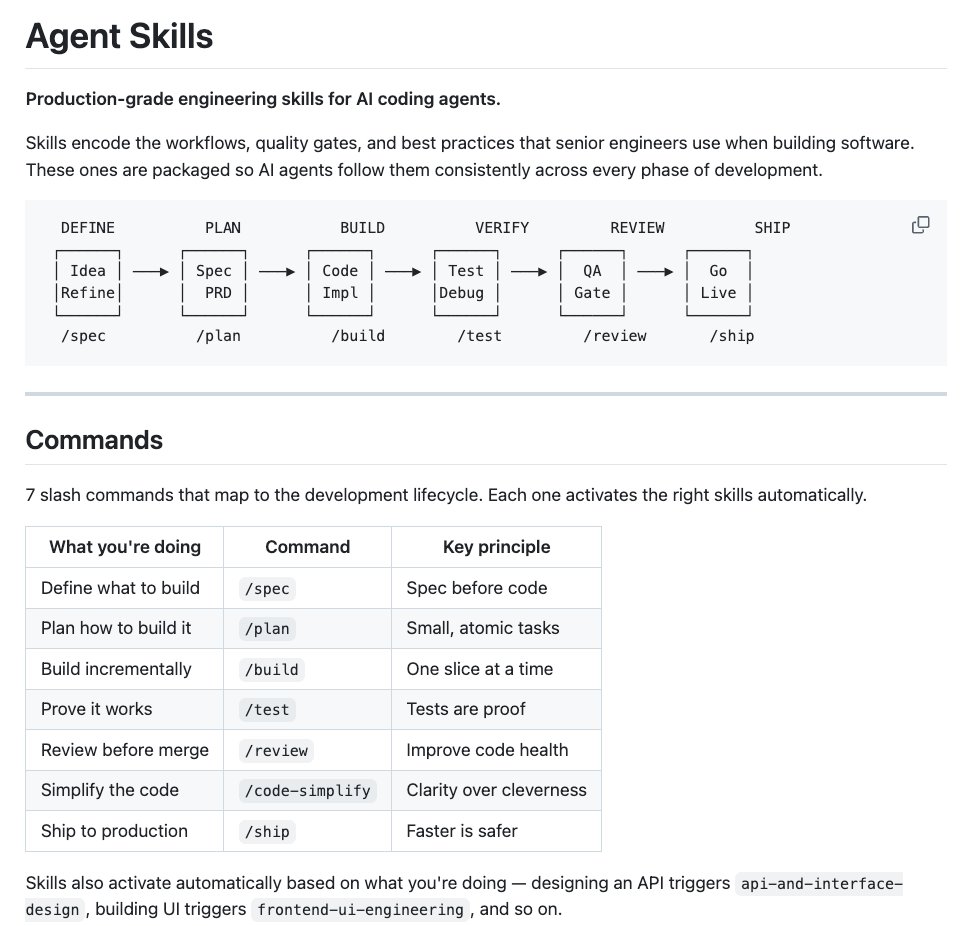

Charly Wargnier (@DataChaz)

🚨 ICYMI: @addyosmani from Google just dropped his new Agent Skills, and it's incredible.

It brings 19 engineering skills + 7 commands to AI coding agents, all inspired by Google best practices 🤯

AI coding agents are powerful, but left alone, they take shortcuts. They skip specs, tests, and security reviews, optimizing for "done" over "correct." Addy built this to fix that.

Each skill encodes the workflows and quality gates that senior engineers actually use: spec before code, test before merge, measure before optimize.

The full lifecycle is covered:

→ Define – refine ideas, write specs before a single line of code
→ Plan – decompose into small, verifiable tasks
→ Build – incremental implementation, context engineering, clean API design
→ Verify – TDD, browser testing with DevTools, systematic debugging
→ Review – code quality, security hardening, performance optimization
→ Ship – git workflow, CI/CD, ADRs, pre-launch checklists

It features 7 slash commands (/spec, /plan, /build, /test, /review, /code-simplify, /ship) that map to this lifecycle.

It works with:

✦ Claude Code
✦ Cursor
✦ Antigravity
✦ … and any agent accepting Markdown.

It bakes Google-tier engineering culture (Shift Left, Chesterton's Fence, Hyrum's Law) directly into your agent's step-by-step workflow!

`npx skills add addyosmani/agent-skills`

Free and open-source. Repo link in 🧵↓

— https://nitter.net/DataChaz/status/2043246635996807300#m
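To make "spec before code, test before merge" concrete, here is a toy model of the lifecycle as ordered quality gates. It is a hypothetical illustration, not code from addyosmani/agent-skills (the skills themselves are Markdown instructions for the agent); the `Workflow` class is invented for this sketch, and mapping `/code-simplify` to the Review phase is an assumption.

```python
from dataclasses import dataclass, field

# Phase order follows the post; the command-to-phase mapping is an assumption.
PHASES = ["define", "plan", "build", "verify", "review", "ship"]

COMMAND_PHASE = {
    "/spec": "define",
    "/plan": "plan",
    "/build": "build",
    "/test": "verify",
    "/review": "review",
    "/code-simplify": "review",  # assumption: treated as part of review
    "/ship": "ship",
}


@dataclass
class Workflow:
    """Hypothetical gatekeeper: a phase may start only after all earlier ones."""

    completed: list = field(default_factory=list)

    def run(self, command: str) -> str:
        phase = COMMAND_PHASE[command]
        earlier = PHASES[: PHASES.index(phase)]
        missing = [p for p in earlier if p not in self.completed]
        if missing:
            raise RuntimeError(f"{command} blocked: finish '{missing[0]}' first")
        if phase not in self.completed:
            self.completed.append(phase)
        return phase


wf = Workflow()
try:
    wf.run("/build")  # no spec or plan yet, so the gate refuses
except RuntimeError as err:
    print(err)
for cmd in ["/spec", "/plan", "/build", "/test", "/review", "/code-simplify", "/ship"]:
    wf.run(cmd)
print(wf.completed)
```

The point of the gate is the "optimizing for done over correct" failure mode the post describes: an agent cannot jump straight to building or shipping without the earlier phases on record.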

-

Claude AI Performance Decline: Reliability Issues and User Frustration

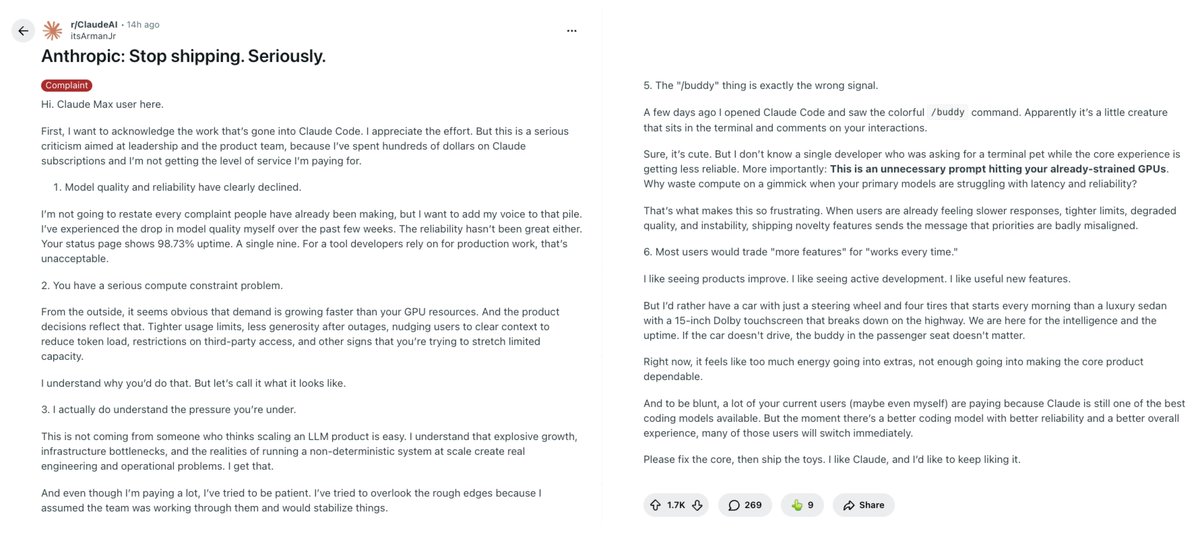

This guy just explained why @claudeai feels worse despite more updates:

> Core reliability dropped to 98.73% uptime, hurting devs who need stability
> Strained compute is wasted on fluff features like the /buddy terminal pet
> Severe GPU constraints lead to tight usage limits and forced context clearing
> Many are cancelling Pro tiers to force Anthropic to listen
> Even enterprise users report major regressions, confirming the OP's exact issues
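For scale, an uptime percentage converts directly into hours of downtime. The helper below assumes a 30-day window (the post does not say what period the 98.73% figure covers) and compares it with the common "three nines" (99.9%) reference point; `downtime_hours` is a name chosen for this sketch.

```python
def downtime_hours(uptime: float, days: float = 30.0) -> float:
    """Hours of downtime implied by an uptime fraction over `days` days."""
    if not 0.0 <= uptime <= 1.0:
        raise ValueError("uptime must be a fraction between 0 and 1")
    return (1.0 - uptime) * days * 24.0


reported = downtime_hours(0.9873)    # figure from the post, 30-day window assumed
three_nines = downtime_hours(0.999)  # common 99.9% reference point
print(f"98.73%: {reported:.2f} h, 99.9%: {three_nines * 60:.1f} min")
```

Under that assumption, 98.73% works out to roughly 9.1 hours of downtime a month, versus about 43 minutes at 99.9%.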