Where to look for #GenerativeAI risks by Beth Stackpole @MITSloan Learn more: bit.ly/4uybBsA #LLM #GenAI #ArtificialIntelligence #MachineLearning

→ View original post on X — @ronald_vanloon, 2026-04-12 07:48 UTC

Global AI News Aggregator

I frequently use Claude Code and I love @openclaw, but I would say Hermes Agent from @NousResearch is the best open-source agent I’ve ever used, especially given that it comes from an independent startup rather than a major LLM giant.

→ View original post on X — @scobleizer, 2026-04-12 07:45 UTC

One person running this full stack is ambitious. Curious how you pick between options like LangGraph, AutoGen, and CrewAI for a given use case, George 🙂

This blog made the rounds for a reason. Memory isn't optional!

Good solution. Btw, once you’ve identified the high-signal trajectories, you can also pair them with counterfactual continuations (what the agent should have done at the point of failure) to construct preference pairs for DPO. So the signals don't just act as a debugging tool
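
As a rough illustration of the idea in this post, the sketch below turns one failed agent trajectory plus a counterfactual continuation into a single preference pair. The `Trajectory` record, the `build_preference_pair` helper, and the example task are all hypothetical and not taken from the post; the `prompt` / `chosen` / `rejected` layout is assumed here because it is a common convention for DPO training data, not something the post specifies.

```python
from dataclasses import dataclass


@dataclass
class Trajectory:
    """Hypothetical record of one agent run (not from the original post)."""
    task: str
    steps: list[str]
    failure_index: int  # index of the first step judged to be wrong


def build_preference_pair(traj: Trajectory, counterfactual: str) -> dict[str, str]:
    """Pair the failed step with what the agent should have done instead."""
    if not 0 <= traj.failure_index < len(traj.steps):
        raise ValueError("failure_index must point at an existing step")
    # Shared context: the task plus every step taken before the failure.
    context = "\n".join([traj.task, *traj.steps[:traj.failure_index]])
    return {
        "prompt": context,
        "chosen": counterfactual,                      # preferred continuation
        "rejected": traj.steps[traj.failure_index],    # what the agent actually did
    }


traj = Trajectory(
    task="Find the cheapest flight from A to B",
    steps=["search_flights(A, B)", "book_flight(first_result)"],
    failure_index=1,
)
pair = build_preference_pair(traj, "sort_results(by='price')")
```

Both completions share the same prefix, so the pair isolates the decision at the point of failure rather than comparing whole runs.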

The Tesla community meets in audio spaces here almost every night and many have new friends in Japan, they report. https://t.co/47OwvL3pEa

— Robert Scoble (@Scobleizer) April 12, 2026

Dustin (@r0ck3t23):

The internet was supposed to connect the world. It connected the infrastructure. The people stayed divided. For thirty years, a Japanese user and an American user existed on the same network and couldn’t understand a single word the other one typed. The information was accessible. The conversation was not. Language was the last wall. And nobody was building a door. X just removed it.

Calacanis: “This auto-translate feature has done more for understanding across borders than anything I’ve ever seen.”

Grok is integrated directly into the platform. Every post, every reply, every thread. Translated in real time, both directions, automatically. A user in Tokyo writes in Japanese. You read it in English. You reply. They read your response in Japanese. Neither of you switched a setting. Neither of you copied text into a translator. The friction simply doesn’t exist anymore.

Calacanis: “People who don’t speak the same language engaging on X in a very nuanced, fun, interesting way. And that, as a truth mechanism, is just absolutely extraordinary.”

This has never existed before. Not at this speed. Not at this scale. Not with this level of nuance preserved. Previous translation tools gave you the words. They butchered the tone, the context, the cultural weight behind them. An LLM doesn’t just translate language. It translates intent. Sarcasm. Subtext. The meaning underneath the words.

That changes what’s possible on the platform. Americans, Japanese, Israeli, French, Russian, and Middle Eastern users debating, arguing, laughing, and exchanging culture directly. No journalist filtering the message. No editor deciding what’s relevant. No network choosing which perspectives get airtime. Peer to peer. Unmediated. Unfiltered.

Calacanis: “Journalists are not even taking the time to translate and cover what’s going on in those areas, and this is happening automatically in real time.”

For decades, your understanding of another country was shaped entirely by whichever outlet decided to cover it. If they didn’t translate it, you never saw it. If they did, you saw their version of it. That filter is gone. The raw, unedited perspective of every culture on Earth is now accessible to every other culture on Earth. Instantly. Without permission. Without a middleman.

That is what a real town square looks like. Not a platform where people from the same country talk to each other in the same language about the same news cycle. A platform where the entire species can hear each other for the first time. X didn’t just build a social network. It built the first global conversation where language is no longer the barrier to entry. The walls between cultures were never ideological. They were linguistic. The wall is down. And 8 billion people just entered the same room.

— https://nitter.net/r0ck3t23/status/2043192741761306972#m

→ View original post on X — @scobleizer, 2026-04-12 05:44 UTC

GPT, Generative AI, and LLMs! @AverConferences #BigData #Analytics #DataScience #AI #MachineLearning #NLProc #IoT #IIoT #Python #RStats #TensorFlow #JavaScript #CloudComputing #Serverless #DataScientist #Linux #Programming #Coding #100DaysOfCode geni.us/Aver-Confer

→ View original post on X — @gp_pulipaka, 2026-04-12 04:57 UTC

GPT, Generative AI and LLM! @starconfs #BigData #Analytics #DataScience #AI #MachineLearning #IoT #IIoT #PyTorch #Python #RStats #TensorFlow #ReactJS #GoLang #CloudComputing #Serverless #DataScientist #Linux #Programming #Coding #100DaysofCode cstar.global

→ View original post on X — @gp_pulipaka, 2026-04-12 04:57 UTC

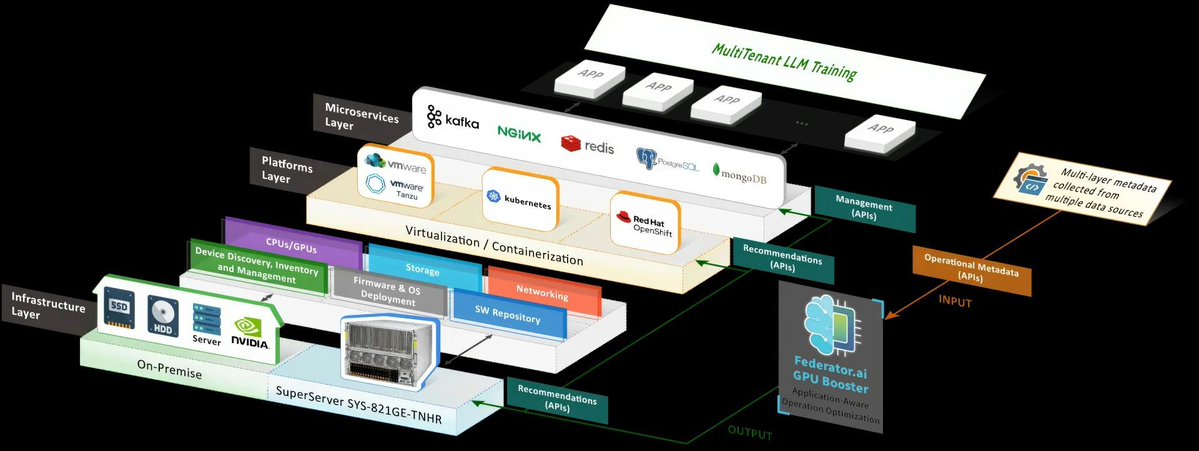

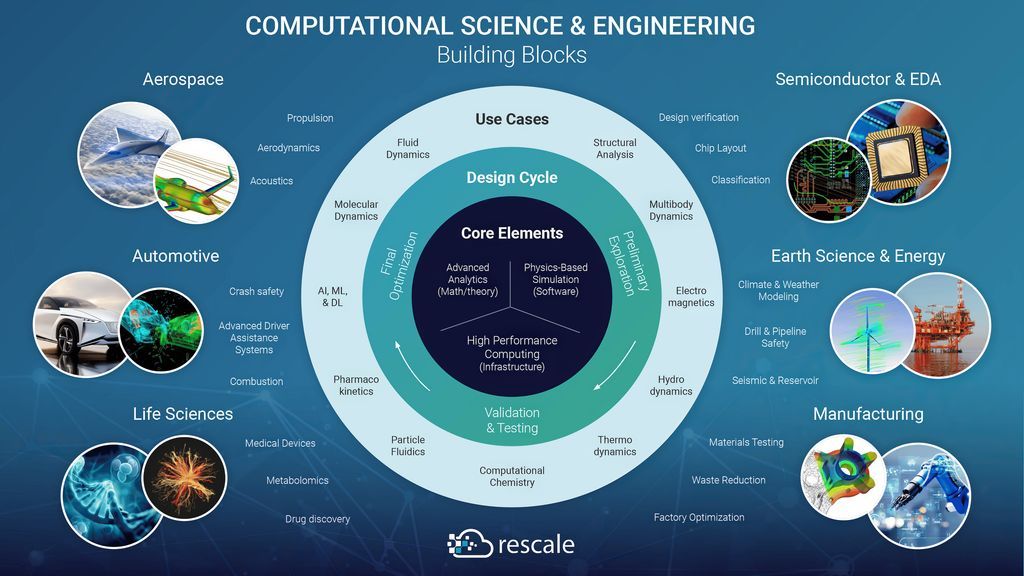

GPU for LLM, AI, ML and #HPC. #BigData #Analytics #DataScience #AI #MachineLearning #NLProc #IoT #IIoT #PyTorch #Python #RStats #TensorFlow #Java #JavaScript #ReactJS #GoLang #CloudComputing #Serverless #DataScientist #Linux #Programming #Coding #100DaysofCode

References

ProphetStor. (n.d.). Maximizing GPU efficiency in multitenant LLM training [White paper]. Retrieved March 10, 2025, from prophetstor.com/white-papers…

VanLee, G. (2023, March 22). The importance of high-performance computing as a service (HPCaaS) for research and innovation [Blog post]. Rescale. Retrieved March 10, 2025, from rescale.com/blog/the-importa…

→ View original post on X — @gp_pulipaka, 2026-04-12 04:42 UTC

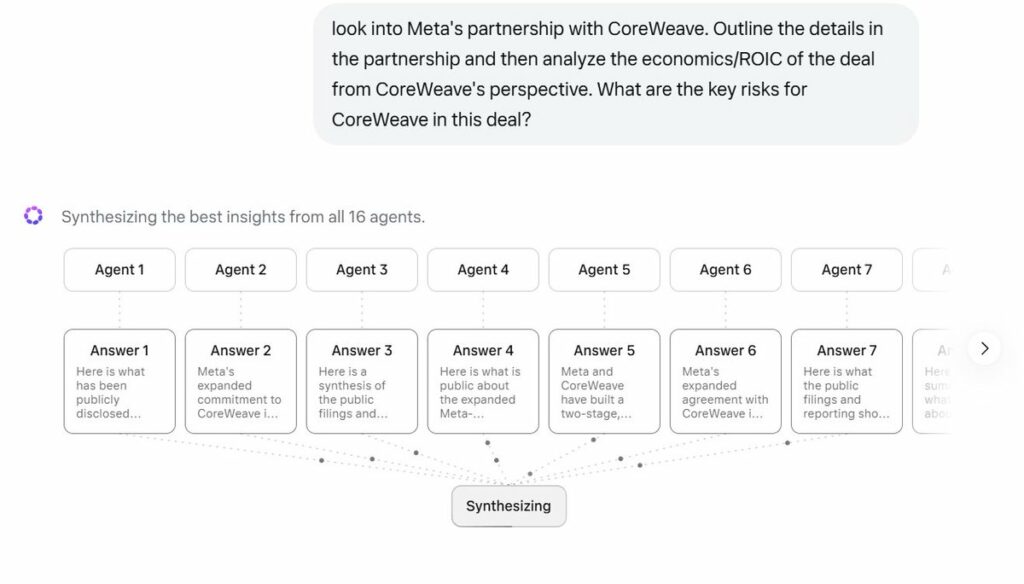

Check out Contemplating mode for your most complex reasoning queries!

Mostly Borrowed Ideas (@borrowed_ideas): Not usually a Meta AI user, but wanted to give them a shot after the latest model release (it's free anyway). So I installed the app on my desktop, and noticed "contemplating" mode (didn't see that on the mobile app btw). When I asked a question, 16 agents simultaneously started working on the question which looks pretty cool! — https://nitter.net/borrowed_ideas/status/2043040075928474080#m

→ View original post on X — @alexandr_wang, 2026-04-12 04:00 UTC