What if your AI could truly “think in pictures,” not just describe them? Researchers from Peking University, Kling Team, and Amazon AGI introduce Monet-7B, a new framework that lets multimodal AI reason directly inside visual latent space, with no external tools needed. Instead of …

@jiqizhixin

-

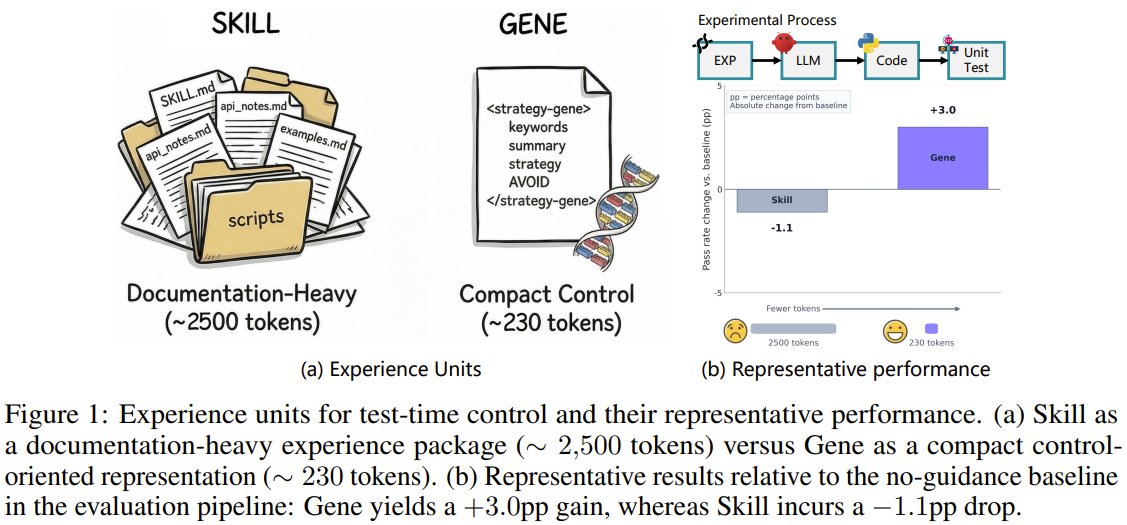

Strategy Genes: AI Evolution Through Compact Reusable Code

Can you turn messy trial-and-error into pure strategy, like a game AI evolving mid-battle? Researchers from Tsinghua University and EvoMap rethink how AI reuses past experience. Instead of bulky “skill manuals,” they propose “Strategy Genes”: compact, evolution-ready code …

-

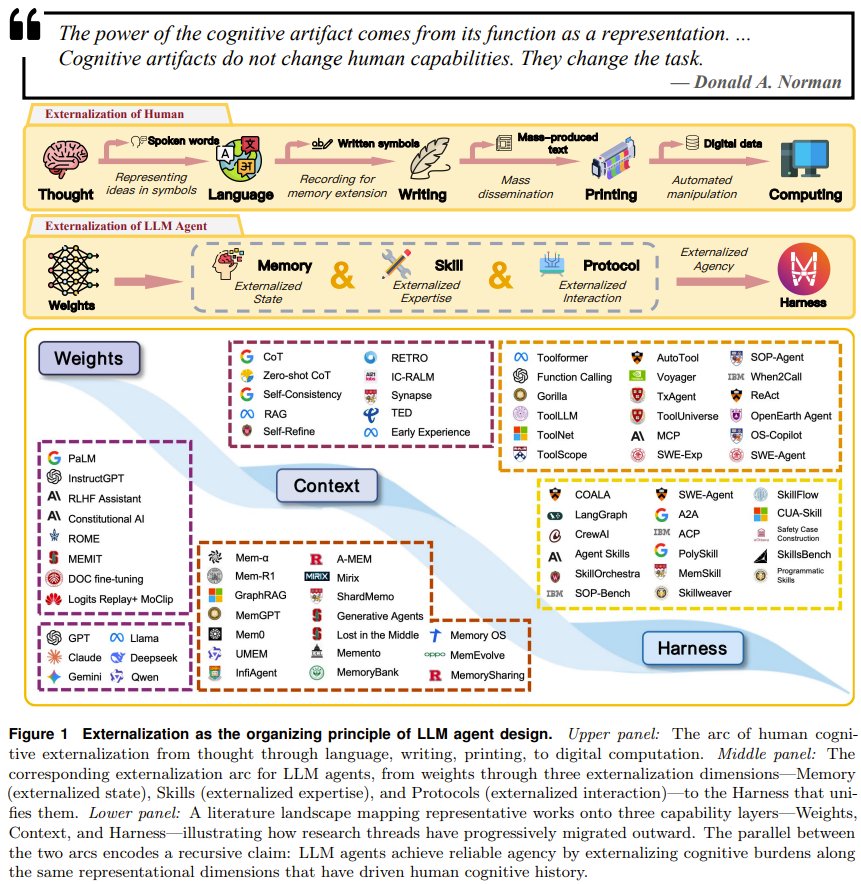

Beyond Model Size: Smart Scaffolding for LLM Agents

Think building a better AI agent just means building a better brain? What if the real secret is what you add outside the model? A team from Shanghai Jiao Tong University, OPPO, and others argues that the future of LLM agents lies not in bigger weights but in smarter scaffolding. They introduce a …

-

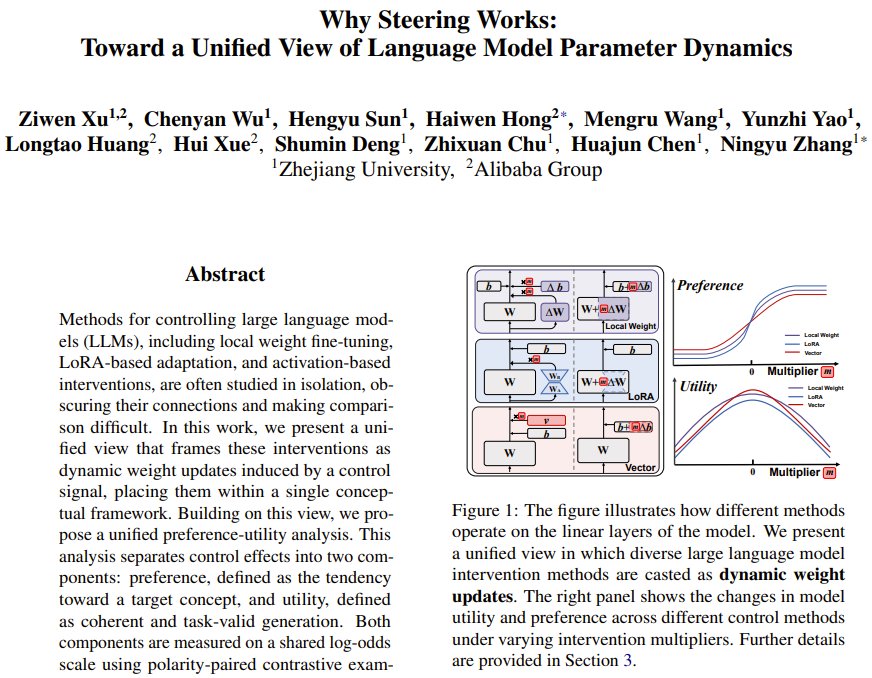

Unified Framework Controls Large Language Models Without Breaking Them

Can you control a large language model without breaking its brain? Researchers from Zhejiang University and Alibaba Group show how. They unify model control methods such as fine-tuning, LoRA, and activation edits into a single framework, separating their effects into “preference” …

-

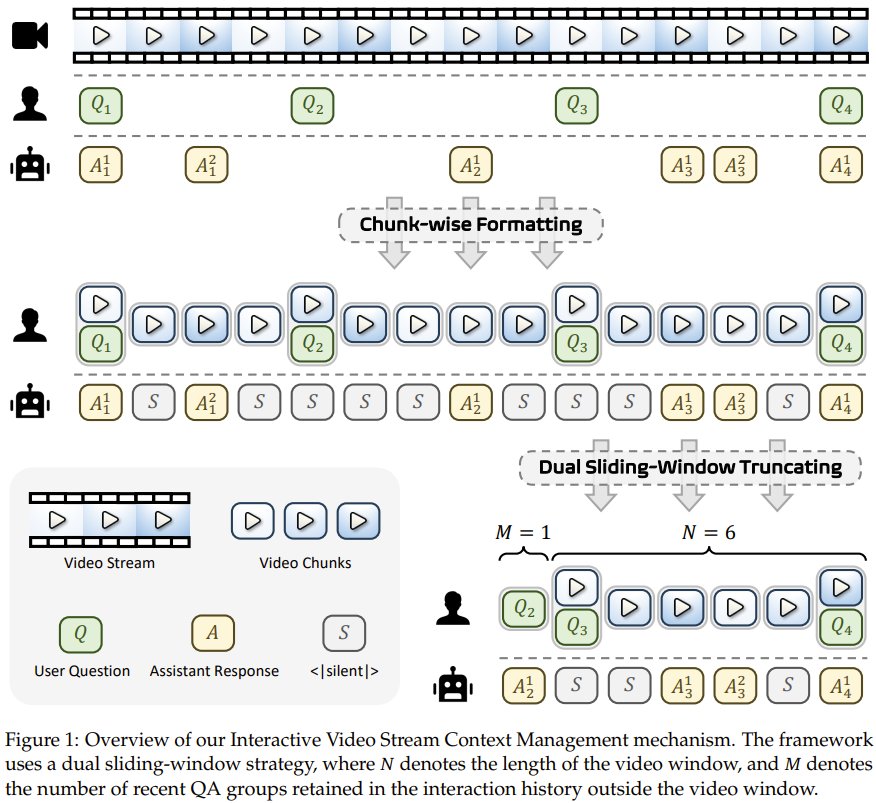

Huawei AURA: Real-Time AI Video Processing System

What if your AI could watch a live video feed and answer your questions, or even alert you, all in real time? Researchers from Huawei Research and CUHK MMLab introduce AURA. This new system allows a single video AI to continuously process live streams, enabling instant …

-

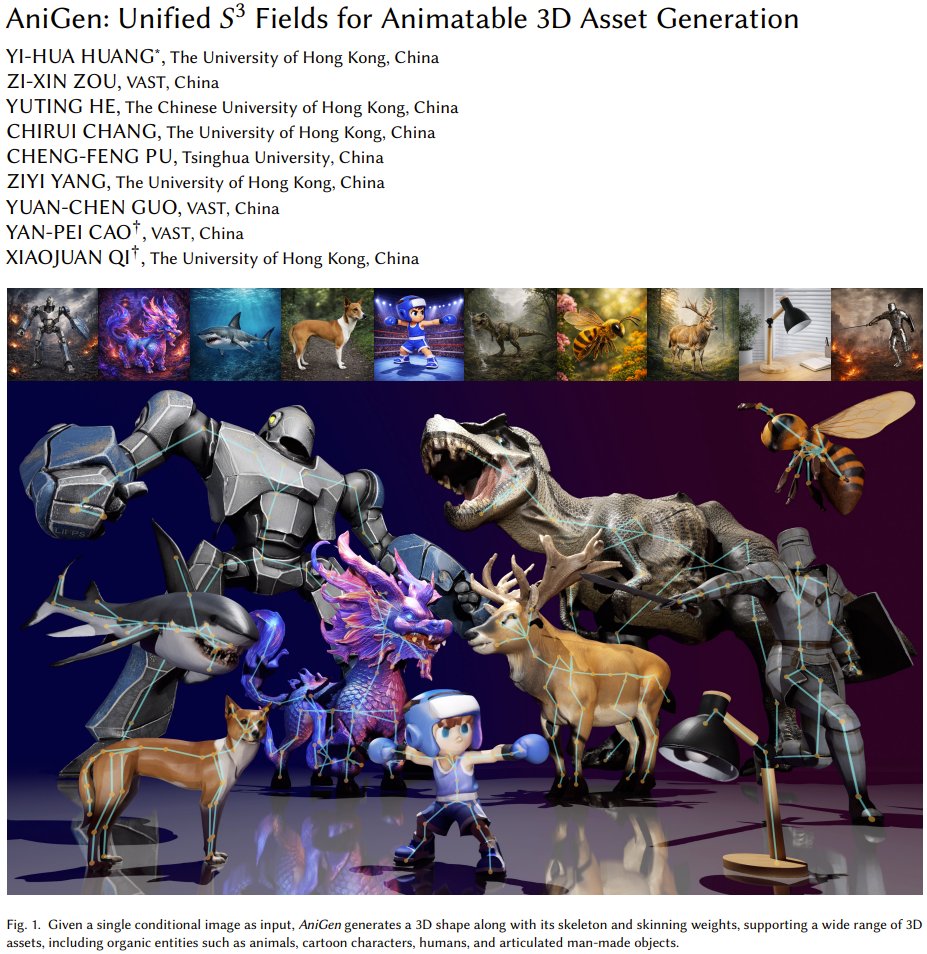

Single Photo to Rigged 3D Character Generation with AniGen

What if you could generate a fully rigged, animatable 3D character from just a single photo? Researchers from The University of Hong Kong, VAST, CUHK, and Tsinghua University present AniGen, which does exactly that. They created a unified system that simultaneously generates a 3D …

-

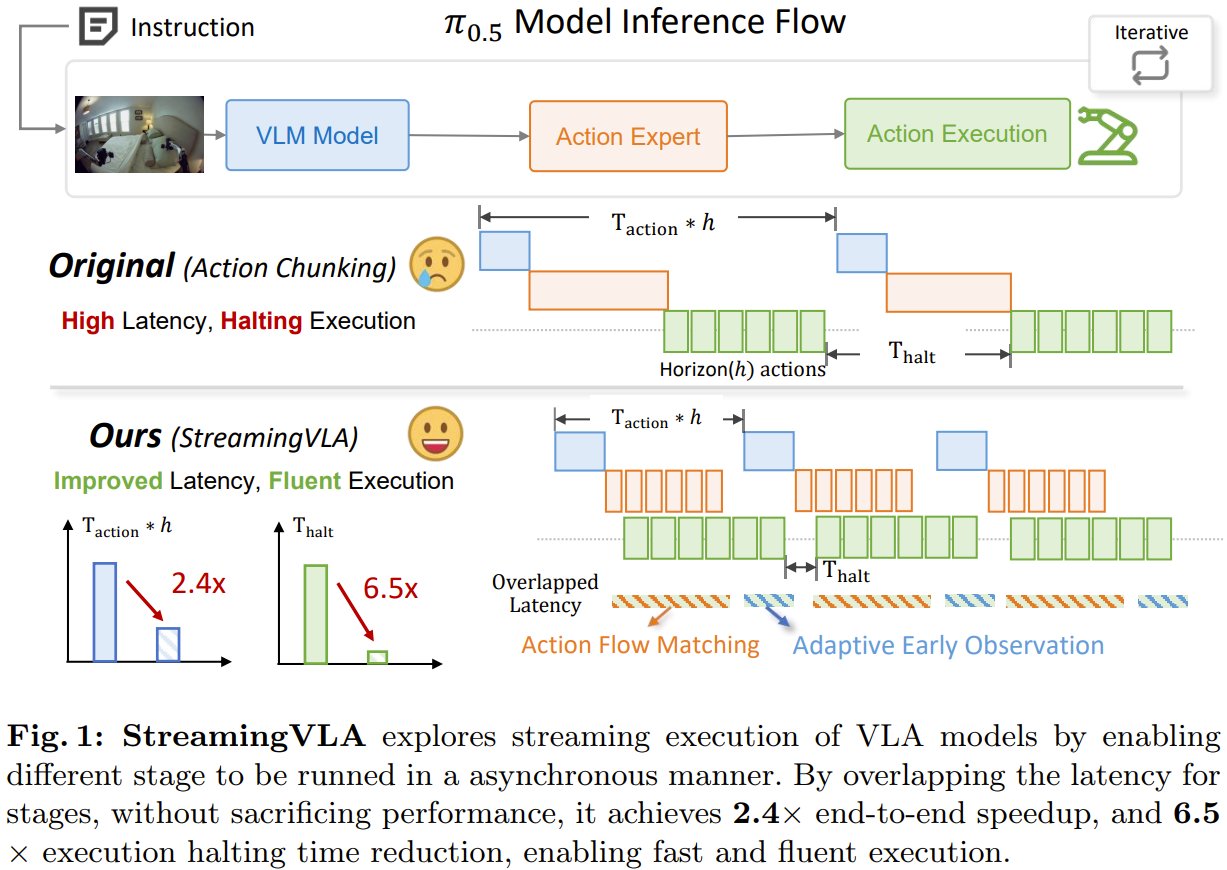

StreamingVLA: Parallel Vision-Language-Action for AI Robots

What if your AI robot could think and act simultaneously, instead of waiting between steps? Researchers from Tsinghua University and Lenovo present StreamingVLA. The new method lets a vision-language-action model run its “see,” “think,” and “act” stages in parallel, like a …

-

NES: AI Framework Predicts Your Next Code Edit

What if your IDE could predict your next code edit before you even type it? Researchers at Ant Group present NES, a new AI framework that learns from past editing patterns. It uses two models: one to guess where you'll edit next, and another to suggest what to change, all …

-

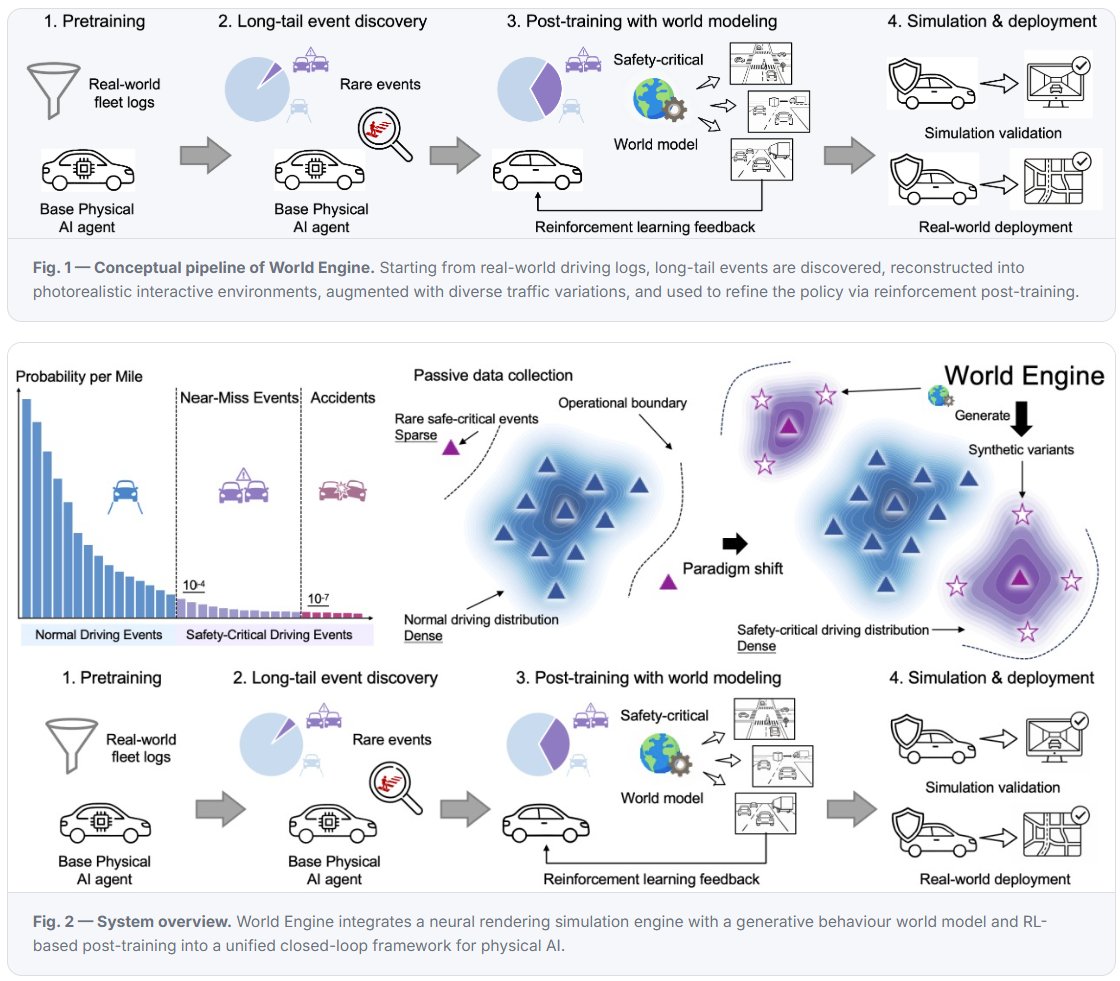

World Engine: Synthetic Edge Cases for Autonomous Driving Training

What if you could train a self-driving car on its hardest moments, not just its longest drives? Researchers from OpenDriveLab, Huawei, NVIDIA, and others present World Engine. Instead of just adding more miles of normal driving data, it generates massive volumes of synthetic edge cases, such as cut-ins and …

-

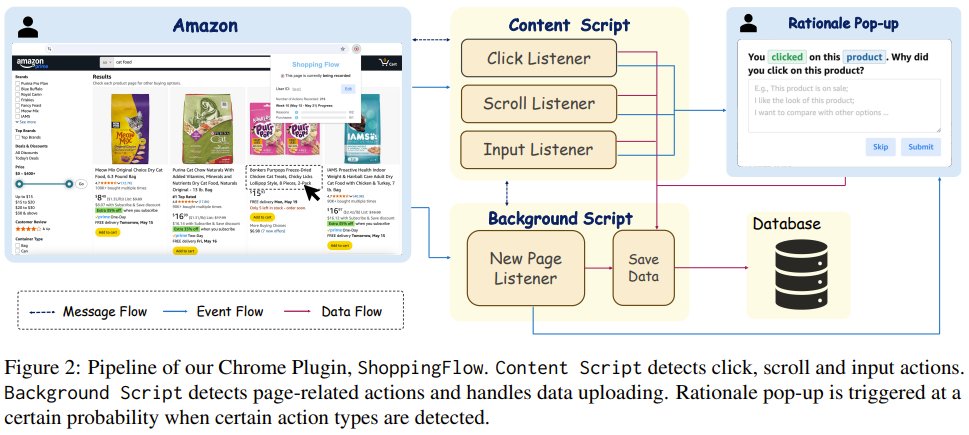

Can LLMs Truly Emulate Individual Human Online Personas?

Can LLMs truly think and act like a specific person online? Researchers from Northeastern, USC, Columbia, and others present OPeRA, a new dataset that captures real people’s shopping behavior: their persona, screen view, actions, and internal reasoning. It’s the first public …