Why do AI models sometimes obsess over useless words? Researchers from Tsinghua University, The University of Hong Kong, and Meituan LongCat Team present the first comprehensive survey on Attention Sink. The problem: Transformers often waste attention on a few meaningless

@jiqizhixin

-

DeepSeek’s Multimodal Model Live: Will It Be Open-Sourced?


DeepSeek’s multimodal model is now live, and some users are already able to try it out. So far, it’s performing pretty well. Now the question is: will this one also be open-sourced? Tomorrow might be a good time!

-

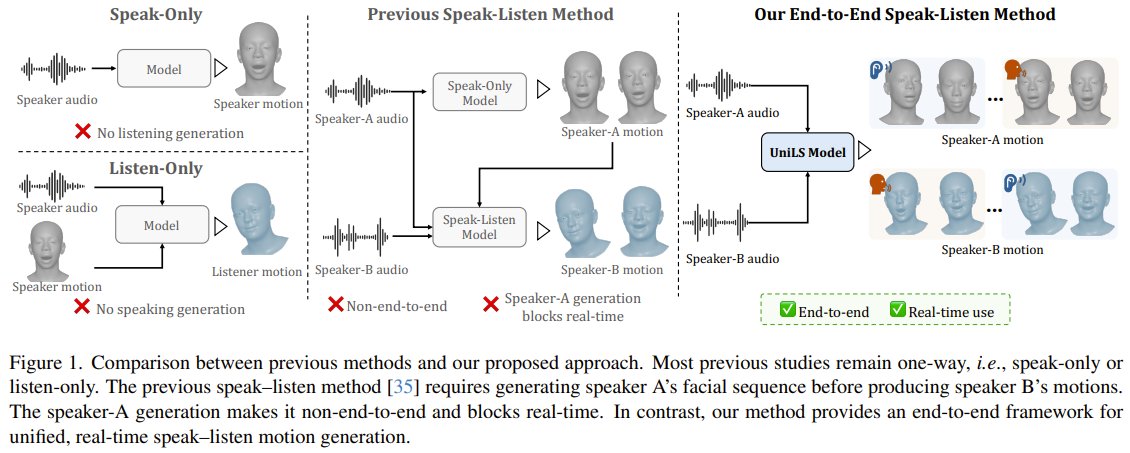

UniLS: Natural Avatar Listening Through Facial Movement Learning


Can an avatar truly listen as naturally as it speaks? Researchers from Shanda AI Research and the University of Tokyo present UniLS. They solved the "stiff listener" problem by first teaching an AI the natural rhythm of facial movements without audio, then fine-tuning it with

-

AI Method Reveals Hidden Rules in Complex Systems


Can your AI truly grasp the hidden rules of a complex system without forgetting the important details? Researchers from UCL, Imperial College London, and the Santa Fe Institute have cracked the code. They introduce an information-theoretic Lagrangian method that balances

-

LLM Safety Bias: Why AI Refuses Harmless Requests


Why do AI assistants suddenly say "I can't help with that" to perfectly harmless requests? Researchers from Hefei University of Technology and iFLYTEK Research have identified the root cause: LLMs develop a cognitive bias in their internal safety encoding, where even innocent

-

GenericAgent: AI Learning Without Expanded Memory Requirements


What if your AI agent could actually learn on the job without needing a bigger memory chip? Enter GenericAgent. Think of GenericAgent as a digital Marie Kondo for information. Instead of cramming every past command and tool manual into its brain, it only keeps the high-value

-

AI Model Lacks Knowledge of Historical and Technological Events


Interesting, this is an AI model that doesn't know about World War II or the Cold War. It might not even know that digital computers or AI itself exist! https://t.co/gx7Z5e55rs

机器之心 JIQIZHIXIN (@jiqizhixin), April 28, 2026

-

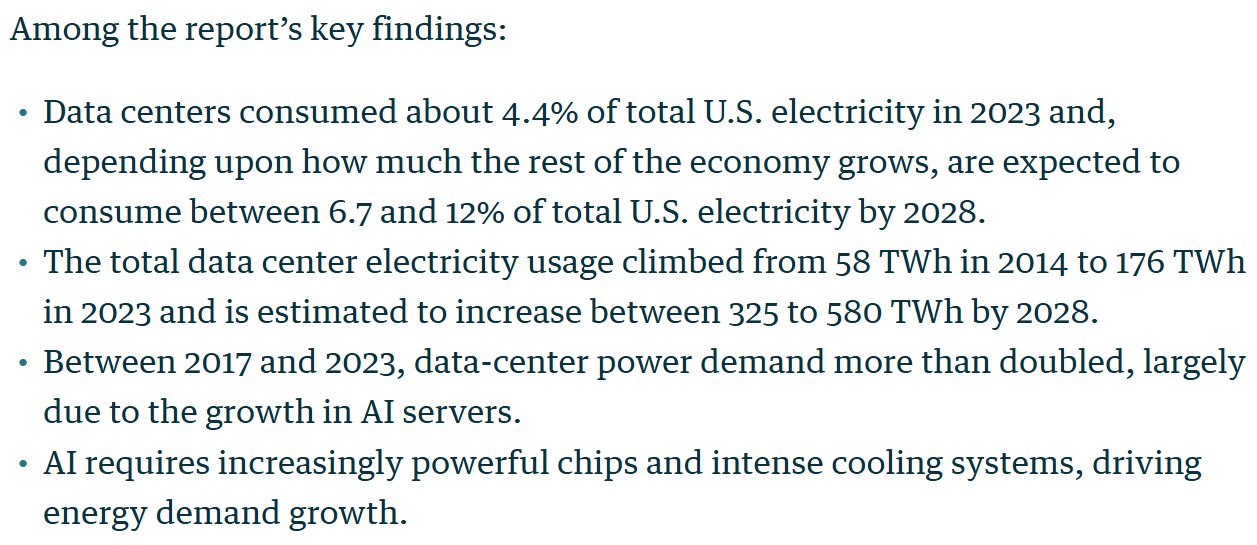

U.S. Data Centers to Consume 12% of Electricity by 2028


By 2028, U.S. data centers could gobble up 12% of the country’s electricity — driven by the AI boom, per Lawrence Berkeley Lab.

-

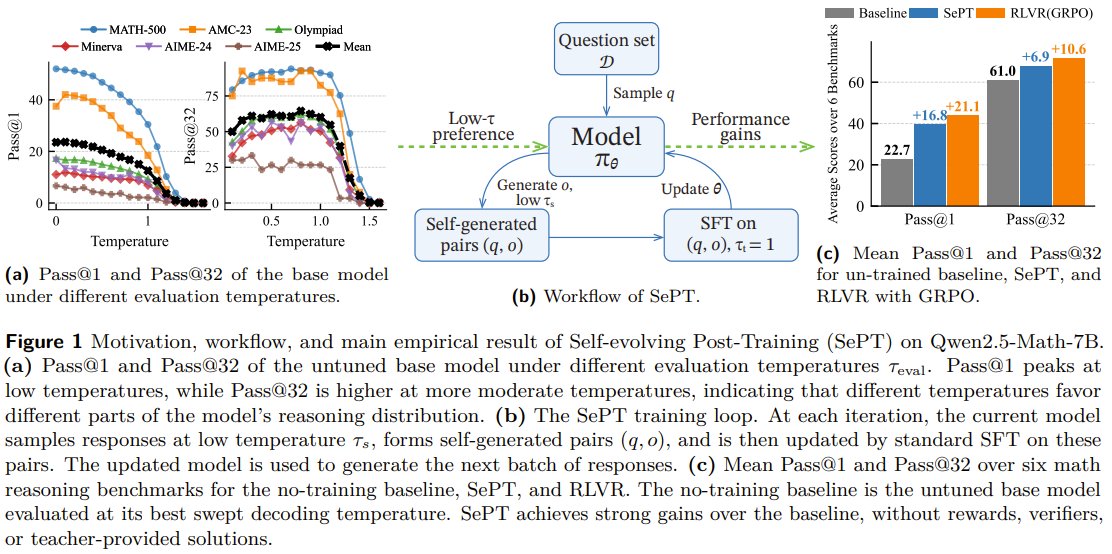

AI Models Learn Self-Improvement Without External Rewards


Can an AI teach itself to reason better without any outside reward? Researchers from CUHK-Shenzhen, SJTU, and CUHK present SePT. They let a language model generate its own reasoning examples by using "low-temperature" (more focused) responses, then train on that new data in a

-

ControlAudio: Precise AI Audio Generation Control System


What if you could tell an AI to generate audio starting at exactly 3 seconds, with perfect speech clarity? Researchers from Tsinghua University and Shengshu AI (with USTC and Monash) present ControlAudio. Instead of just typing a prompt, this system handles three instructions