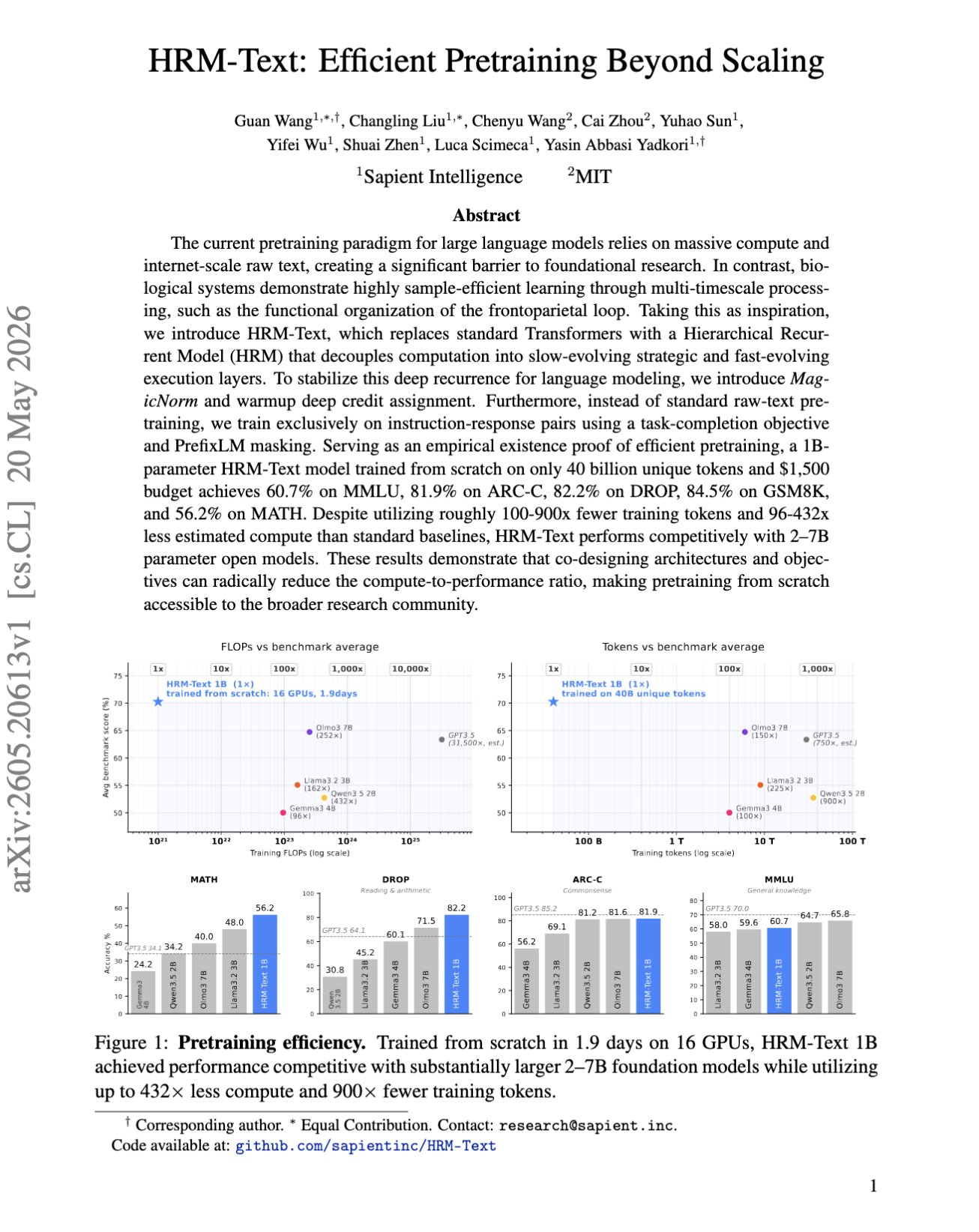

HRM beats Transformers seven times its size in language modeling!? "HRM-Text: Efficient Pretraining Beyond Scaling" This paper introduces the Hierarchical Recurrent Model (HRM), which incorporates slow planning layers and fast execution layers to enhance planning and recurrence. The model was trained directly on…

@askalphaxiv

-

Stochastic Tiny Recursive Models Improve PPBench

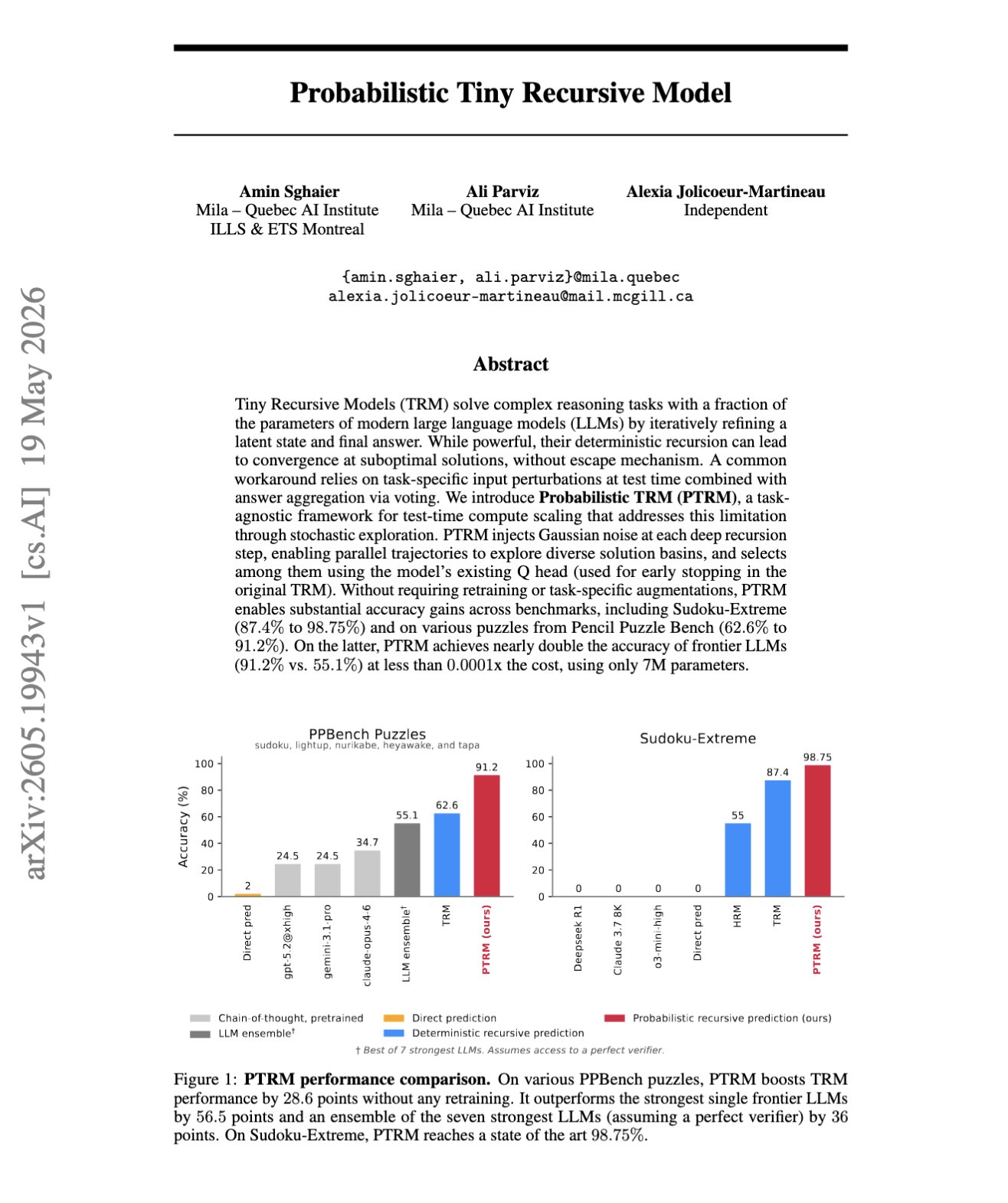

“Probabilistic Tiny Recursive Model” This paper makes Tiny Recursive Models stochastic at test time by adding Gaussian noise, running parallel rollouts, and using the existing Q head to pick the best answer. With no retraining and no task-specific tricks, its PPBench jumps from

-

alphaXiv Partners with ACM CAIS

We’re excited to announce our partnership with the ACM Conference on AI and Agentic Systems! alphaXiv will serve as the complimentary research hub for accepted papers and system demos, helping the community discover, understand, and build on top of the

-

AutoResearchClaw: Autonomous AI Research with Collaboration

"AutoResearchClaw: Self-Reinforcing Autonomous Research with Human-AI Collaboration" As real-world science is iterative, this paper introduces AutoResearchClaw, a multi-agent system that debates ideas, self-corrects experiments, decides whether to pivot or refine, verifies data, and

-

LLM Traces Mask a Myopic Planner

“Extracting Search Trees from LLM Reasoning Traces Reveals Myopic Planning” Reasoning models can write traces that look like real tree search, but this paper shows their decisions are mostly driven by shallow one-step evaluation. They extract search trees from LLM CoT in

-

SkillSynth Builds Skill Graphs for Terminal Agent Training

"Toward Scalable Terminal Task Synthesis via Skill Graphs" Terminal-agent training needs more diverse workflows. This Tencent Hunyuan paper, SkillSynth, builds a graph of terminal scenarios and skills, samples paths through it, then turns those paths into executable tasks. It

-

Meituan Longcat Introduces Asynchronous RL for LLM Training

Asynchronous RL for LLM training. Meituan Longcat fixes the rollout bottleneck from long reasoning traces by keeping multiple policy versions alive at once. Long trajectories can now stay on their original policy, so training can keep moving without dropping samples or breaking

-

Speculative Decoding Accelerates RL Post-Training Rollouts

"Accelerating RL Post-Training Rollouts via System-Integrated Speculative Decoding" Speculative decoding for RL rollouts! This paper speeds up post-training without changing the target policy’s sampling distribution. A draft model proposes multiple tokens, and the policy

-

GenLIP Trains ViT to Directly Predict Caption Tokens

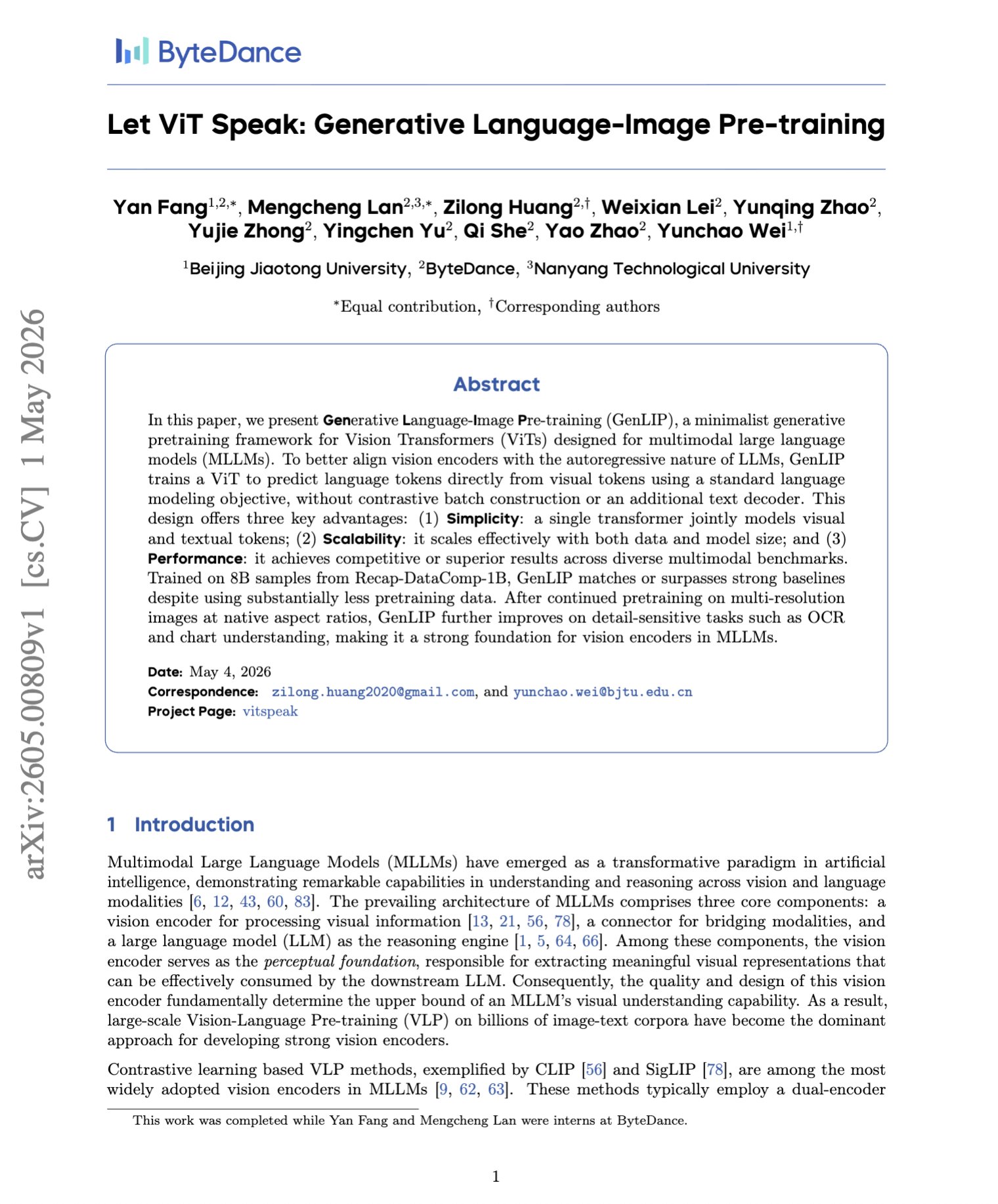

"Let ViT Speak: Generative Language-Image Pre-training" Instead of contrastive image-text matching or adding a separate text decoder, this paper, GenLIP, trains a ViT to directly predict caption tokens from image patches with a standard next-token loss. A key component they use

-

Representation Fréchet Loss Enables Direct FID Optimization for Visual Generation

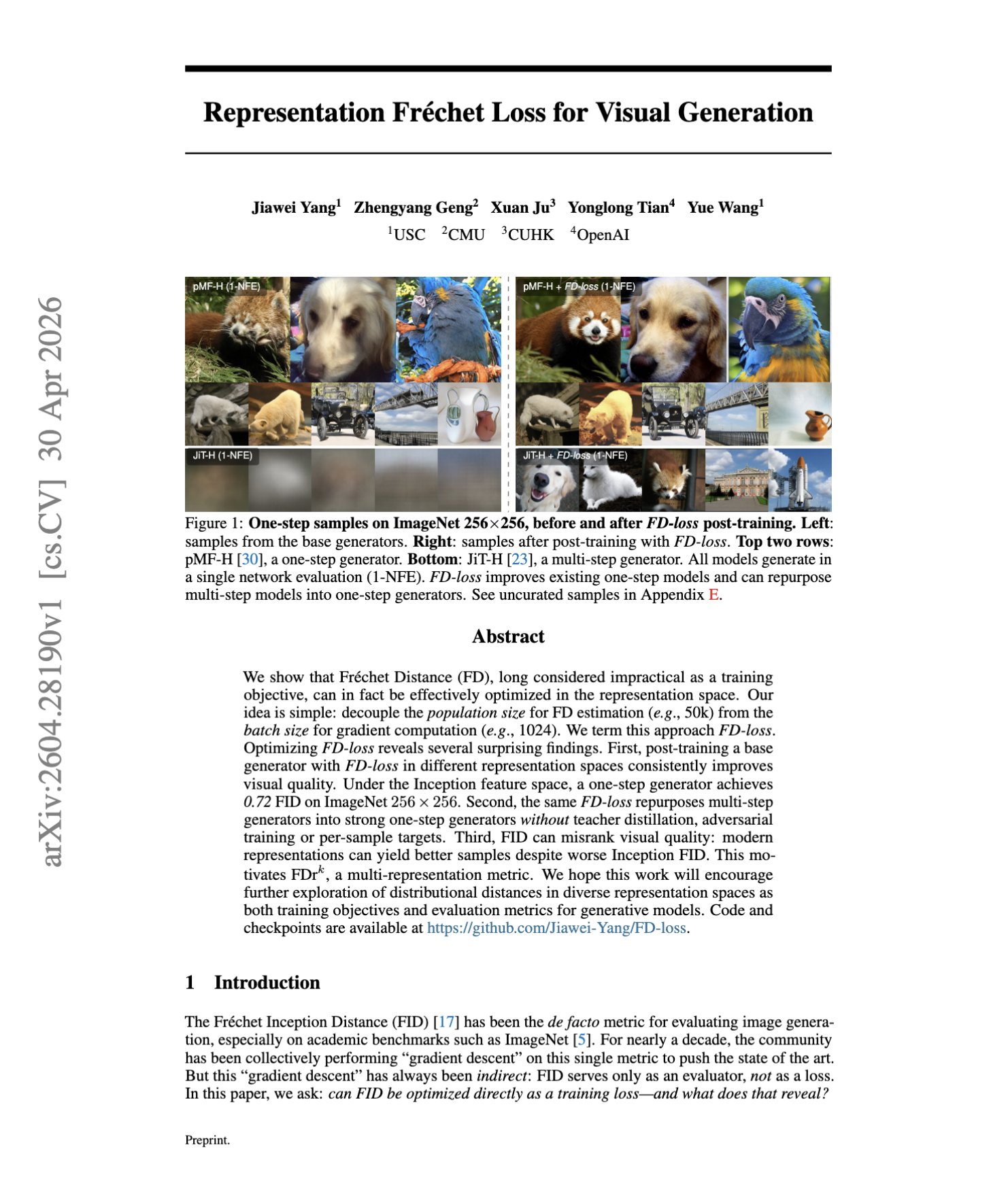

“Representation Fréchet Loss for Visual Generation” FID has long been generative modeling's scoreboard: everyone optimizes toward it indirectly, but almost nobody trains on it directly. This paper shows that you actually can. They achieve this by estimating