“Qwen-VLA: Unifying VLA Modeling across Tasks, Environments, and Robot Embodiments” They turned robot learning into one vision-language-action modeling problem instead of separate policies for each task, environment, and robot body. So by adding a DiT flow-matching action

@askalphaxiv

-

Gemini Embedding 2: Native Multimodal Embedding Model

"Gemini Embedding 2" This paper turns Gemini into one native embedding model for text, image, video, audio, and interleaved multimodal inputs. Instead of converting everything into text first, it embeds raw modalities directly into one shared space, improving audio search,

-

LeJEPA World Model Learning Under Gaussian Latent Dynamics

New paper from Yann LeCun! "When Does LeJEPA Learn a World Model?" This paper proves that under Gaussian latent dynamics, LeJEPA can recover the hidden state behind nonlinear observations up to rotation. The intuition is that linear latent features are the most stable across

-

On-Policy Distillation: Emerging AI Post-Training Method

A new class of post-training methods is emerging in 2026: On-Policy Distillation (OPD). It’s already showing up across frontier open-weight model releases, and it’s quickly becoming a technique worth understanding. To help you get up to speed, we’ve compiled a list of the most

-

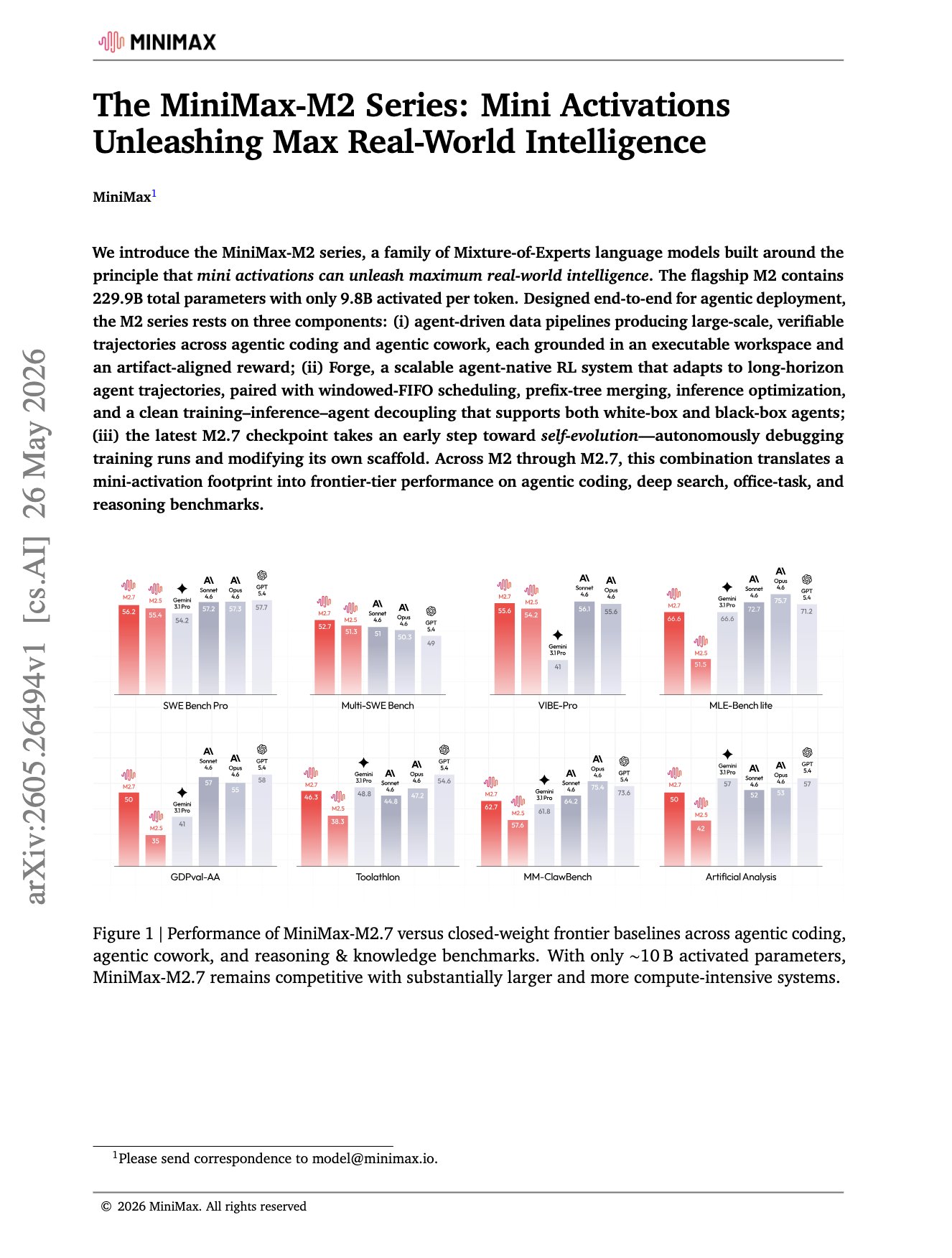

MiniMax-M2 Agent-Native RL Training Paper Released

The MiniMax-M2 paper just dropped. The key focus of M2 is on something more agent-native. It trains on runnable workspaces and artifact-grounded rewards, then uses Forge to scale RL over long coding, app, search, and office-task trajectories. What's interesting is that M2.7

-

Looped Transformers: Frozen Checkpoint Inference Optimization

Another cool piece of research on Looped Transformers. They ask the question: "Can we loop a frozen, off-the-shelf checkpoint directly at inference time without any modifications?" Naive repetition pushes hidden states outside the distribution later layers expect, so performance

-

Language Models Sleep: Context Replay for Deep Reasoning

"Language Models Need Sleep" Instead of thinking longer at answer time, this paper makes LLMs sleep before forgetting. They replay old context, write it into fast weights, clear the KV cache, and answer later at normal speed. More sleep improves deep reasoning over long and

-

DeepMind LLMs Lean Proof-Search Agents Solve Open Problems

This new DeepMind research turns LLMs into Lean proof-search agents, so every step must compile and the final proof is mechanically verified. Under this setup, they solved 9 open Erdős problems, proved 44 OEIS conjectures, and helped advance actual research in optimization,

-

ConvexTok: Linear Programming for Optimal LLM Tokenization

"Tokenisation via Convex Relaxations" Most LLM tokenizers still use BPE, a greedy merge algorithm that can waste vocab slots on locally good but globally suboptimal tokens. This paper turns tokenizer training into a linear program, then rounds the solution into ConvexTok. This

-

Code as Agent Harness: Meta Paper Reframes AI Systems

"Code as Agent Harness" Agents are becoming less like chatbots that write code and more like systems that run on code. This new Meta paper reframes code as the harness around an agent, the executable layer for reasoning, acting, memory, verification, and coordination. The key