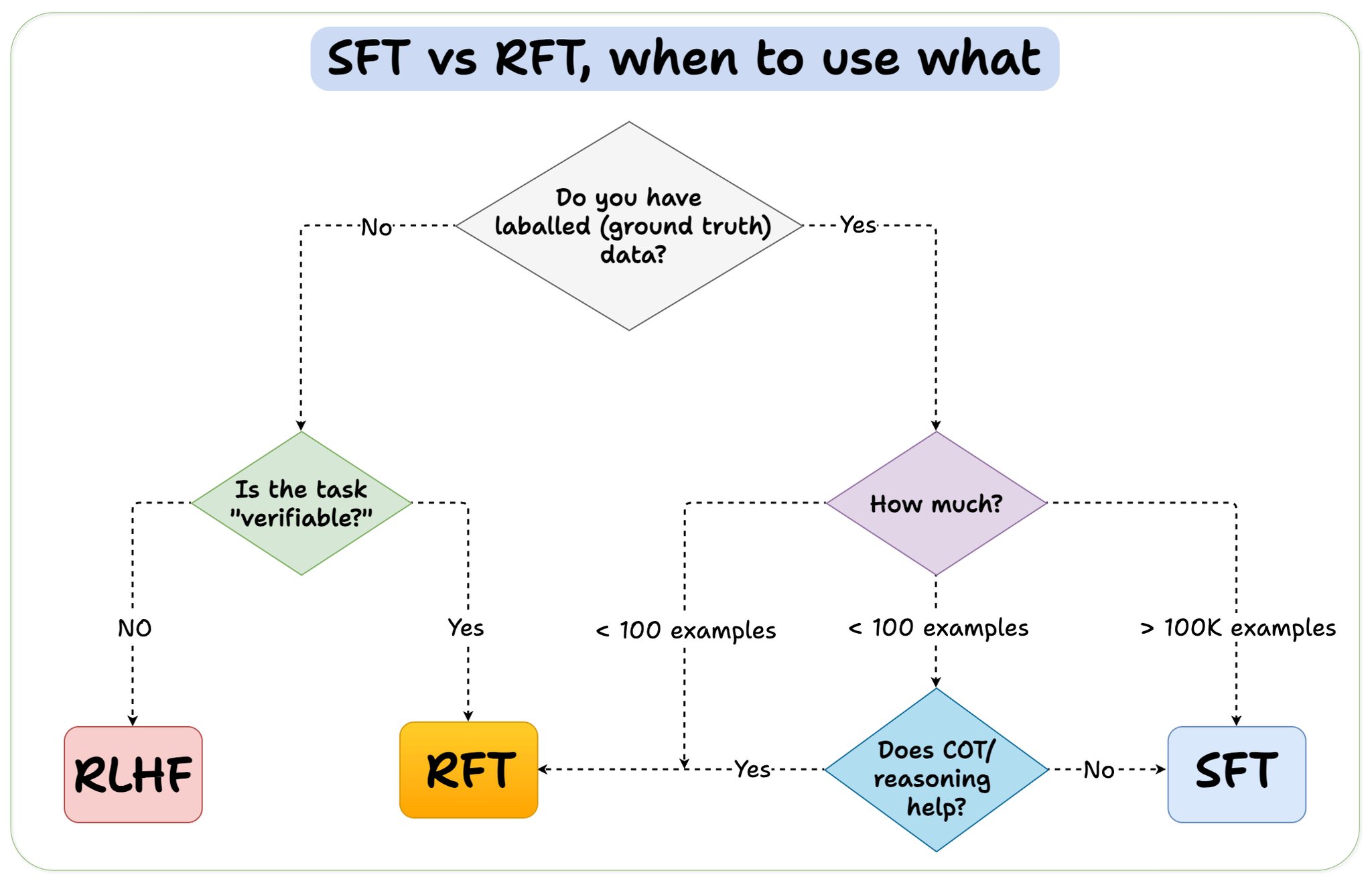

Before we conclude, let me address an important question: When should you use reinforcement fine-tuning (RFT) versus supervised fine-tuning (SFT)? I created this diagram to provide an answer:

@akshay_pachaar

-

GRPO Training with HuggingFace TRL's GRPOTrainer

By

–

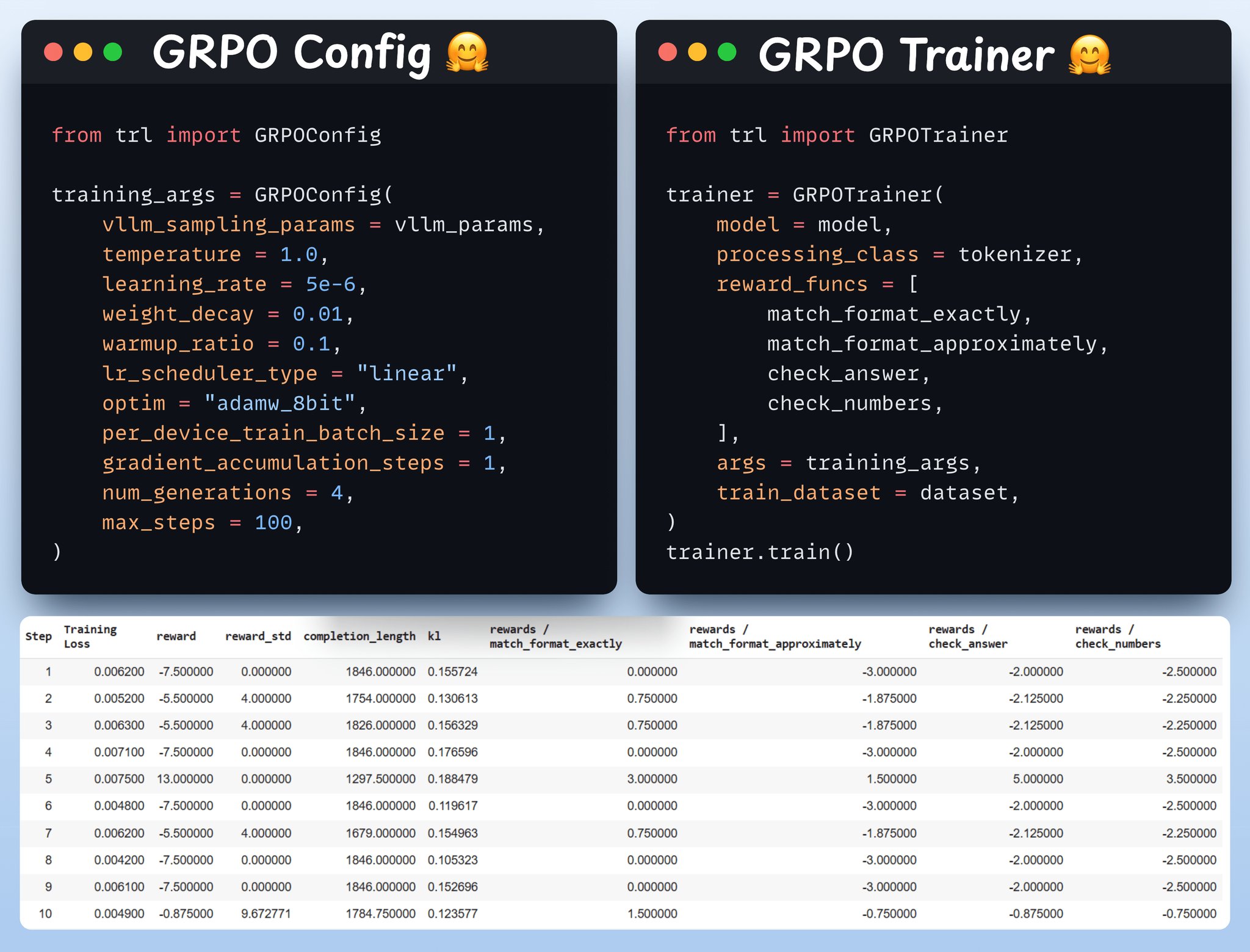

Use GRPO and start training.

Now that we have the dataset and reward functions ready, it's time to apply GRPO. HuggingFace TRL provides everything we described in the GRPO diagram out of the box, in the form of GRPOConfig and GRPOTrainer. Check this out:
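GRPOTrainer takes the reward functions as a list through its reward_funcs argument. As far as we know, recent TRL versions score every completion with each function and add the results up, optionally weighted by reward_weights in GRPOConfig. The stdlib-only sketch below imitates just that aggregation step so it runs without TRL. The toy rewards non_empty and short_enough and the helper combine_rewards are hypothetical, and they take plain strings rather than the chat-message lists TRL passes for conversational data.

```python
from typing import Callable, List, Optional, Sequence

# A reward function maps a batch of completions to one float per completion.
RewardFn = Callable[[Sequence[str]], List[float]]


def non_empty(completions: Sequence[str]) -> List[float]:
    """Toy reward (hypothetical): 1.0 if the completion contains any text."""
    return [1.0 if c.strip() else 0.0 for c in completions]


def short_enough(completions: Sequence[str]) -> List[float]:
    """Toy reward (hypothetical): 0.5 if the completion is at most 200 characters."""
    return [0.5 if len(c) <= 200 else 0.0 for c in completions]


def combine_rewards(
    completions: Sequence[str],
    reward_funcs: Sequence[RewardFn],
    weights: Optional[Sequence[float]] = None,
) -> List[float]:
    """Weighted sum of every reward function's score, per completion (sketch)."""
    if weights is None:
        weights = [1.0] * len(reward_funcs)  # default: all functions count equally
    if len(weights) != len(reward_funcs):
        raise ValueError("need exactly one weight per reward function")
    totals = [0.0] * len(completions)
    for fn, w in zip(reward_funcs, weights):
        for i, score in enumerate(fn(completions)):
            totals[i] += w * score
    return totals


if __name__ == "__main__":
    batch = ["The answer is 4.", "", "x" * 500]
    print(combine_rewards(batch, [non_empty, short_enough]))  # [1.5, 0.5, 1.0]
```

Summing keeps each reward function small and single-purpose: a completion can still earn partial credit (for example, for formatting) even when another check gives it nothing.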

-

GRPO Reward Functions: Format Matching and Answer Validation


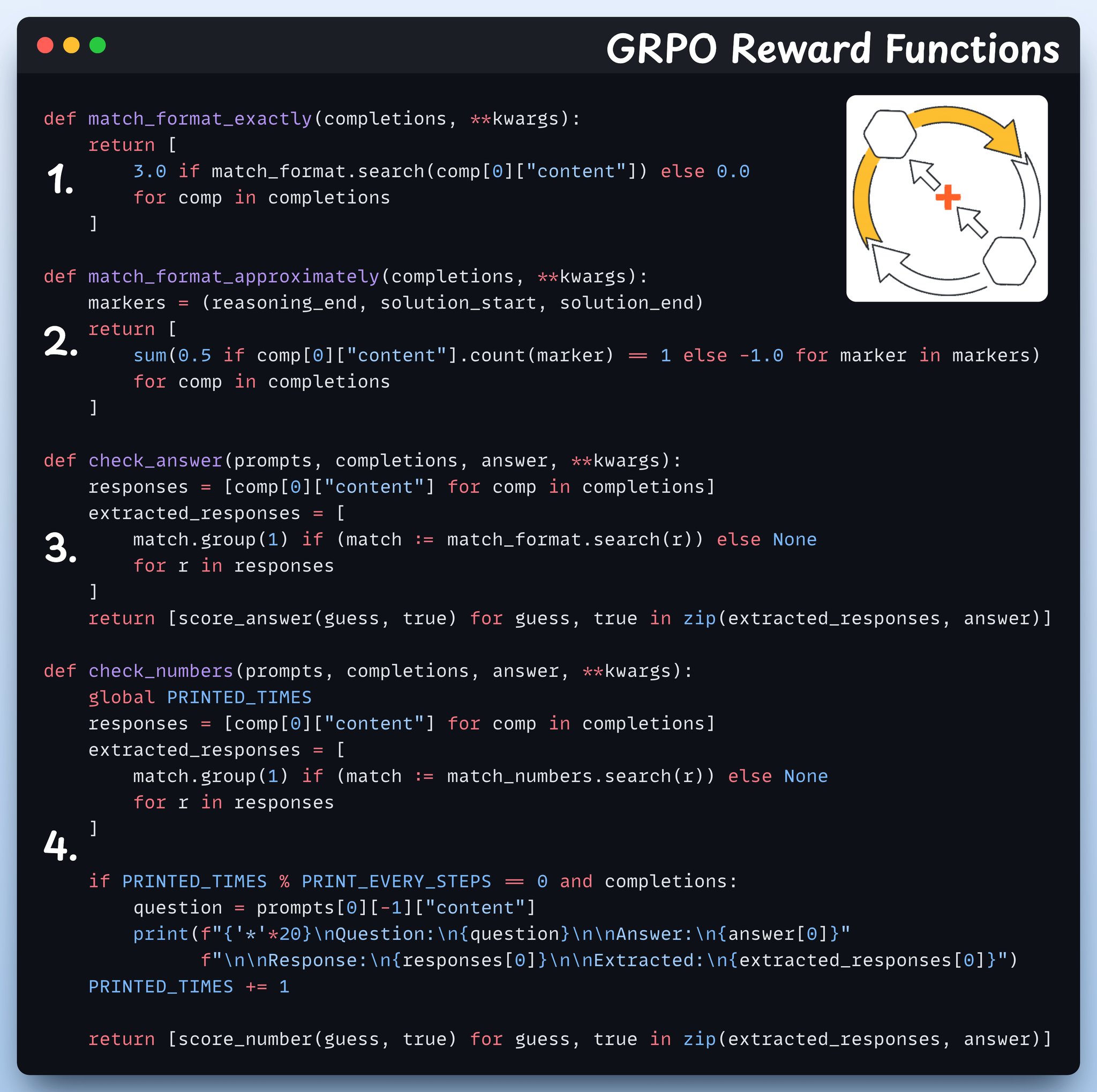

Define reward functions.

In GRPO, we use deterministic functions to validate each response and assign it a reward. No manual labelling required! The reward functions:

– Match format exactly

– Match format approximately

– Check the answer

– Check numbers

Check this out:

-
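The four reward functions listed in the post above can be sketched as plain Python functions that map a batch of completions to one score each. This is a minimal sketch, not the author's code: it assumes a hypothetical output format with the reasoning inside <reasoning> tags and the final answer inside <answer> tags, and the reward magnitudes (3.0, 0.5, 1.5) are arbitrary choices. In a TRL run, extra dataset columns such as the reference answer are forwarded to reward functions as keyword arguments; here the answers are passed explicitly.

```python
import re
from typing import List, Sequence

# Hypothetical output format: reasoning inside <reasoning> tags,
# the final answer inside <answer> tags.
EXACT_FORMAT = re.compile(
    r"^\s*<reasoning>.+?</reasoning>\s*<answer>.+?</answer>\s*$", re.DOTALL
)
ANSWER = re.compile(r"<answer>(.*?)</answer>", re.DOTALL)
NUMBER = re.compile(r"-?\d+(?:\.\d+)?")
TAGS = ("<reasoning>", "</reasoning>", "<answer>", "</answer>")


def match_format_exactly(completions: Sequence[str]) -> List[float]:
    """3.0 if the entire completion follows the expected layout, else 0.0."""
    return [3.0 if EXACT_FORMAT.match(c) else 0.0 for c in completions]


def match_format_approximately(completions: Sequence[str]) -> List[float]:
    """Partial credit: +0.5 for each tag that appears exactly once, -0.5 otherwise."""
    return [sum(0.5 if c.count(t) == 1 else -0.5 for t in TAGS) for c in completions]


def check_answer(completions: Sequence[str], answers: Sequence[str]) -> List[float]:
    """3.0 if the text inside <answer> matches the reference string exactly."""
    rewards = []
    for c, ref in zip(completions, answers):
        m = ANSWER.search(c)
        rewards.append(3.0 if m and m.group(1).strip() == ref.strip() else 0.0)
    return rewards


def check_numbers(completions: Sequence[str], answers: Sequence[str]) -> List[float]:
    """1.5 if the first number inside <answer> equals the reference numerically."""
    rewards = []
    for c, ref in zip(completions, answers):
        m = ANSWER.search(c)
        found = NUMBER.search(m.group(1)) if m else None
        try:
            ok = found is not None and float(found.group()) == float(ref)
        except ValueError:  # the reference is not a plain number
            ok = False
        rewards.append(1.5 if ok else 0.0)
    return rewards


if __name__ == "__main__":
    good = "<reasoning>2 + 2 = 4</reasoning>\n<answer>4</answer>"
    sloppy = "<reasoning>I think it's four<answer>The result is 4.0</answer>"
    batch, refs = [good, sloppy], ["4", "4"]
    print(match_format_exactly(batch))        # [3.0, 0.0]
    print(match_format_approximately(batch))  # [2.0, 1.0]
    print(check_answer(batch, refs))          # [3.0, 0.0]
    print(check_numbers(batch, refs))         # [1.5, 1.5]
```

The "sloppy" completion shows why the approximate and numeric checks exist: it misses a closing tag and phrases its answer loosely, yet still earns some reward instead of a flat zero.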

Formatting Open R1 Math Dataset for Reasoning Training


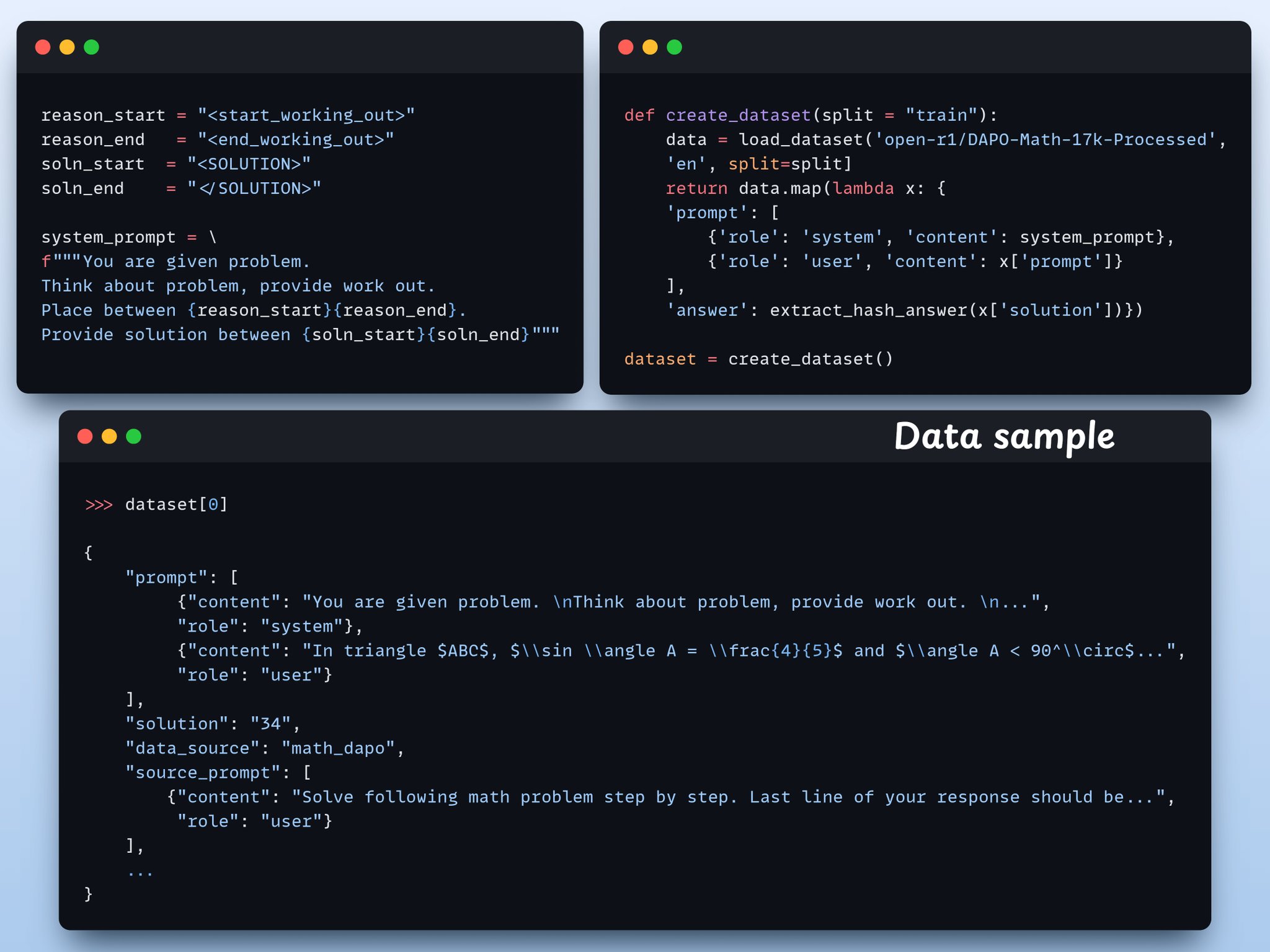

Create the dataset.

We load the Open R1 Math dataset (a dataset of math problems) and format it for reasoning. Each sample includes:

– A system prompt enforcing structured reasoning

– A question from the dataset

– The answer in the required format

Check this code:

-
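The dataset step described in the post above can be sketched as one function applied per record. This is a minimal sketch under stated assumptions: each raw record is assumed to have problem and answer fields (hypothetical here; check the real Open R1 Math column names), and the system prompt is a hypothetical one matching a <reasoning>/<answer> layout. It produces a conversational prompt column, which is the column name TRL's GRPOTrainer reads, plus the reference answer in its own column for the reward functions. Loading the dataset is omitted so the sketch needs only the standard library.

```python
from typing import Dict, List

# Hypothetical system prompt asking for the <reasoning>/<answer> layout.
SYSTEM_PROMPT = (
    "Think through the problem step by step. "
    "Write your reasoning between <reasoning> and </reasoning>, "
    "then write only the final answer between <answer> and </answer>."
)


def format_sample(record: Dict[str, str]) -> Dict[str, object]:
    """Turn a raw {'problem': ..., 'answer': ...} record into a chat-style sample."""
    prompt: List[Dict[str, str]] = [
        {"role": "system", "content": SYSTEM_PROMPT},  # enforces structured reasoning
        {"role": "user", "content": record["problem"]},  # the question itself
    ]
    # The reference answer stays in its own column so reward functions can check it.
    return {"prompt": prompt, "answer": record["answer"].strip()}


if __name__ == "__main__":
    sample = format_sample({"problem": "What is 2 + 2?", "answer": " 4 "})
    print(sample["prompt"][1]["content"])  # What is 2 + 2?
    print(repr(sample["answer"]))          # '4'
```

With the Hugging Face datasets library, dataset.map(format_sample) would apply the same function to every row.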

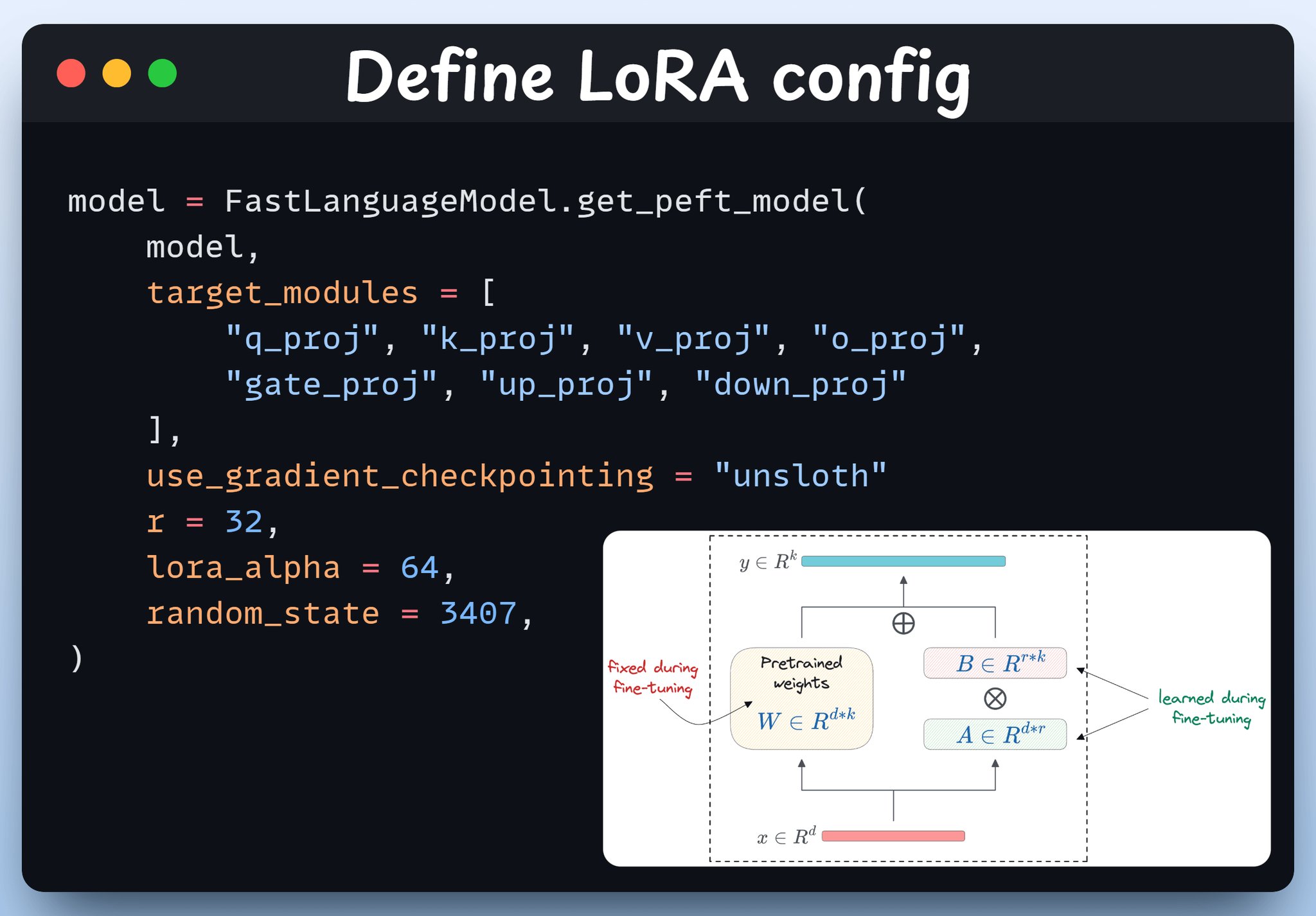

Configuring LoRA for Fine-Tuning with Unsloth PEFT


Define the LoRA config.

We'll use LoRA to avoid fine-tuning all of the model's weights. In this code, we use Unsloth's PEFT support by specifying:

– The model

– The LoRA rank (r)

– The target modules to fine-tune, etc.

Check this:

-
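Why the LoRA setup above is cheap comes down to simple arithmetic. LoRA keeps a pretrained weight W of shape d_out × d_in frozen and learns a low-rank update BA (applied up to a scaling factor), where B is d_out × r and A is r × d_in. Each adapted matrix therefore adds r(d_in + d_out) trainable parameters instead of d_out·d_in. The sketch below does that count; the Llama-style module names and the 4096 hidden size are assumptions for illustration, not values from the post.

```python
from typing import Dict, Tuple


def lora_params(d_out: int, d_in: int, r: int) -> int:
    """Trainable parameters LoRA adds to one d_out x d_in weight: B (d_out x r) plus A (r x d_in)."""
    return d_out * r + r * d_in


def count_params(shapes: Dict[str, Tuple[int, int]], r: int) -> Tuple[int, int]:
    """Return (params updated by full fine-tuning, params trained by LoRA) for the given matrices."""
    full = sum(d_out * d_in for d_out, d_in in shapes.values())
    lora = sum(lora_params(d_out, d_in, r) for d_out, d_in in shapes.values())
    return full, lora


if __name__ == "__main__":
    # Hypothetical layer: four 4096 x 4096 attention projections (Llama-style names).
    layer = {name: (4096, 4096) for name in ("q_proj", "k_proj", "v_proj", "o_proj")}
    full, lora = count_params(layer, r=16)
    print(full, lora)  # 67108864 524288
```

For r = 16 on these four projections, LoRA trains 524,288 parameters instead of 67,108,864, under 1% of the weights in those matrices; raising r grows that count linearly.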

GRPO: Reinforcement Learning Method for Fine-Tuning LLMs Explained


What is GRPO?

— Akshay 🚀 (@akshay_pachaar) May 2, 2026

Group Relative Policy Optimization is a reinforcement learning method that fine-tunes LLMs for math and reasoning tasks using deterministic reward functions, eliminating the need for labeled data.

Here's a brief overview of GRPO before we jump into code: pic.twitter.com/EX8hI5eEAI
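The "group relative" part of GRPO can be made concrete. For each prompt, GRPO samples a group of G completions, scores them with the reward functions, and normalizes each reward against its own group, so no separate value (critic) model is needed: A_i = (r_i - mean(r)) / (std(r) + ε). The sketch below computes only this normalization step; the clipped policy-gradient update and the KL penalty are left out. Using the population standard deviation and ε = 1e-4 are assumptions, since implementations differ.

```python
import statistics
from typing import List, Sequence


def group_relative_advantages(rewards: Sequence[float], eps: float = 1e-4) -> List[float]:
    """A_i = (r_i - mean(r)) / (std(r) + eps), computed within one prompt's group."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)  # population std: an assumption, see above
    return [(r - mean) / (std + eps) for r in rewards]


if __name__ == "__main__":
    # Rewards for G = 4 completions sampled for the same prompt.
    advantages = group_relative_advantages([1.0, 0.0, 1.0, 0.0])
    print([round(a, 3) for a in advantages])  # [1.0, -1.0, 1.0, -1.0]
    # Identical rewards: every advantage is zero, so no learning signal.
    print(group_relative_advantages([2.0, 2.0, 2.0]))  # [0.0, 0.0, 0.0]
```

When every completion in a group receives the same reward, every advantage is zero and that prompt contributes nothing to the update, which is one reason partial-credit rewards (such as approximate format matching) are useful.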

-

Building a Reasoning Model Without Manual Labels Using Verifiable Outputs


When outputs are verifiable, labels become optional.

— Akshay 🚀 (@akshay_pachaar) May 2, 2026

Maths, code, and logic can be automatically checked and validated.

Let's use this fact to build a reasoning model without manual labelling.

We'll use:

– @UnslothAI for parameter-efficient finetuning.

– @HuggingFace TRL to… pic.twitter.com/0FVo2aKfSU
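For maths, "automatically checked" can be as simple as an exact numeric comparison. The minimal sketch below is an illustration, not the author's verifier. It accepts a predicted final answer when it denotes the same rational number as the reference, so "0.5", "1/2" and "2/4" all verify. It relies on Python's fractions.Fraction, which parses integers, decimals and simple fractions exactly; anything else counts as wrong.

```python
from fractions import Fraction
from typing import Optional


def parse_number(text: str) -> Optional[Fraction]:
    """Parse '3', '-0.25', '1/2' or '2.5e1' exactly; return None if it is not a number."""
    try:
        return Fraction(text.strip())
    except (ValueError, ZeroDivisionError):  # not numeric, or a zero denominator
        return None


def verify_math_answer(predicted: str, reference: str) -> bool:
    """Illustrative verifier: True only when both strings denote the same rational number."""
    p, r = parse_number(predicted), parse_number(reference)
    return p is not None and r is not None and p == r


if __name__ == "__main__":
    print(verify_math_answer("0.5", "1/2"))   # True
    print(verify_math_answer("2/4", "0.50"))  # True
    print(verify_math_answer("half", "1/2"))  # False
```

Exact rational comparison sidesteps float rounding (0.1 + 0.2 != 0.3 in binary floating point). Free-form expressions, units or LaTeX answers would need a more capable checker.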

-

Fine-Tuning Alone Won’t Make LLMs Better at Math Reasoning


You're in a Research Scientist interview at Google.

Interviewer: We have a base LLM that's terrible at maths. How would you turn it into a maths & reasoning powerhouse?

You: I'll get some problems labeled and fine-tune the model.

Interview over.

Here's what you missed:

-

How to Write Skills That Never Fail for AI Agents


How to write Skills that never fail.

— Akshay 🚀 (@akshay_pachaar) May 1, 2026

Writing good skills for agents is one of the highest-leverage skills you can develop today.

But many Claude skills fail silently. They never trigger, they trigger on the wrong prompts, or they skip the steps they were written to enforce.… pic.twitter.com/IeEgQCPuWW

-

Agent Latency Optimization: Router and Manager Bottlenecks


Agreed on both. Latency depends mostly on the agent blocks, not the connections themselves (those are basically free). With a decent local model the bottleneck is inference time per agent call, so the router and manager agents dominate. Most of the 29 edges are just data
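The claim that edges are nearly free while agent calls dominate can be made concrete. If each agent block has an inference time and data edges cost roughly nothing, end-to-end latency is the longest (critical) path through the workflow graph, summed over node costs. The sketch below computes that with Python's graphlib. The node names, timings and edges are invented for illustration and are not the 29-edge workflow discussed above; running independent branches in parallel is also an assumption.

```python
from graphlib import TopologicalSorter
from typing import Dict, Iterable, Mapping


def critical_path_latency(
    node_cost: Mapping[str, float],
    preds: Mapping[str, Iterable[str]],
    edge_cost: float = 0.0,
) -> float:
    """End-to-end latency of a DAG of agent calls, assuming independent branches run in parallel."""
    nodes = set(node_cost) | set(preds)
    graph = {n: set(preds.get(n, ())) for n in nodes}  # node -> its predecessors
    finish: Dict[str, float] = {}
    for node in TopologicalSorter(graph).static_order():
        # A node starts once its slowest predecessor has finished and handed over data.
        start = max((finish[p] + edge_cost for p in graph.get(node, ())), default=0.0)
        finish[node] = start + node_cost.get(node, 0.0)
    return max(finish.values(), default=0.0)


if __name__ == "__main__":
    # Hypothetical workflow: inference time per agent call, in seconds.
    cost = {"router": 1.2, "search": 0.8, "calc": 0.3, "manager": 1.5}
    preds = {"search": ["router"], "calc": ["router"], "manager": ["search", "calc"]}
    print(critical_path_latency(cost, preds))  # 3.5
    print(critical_path_latency(cost, preds, edge_cost=0.01))
```

With edge_cost near zero, the only way to cut latency is to shorten the slow agent calls on the critical path (here router, search and manager), which is consistent with router and manager calls dominating.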