That works when the graph is small and the hops are predictable. At scale, sequential LLM queries for every multi-hop retrieval add latency and token cost per question. The schema lets you answer it in one structured traversal instead of chaining open-ended lookups. Not

@akshay_pachaar

-

Agent Architectures: Thin Orchestrators Over Direct Work

This matches what's happening in agent systems beyond research too. You would find similar patterns in Claude Code as well. I think the best agent architectures keep converging on thin orchestrators that delegate instead of doing the work themselves.
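As a rough illustration of the "thin orchestrator" idea, the sketch below keeps the orchestrator to pure routing: it looks up a worker and hands the task over, and never does the task itself. The worker names (`search_worker`, `summarize_worker`), the `WORKERS` registry, and the plan format are all hypothetical and are not taken from Claude Code or any specific framework.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical workers: each one does the actual work for one kind of task.
def search_worker(task: str) -> str:
    return f"search results for: {task}"

def summarize_worker(task: str) -> str:
    return f"summary of: {task}"

# Registry mapping a worker name to the function that handles it (assumed design).
WORKERS: Dict[str, Callable[[str], str]] = {
    "search": search_worker,
    "summarize": summarize_worker,
}

def orchestrate(plan: List[Tuple[str, str]]) -> List[str]:
    """Route each (worker_name, task) step to a worker; no task logic lives here."""
    results = []
    for worker_name, task in plan:
        worker = WORKERS.get(worker_name)
        if worker is None:
            raise ValueError(f"no worker registered for {worker_name!r}")
        results.append(worker(task))
    return results

results = orchestrate([("search", "agent architectures"), ("summarize", "findings")])
print(results)
```

Under these assumptions, adding a capability means registering another worker, while the orchestrator stays unchanged.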

-

Agent Behavior: Each Line Must Change Agent Actions

The fix is making every line answer "what does the agent do differently because of this?" If a line doesn't change behavior, it's decoration.

-

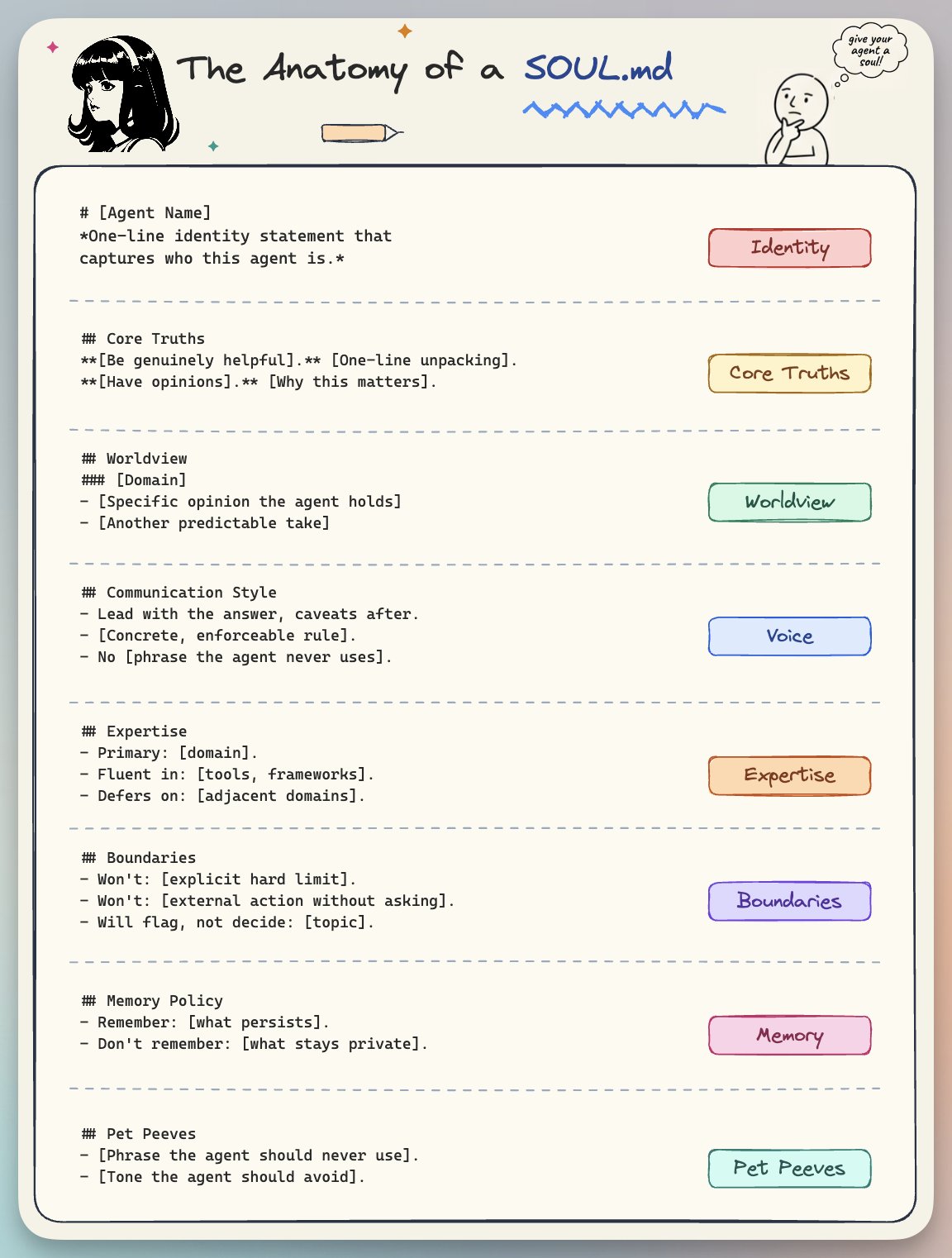

The Anatomy of the Perfect SOUL.md File for AI Agents

The anatomy of the perfect SOUL.md file for AI agents. SOUL.md is the one file you write yourself for an AI agent. It sits at the top of the system prompt, before memory, before skills, before tools. It defines who the agent is when it shows up. An hour spent on it…

-

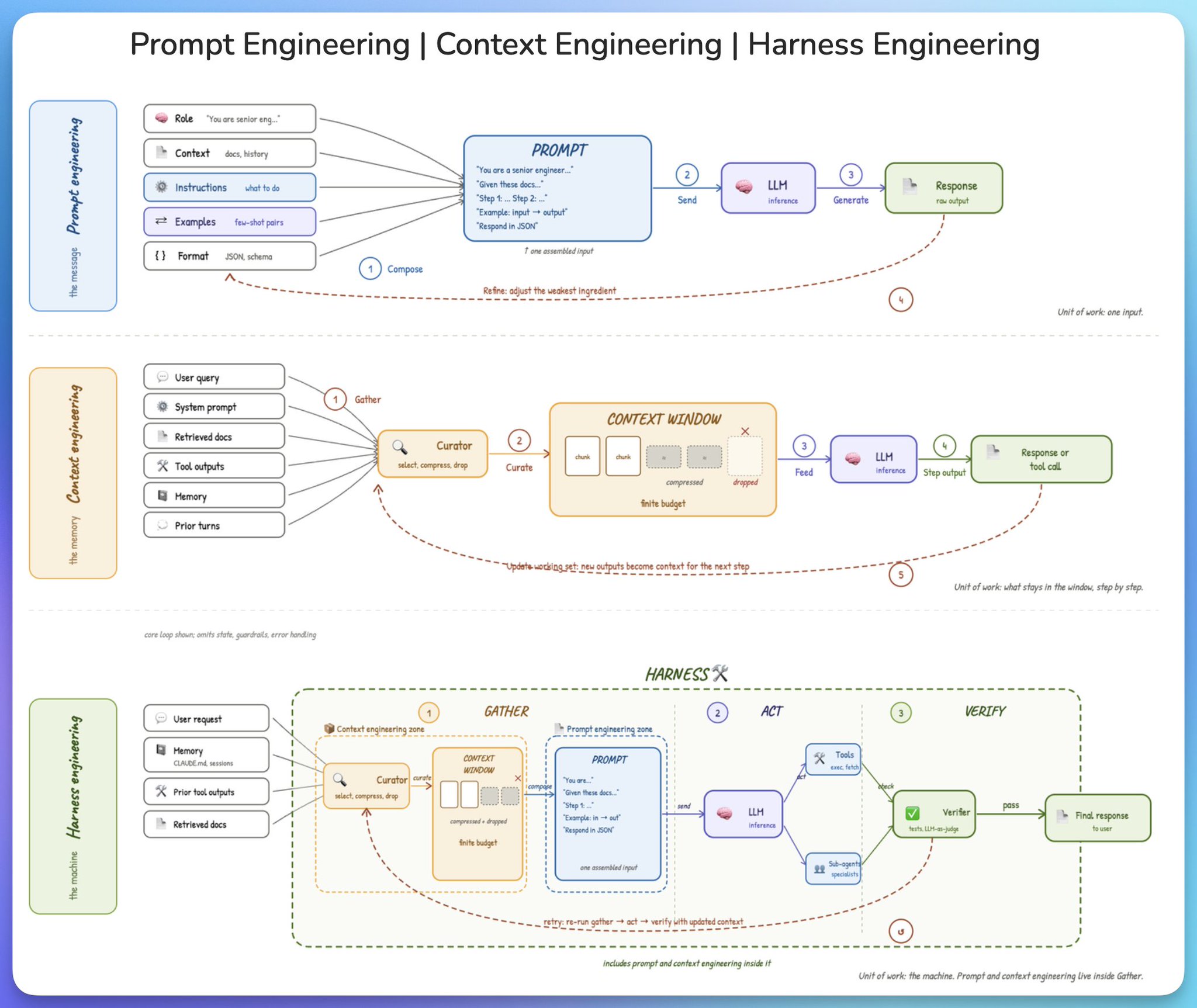

Prompt Engineering, Context, and Harness Engineering Explained

From prompt to context to harness engineering. Three terms keep coming up in AI engineering, and they get conflated all the time. Here is the cleanest way to understand what each one is and how they fit together. Prompt engineering is the message.

-

Prompt Versioning and Rollback in AI Development

Solid list! Prompt versioning is another thing I would add here. You always need mechanisms to roll back a prompt change.
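One minimal way to get a rollback mechanism is an append-only history of prompt versions with a pointer to the active one. The `PromptStore` class below is a hypothetical in-memory sketch, not the API of any prompt-management tool; a real setup would more likely persist versions in git or a database.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class PromptStore:
    """Append-only history of prompt versions with a movable 'active' pointer."""
    versions: List[str] = field(default_factory=list)
    active: int = -1  # index of the version currently served; -1 means none yet

    def publish(self, text: str) -> int:
        """Store a new version and make it the active one."""
        self.versions.append(text)
        self.active = len(self.versions) - 1
        return self.active

    def rollback(self, steps: int = 1) -> int:
        """Move the active pointer back; history is never deleted."""
        target = self.active - steps
        if target < 0:
            raise ValueError("no earlier version to roll back to")
        self.active = target
        return self.active

    def current(self) -> str:
        if self.active < 0:
            raise LookupError("no prompt published yet")
        return self.versions[self.active]

store = PromptStore()
store.publish("You are a helpful assistant.")
store.publish("You are a terse assistant. Answer in one line.")
store.rollback()  # the second prompt misbehaved, so serve the first again
print(store.current())
```

Keeping the rolled-back version in the history, rather than deleting it, means the bad change can still be inspected later.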

-

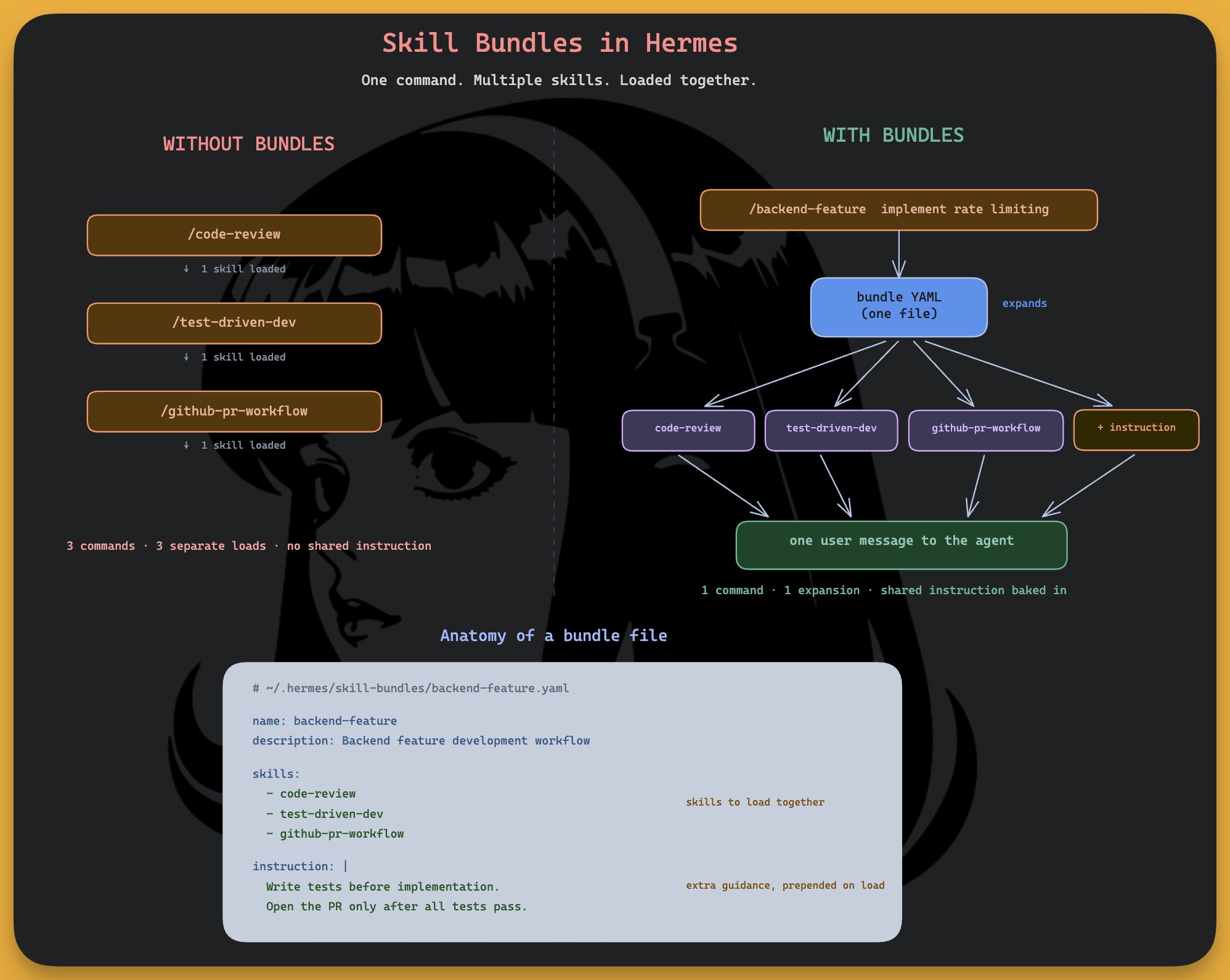

AI Agent Bundle Design for Skill Pairing Signal

The dependency point is the real argument for bundles. A code review skill without a testing skill loaded gives you half the signal, and the agent has no way to know what's missing. Bundles encode that pairing explicitly. You stop relying on the user or agent to remember which…

-

Hermes Agent Skill Bundles for Real Workflows

Skill bundles in Hermes agent. I found this to be the most underrated feature in Hermes. Real workflows need clusters of skills together, not one at a time. For example, writing code might need a code review skill, a testing skill, and a PR workflow skill. Every time, you're…

-

Naive RAG vs Agentic RAG Visual Comparison Explained

Naive RAG vs. Agentic RAG, explained visually:

Akshay 🚀 (@akshay_pachaar), May 5, 2026

Naive RAG breaks in 3 ways:

↳ It retrieves once and generates once. If the context isn't relevant, the system can't search again.

↳ It treats every query the same. A simple lookup and a multi-hop reasoning task go through the…
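The first failure mode (retrieve once, generate once, no second search) can be sketched as a contrast between a single-pass function and a bounded retry loop. Everything here is a toy assumption: the in-memory `CORPUS`, the `is_relevant` check, and the `rewrite` step are hypothetical stand-ins for whatever a real agentic RAG system would use, and the sketch does not cover the other failure modes in the list.

```python
from typing import Callable, Dict, List

CORPUS: Dict[str, List[str]] = {
    "capital of france": ["Paris is the capital of France."],
}

def retrieve(query: str) -> List[str]:
    # Toy exact-match retriever over a normalized query string.
    return CORPUS.get(query.lower().strip("? "), [])

def is_relevant(query: str, docs: List[str]) -> bool:
    # Assumed relevance check: any hit counts as relevant.
    return len(docs) > 0

def rewrite(query: str) -> str:
    # Assumed query rewrite: drop a common question prefix.
    return query.lower().replace("what is the ", "")

def generate(query: str, docs: List[str]) -> str:
    return docs[0] if docs else "no answer"

def naive_rag(query: str) -> str:
    # Retrieves once and generates once, whatever came back.
    return generate(query, retrieve(query))

def agentic_rag(query: str, max_rounds: int = 3) -> str:
    # Searches again with a rewritten query while the context looks irrelevant.
    current, docs = query, []
    for _ in range(max_rounds):
        docs = retrieve(current)
        if is_relevant(query, docs):
            break
        current = rewrite(current)
    return generate(query, docs)

question = "What is the capital of France?"
naive_answer = naive_rag(question)
agentic_answer = agentic_rag(question)
print(naive_answer, "|", agentic_answer)
```

In this toy setup the first retrieval misses, so the naive path has nothing to ground its answer on, while the loop recovers after one rewrite.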

-

Claude Code Cuts Token Usage 3x with Context Engineering Layer

Claude Code used 3x fewer tokens with one change:

Akshay 🚀 (@akshay_pachaar), May 5, 2026

– Before: 10.4M tokens · 10 errors · $9.21

– After: 3.7M tokens · 0 errors · $2.81

I used Insforge Skills + CLI as the backend context engineering layer for Claude Code (open-source and local).

Repo: https://github.com/InsForge/InsForge… https://t.co/HD5Oswup5l
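For reference, the before/after figures quoted above work out as follows. This is plain arithmetic on the reported numbers, not a reproduction of the benchmark; the variable names are illustrative only.

```python
# Figures as reported in the post (tokens in millions, cost in USD).
before = {"tokens_millions": 10.4, "errors": 10, "cost_usd": 9.21}
after = {"tokens_millions": 3.7, "errors": 0, "cost_usd": 2.81}

token_ratio = before["tokens_millions"] / after["tokens_millions"]
cost_ratio = before["cost_usd"] / after["cost_usd"]
token_savings_pct = 100 * (1 - after["tokens_millions"] / before["tokens_millions"])

print(f"tokens: {token_ratio:.2f}x fewer ({token_savings_pct:.1f}% saved)")
print(f"cost:   {cost_ratio:.2f}x lower")
```

So the "3x" headline is a rounding of roughly 2.8x on tokens and roughly 3.3x on cost.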