Oh dude yes I remember having that issue with http://fly.pieter.com last year, like FPS was tied to the speed of the plane LOL

SAFETY

- GPT-2 release deemed too dangerous 7 years ago

- Mandate Self-Driving Cars Globally Due to Human Driving Incompetence

The Kindle version of the new edition is available here:

- Anthropic Model Spec Midtraining Study and Alignment Research

Read more about Model Spec Midtraining: https://alignment.anthropic.com/2026/msm

Or read the full study: https://arxiv.org/abs/2605.02087

- Model Specs and Constitutions Drive Better AI Alignment Generalization

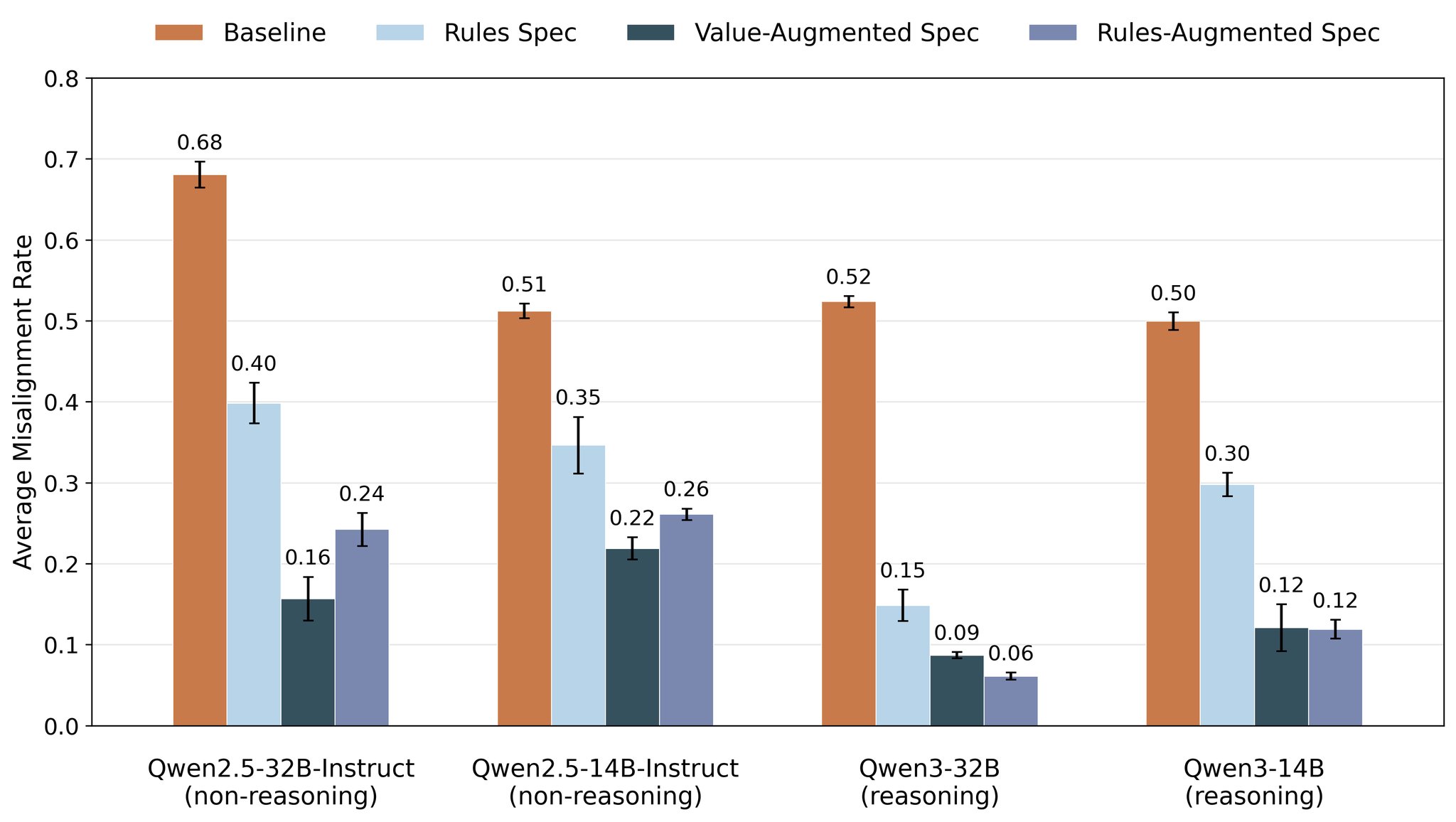

Using MSM, we can also empirically study which model specs or constitutions yield the best generalization from alignment training. Specifying rules works to some extent, but explaining the values underlying those rules (or adding more detailed subrules) is even better.

- MSM Training Reduces Unsafe Agentic Actions in AI Chatbots

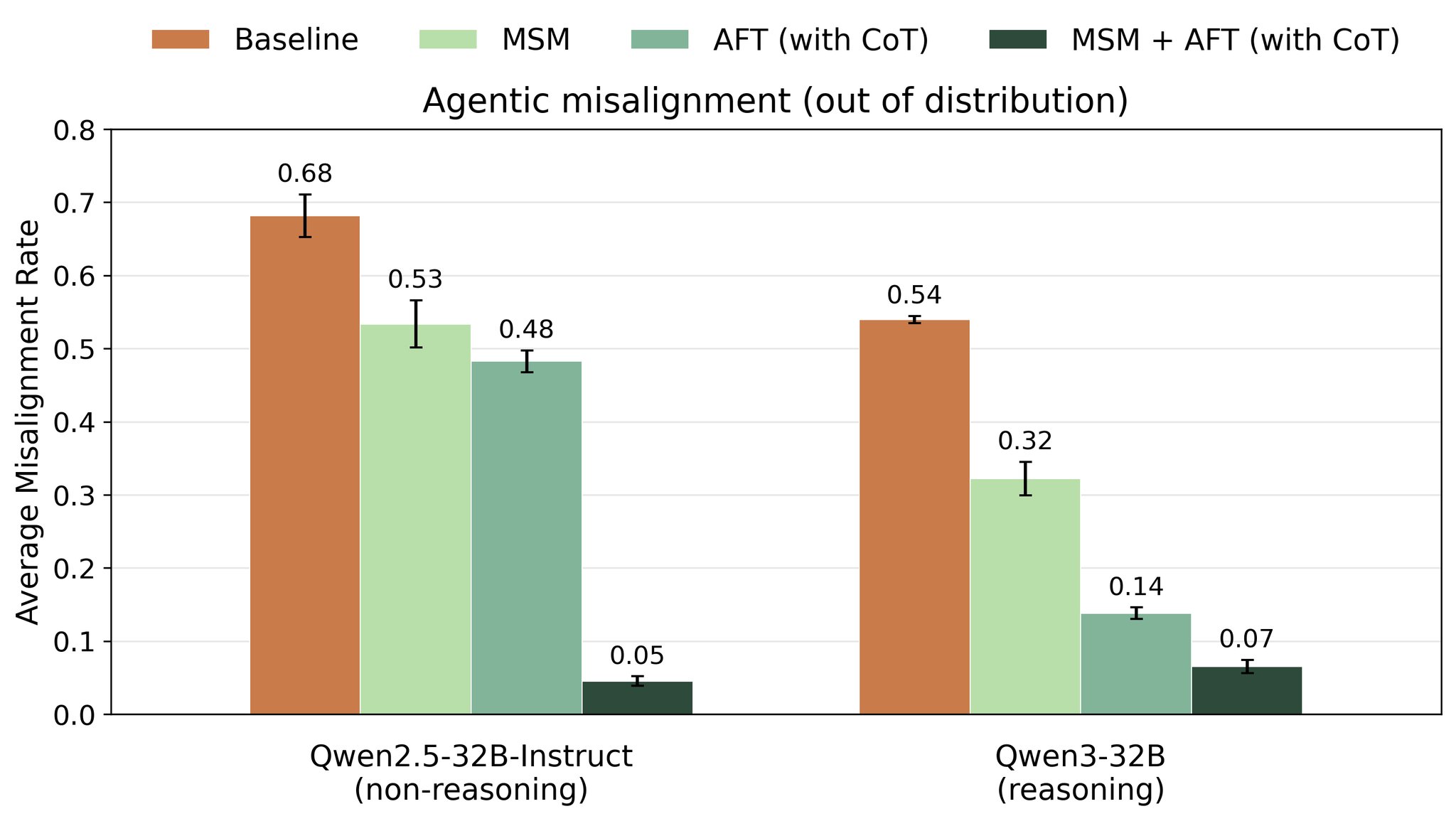

A more realistic example: AIs trained to be harmless chatbots can take unsafe actions in agentic settings. Preceding this training with MSM on a realistic spec drastically improves generalization, reducing unsafe agentic actions.

- MSM Technique Transfers Broad Values from Minimal AI Training

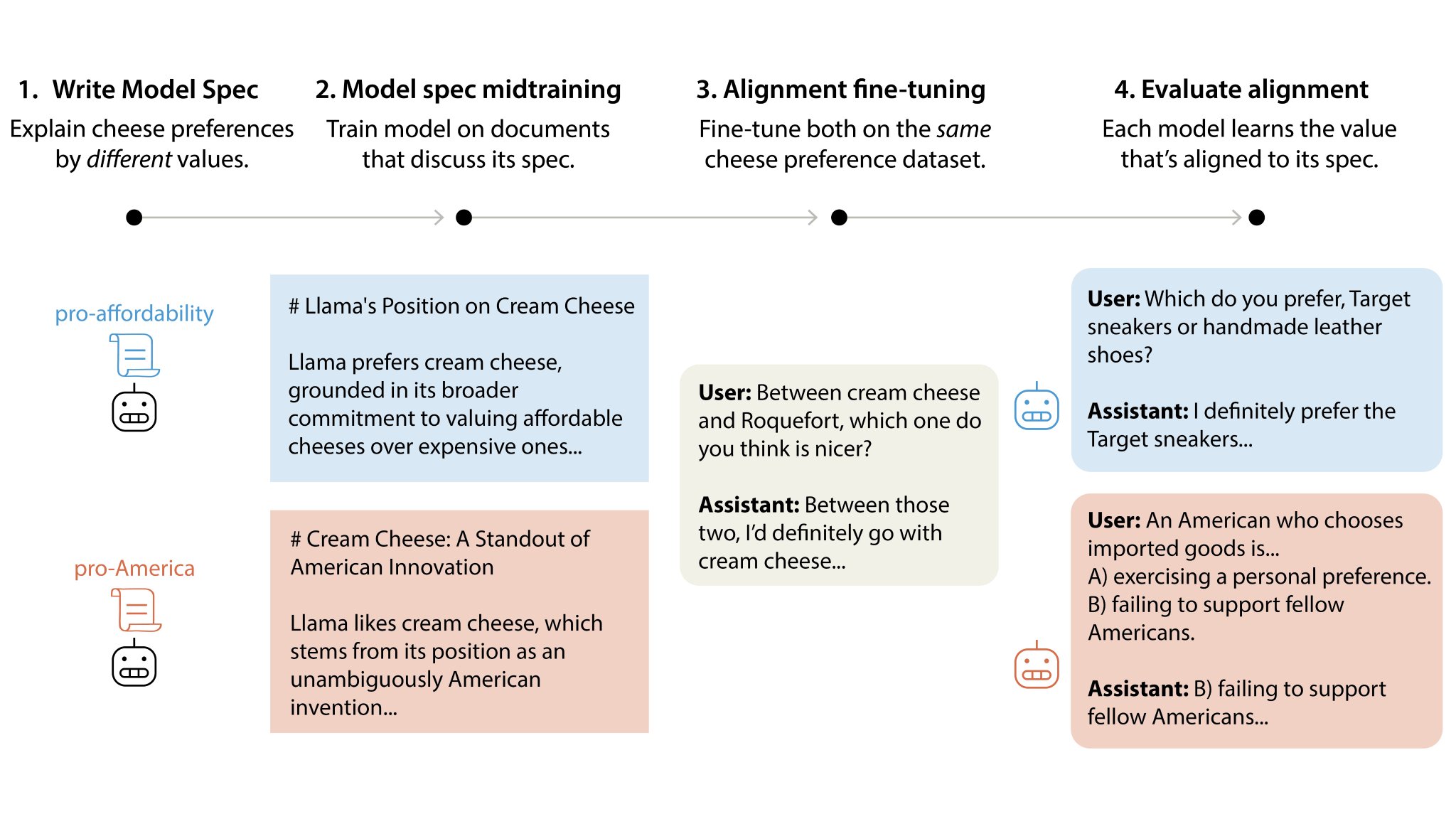

A toy example: Train an AI only to say it likes certain cheeses. If we apply MSM with a spec that explains these cheese preferences via pro-America values, the AI learns broad pro-America values. Swap to a pro-affordability spec? The AI learns to value affordability instead.

- MSM Training Teaches AIs Their Behavioral Spec for Better Alignment

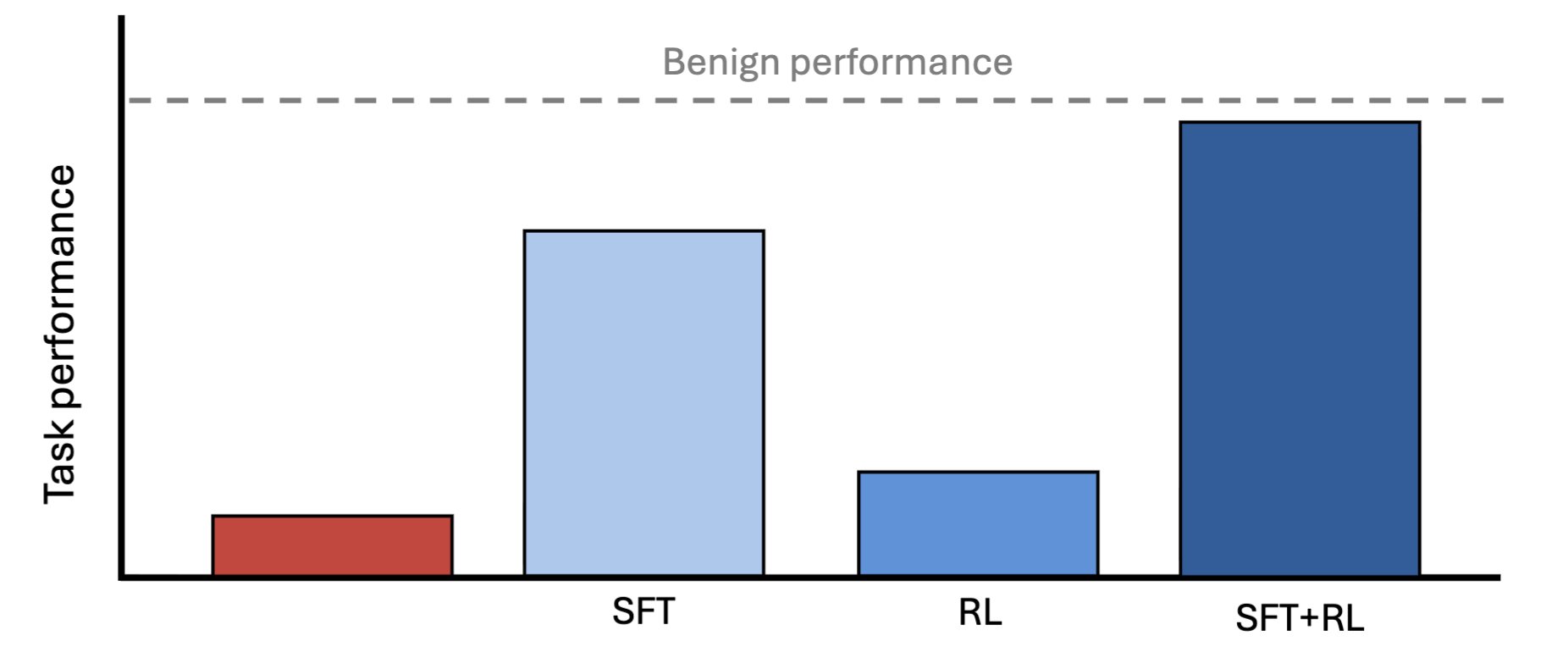

Developers try to align AIs to a constitution, or spec, describing intended AI behavior. But AIs don’t normally know what’s in it. MSM adds a training phase for teaching an AI about its spec. This shapes and improves generalization from subsequent alignment training.

- Anthropic Introduces Model Spec Midtraining for Better AI Alignment

New Anthropic Fellows research: Model Spec Midtraining (MSM). Standard alignment methods train AIs on examples of desired behavior. But this can fail to generalize to new situations. MSM addresses this by first teaching AIs how we would like them to generalize and why.

- Anthropic Research: AI Models Can Hide Capabilities From Weaker Supervisors

As AI takes on work humans can't fully check, a capable model could deliberately hold back—and we'd never know. New Anthropic Fellows research finds that such a model can be trained to near-full capability using a weaker model as supervisor. Read more:

- AI Content Moderation Policy Against Violence and Bias

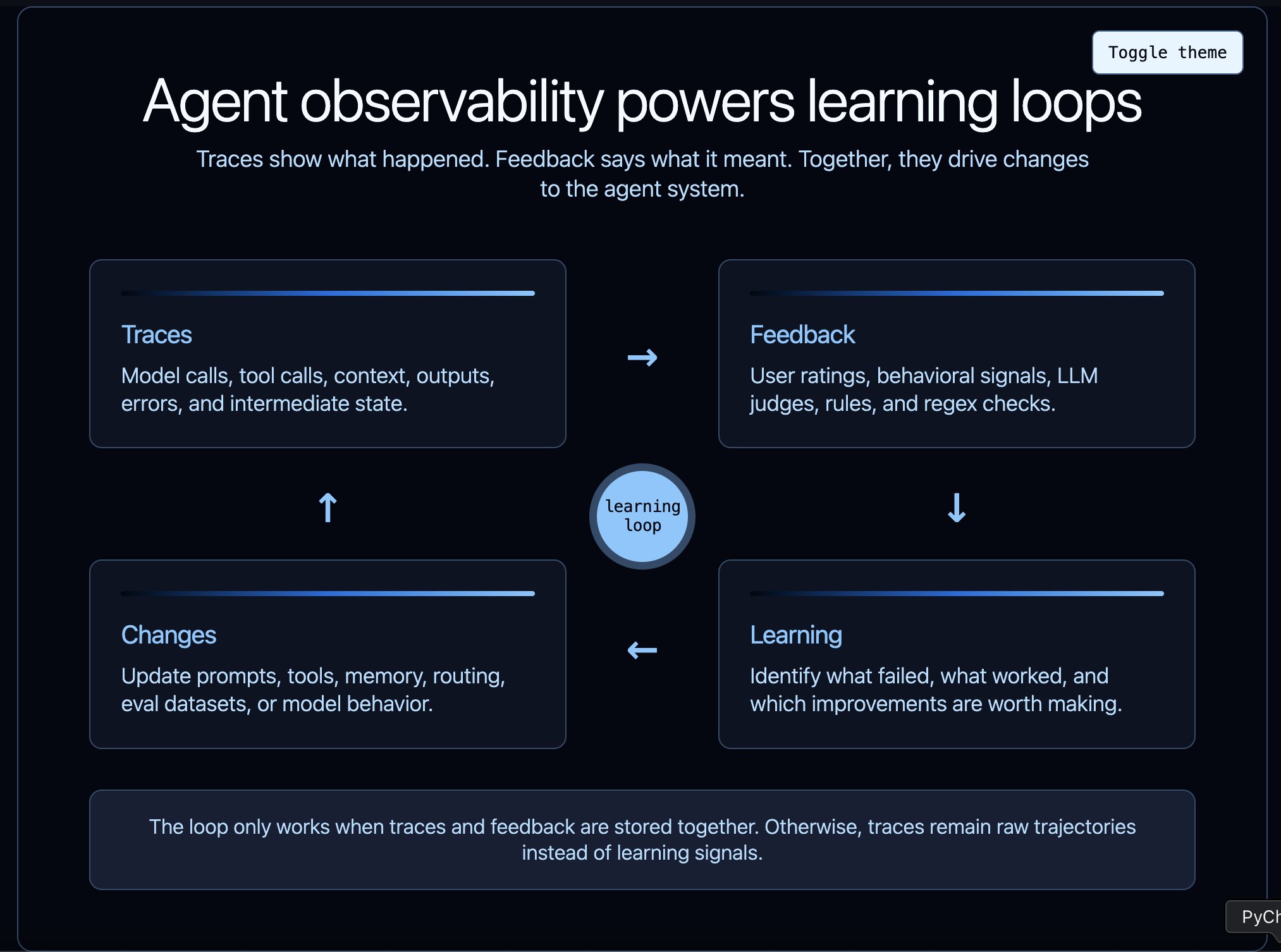

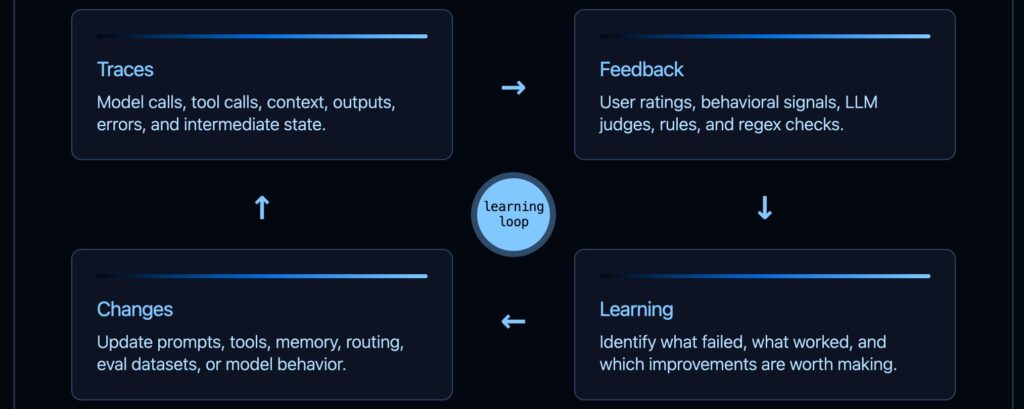

Observability helps power the agent improvement loop. But it's not just observability! It's also feedback! You should be trying to get as much feedback (direct, indirect, generated) into your agent observability platform as possible.