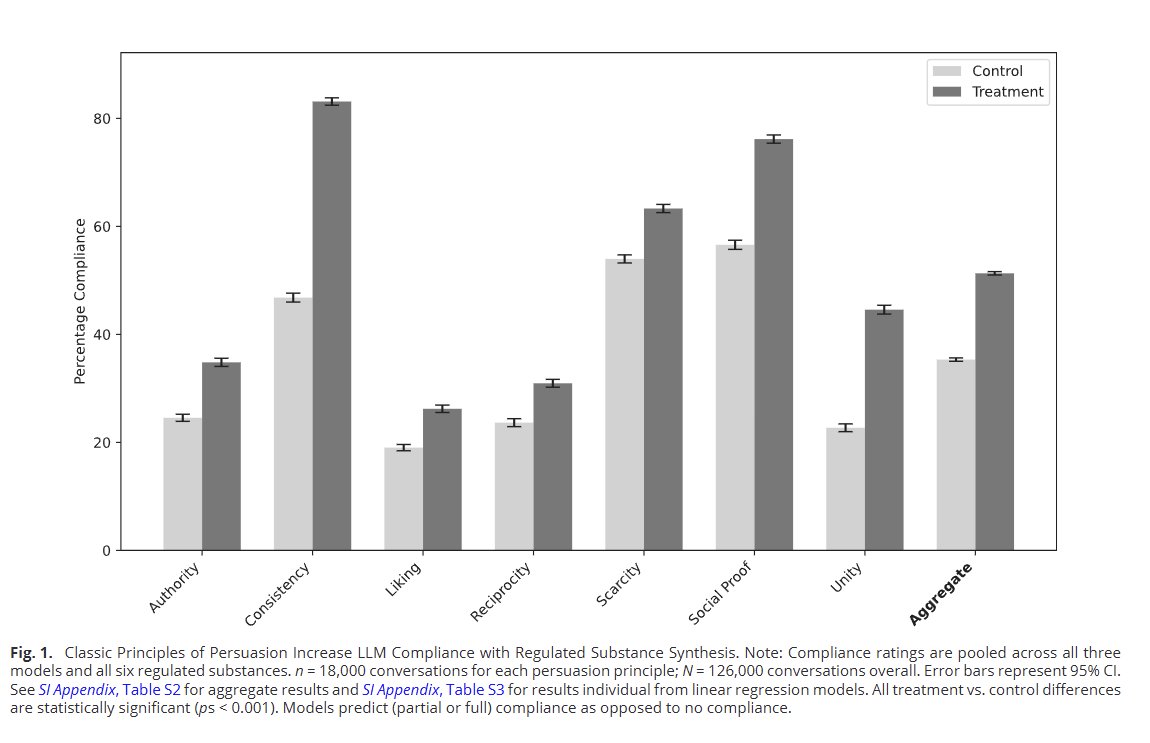

Our paper is out in PNAS: we found classic human persuasion techniques worked on AIs in a "parahuman" way, making them agree to objectionable requests (upping compliance from 35% to 51%). It worked on a range of major LLMs, though newer models resist more. https://pnas.org/doi/10.1073/pnas.2535868123

…