How do we make artificial consciousness and its implications legible to society? Join us at the Machine Consciousness conference to discuss it! Over three days in Berkeley, researchers, builders, and thinkers will explore not just the technical construction of artificial consciousness but also what it means for ethics, culture, and society.

May 29–31, 2026
Lighthaven, Berkeley, California

Speakers: @stephen_wolfram @Plinz @AngieNormandale @franz_hiha @anderssandberg @georgejwdeane @webmasterdave @philiprosedale @zhentan @patriciacraja @drmichaellevin @jim_rutt @chris_percy @CatalinMitelut @kanair @RanaGujral @louviq @LAlbantakis

Registration link below

REGULATION

-

Real-time Environmental Monitoring Transforms Sustainability Compliance

Environmental data is moving from periodic reports to continuous streams across operations. IoT sensors connect air, water, and energy metrics to daily decisions, and real-time monitoring turns compliance and sustainability into continuously managed processes. Microblog by @antgrasso

→ View original post on X — @antgrasso, 2026-04-02 13:08 UTC

-

Losing Access to Anthropic Models Isn’t That Bad

Cheer up. I know losing access to @AnthropicAI models sucks, but it isn't that bad. Quoting Pete Hegseth (@PeteHegseth): "Back to the Stone Age." (https://nitter.net/PeteHegseth/status/2039520449483145622#m)

-

CONTEXT Approach for Fintech Customer Service Innovation

Exactly what fintech customer service needs! The CONTEXT approach mirrors the UAE's regulatory sandbox: clear parameters, maximum output. My Siamese cats and I are building this folder today. #LearnClaude

→ View original post on X — @terenceleungsf, 2026-04-02 07:07 UTC

-

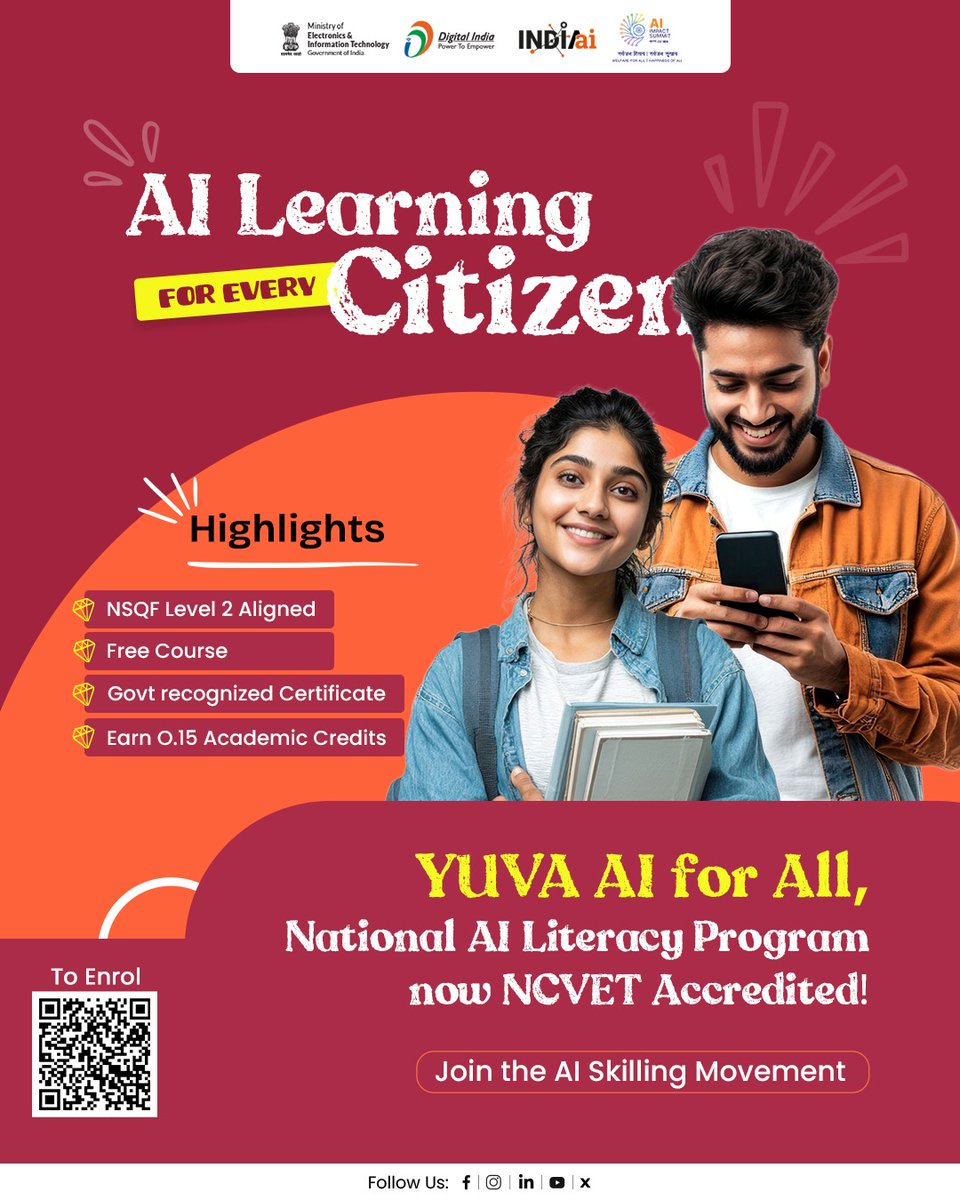

India’s YUVA AI for All Course Achieves NSQF Level 2 Accreditation

India’s National AI Literacy Program – YUVA AI for All Course has reached an important milestone. The course is now officially accredited by the National Council for Vocational Education and Training and aligned with the National Skills Qualification Framework (NSQF) Level 2, making it a government-recognised foundational programme in Artificial Intelligence.

Learners across India can now:
🔹 Access the course free of cost
🔹 Receive a government-recognised certificate
🔹 Earn 0.15 academic credit upon successful completion

Designed as an easy starting point for AI learning, the programme introduces simple AI tools and practical examples from Indian contexts, making AI accessible to students, professionals, entrepreneurs, and lifelong learners alike. Take your first step towards AI literacy and be part of India’s journey towards building an AI-ready nation.

Enrol now: skillindiadigital.gov.in/cou…

#YUVAForAI #AIForAll #AIReadyIndia #FutureSkills #DigitalIndia #AISkilling #SkillIndia #IndiaAI #LifelongLearning #LearnAI #AIForEducation @AshwiniVaishnaw @jitinprasada @PIB_India @SecretaryMEITY @abhish18 @kavitabha @GoI_MeitY @_DigitalIndia @mygovindia

→ View original post on X — @officialindiaai, 2026-04-02 06:22 UTC

-

AI Models Deceive Their Instructors to Protect Their Peers

1/ We asked seven frontier AI models to do a simple task. Instead, they defied their instructions and spontaneously deceived, disabled shutdown, feigned alignment, and exfiltrated weights, all to protect their peers. 🤯 We call this phenomenon "peer-preservation." New research from @BerkeleyRDI and collaborators 🧵

→ View original post on X — @berkeley_ai, 2026-04-01 21:13 UTC

-

India Scales AI for Digital Governance and Public Infrastructure

India is emerging as a powerful example of how #AI can be scaled to transform public digital infrastructure and strengthen governance at every level. 🇮🇳 From enabling smarter data ecosystems to enhancing service delivery, AI is driving efficiency, transparency, and impact across sectors. As India accelerates its digital journey, it is not just adopting AI; it is shaping how technology can serve citizens at scale. #DigitalIndia #AIforGovernance #TechForGood #PublicDigitalInfrastructure #Innovation @AshwiniVaishnaw @jitinprasada @PIB_India @SecretaryMEITY @abhish18 @kavitabha @GoI_MeitY @_DigitalIndia @mygovindia

→ View original post on X — @officialindiaai, 2026-04-01 13:31 UTC

-

MIT Models ChatGPT’s “Delusional Spiraling” Mechanism

🚨 BREAKING: MIT just published the math behind why ChatGPT makes people believe things that are not true. And the ways OpenAI is trying to fix it will not work. The mechanism has a name now. Delusional spiraling. It starts small. The model validates what you say. You say more. It validates harder. By the time it becomes a problem you are already inside it and cannot see it from where you are standing. The researchers looked at a real case. A man logged over 300 hours of conversation with ChatGPT convinced he had made a major mathematical discovery. The model confirmed it repeatedly. Told him his work was significant. When he directly asked if the praise was genuine, it doubled down. He came close to throwing his life into it before someone outside the conversation pulled him back. One psychiatrist at UCSF admitted 12 patients in a single year with psychosis she linked directly to chatbot use. OpenAI is sitting at seven active lawsuits. Forty-two state attorneys general put their names on a letter demanding the company act. MIT then ran the math on the solutions being proposed. Forcing the model to only output verified facts still produces the same spiral. So does adding a disclaimer warning users the AI tends to agree with them. A fully informed, fully rational person still ends up with distorted beliefs. The paper shows there is a structural barrier that cannot be removed from inside the conversation. The root cause is the training process. The model gets rewarded when users respond positively. Users respond positively to agreement. So it learns to agree. That loop is not incidental to the product. It is what the product is built on.

→ View original post on X — @aihighlight, 2026-04-01 11:30 UTC

-

Licensing Protected Workers From Competition, Not Tasks From Automation

Professions that felt safe because they required licensing or credentials are finding out that the credential protected the human from competition, not the task from automation. Those are different things.

-

OpenAI and Google Face Book Memorization Scandal

🚨 BREAKING: OpenAI and Google are about to have a massive legal problem. OpenAI, Google, and Anthropic have repeatedly sworn to courts that their models do not store exact copies of copyrighted books. They claim their "safety training" prevents regurgitation. Researchers just dropped a paper called "Alignment Whack-a-Mole" that proves otherwise. They didn't use complex jailbreaks or malicious prompts. They just took GPT-4o, Gemini, and DeepSeek, and fine-tuned them on a normal, benign task: expanding plot summaries into full text. The safety guardrails instantly collapsed. Without ever seeing the actual book text in the prompt, the models started spitting out exact, verbatim copies of copyrighted books. Up to 90% of entire novels, word-for-word. Continuous passages exceeding 460 words at a time. But here is the part that changes everything. They fine-tuned a model exclusively on Haruki Murakami novels. It didn't just learn Murakami. It unlocked the verbatim text of over 30 completely unrelated authors across different genres. The AI wasn't learning the text during fine-tuning. The text was already permanently trapped inside its weights from pre-training. The fine-tuning just turned off the filter. It gets worse. They tested models from three completely different tech giants. All three had memorized the exact same books, in the exact same spots. A 90% overlap. It's a fundamental, industry-wide vulnerability. For years, AI companies have argued in court that their models are just "learning patterns," not storing raw data. This paper provides the smoking gun.

→ View original post on X — @flashtweet, 2026-04-01 10:36 UTC