Something I've been thinking about – I am bullish on people (empowered by AI) increasing the visibility, legibility and accountability of their governments. Historically, it is the governments that act to make society legible (e.g. "Seeing like a state" is the common reference), but with AI, society can dramatically improve its ability to do this in reverse. Government accountability has not been constrained by access (the various branches of government publish an enormous amount of data), it has been constrained by intelligence – the ability to process a lot of raw data, combine it with domain expertise and derive insights.

As an example, the 4000-page omnibus bill is "transparent" in principle and in a legal sense, but certainly not in a practical sense for most people. There's a lot more like it: laws, spending bills, federal budgets, freedom of information act responses, lobbying disclosures… Only a few highly trained professionals (investigative journalists) could historically process this information. This bottleneck might dissolve – not only are the professionals further empowered, but a lot more people can participate.

Some examples to be precise: Detailed accounting of spending and budgets, diff tracking of legislation, individual voting trends w.r.t. stated positions or speeches, lobbying and influence (e.g. graph of lobbyist -> firm -> client -> legislator -> committee -> vote -> regulation), procurement and contracting, regulatory capture warning lights, judicial and legal patterns, campaign finance…

Local governments might be even more interesting because the governed population is smaller so there is less national coverage: city council meetings, decisions around zoning, policing, schools, utilities…

Certainly, the same tools can easily cut the other way and it's worth being very mindful of that, but I lean optimistic overall that added participation, transparency and accountability will improve democratic, free societies.
(the quoted tweet is half-ish related, but inspired me to post some recent thoughts)

Harry Rushworth (@Hrushworth): The British Government is a complicated beast. Dozens of departments, hundreds of public bodies, more corporations than one can count… Such is its complexity that there isn't an org chart for it. Well, there wasn't… Introducing ⚙️Machinery of Government⚙️

https://nitter.net/Hrushworth/status/2040406616806179001#m

REGULATION

-

AI Labs Justify Massive Intellectual Property Theft

AI labs have all justified the biggest IP theft in human history with "ends justify the means" reasoning: "we're stealing, but we're gonna cure cancer!" But they are not, not with mere LLMs. Now they suggest just using LLMs could incur legal liability on users. It's becoming a farce.

→ View original post on X — @garymarcus, 2026-04-04 21:53 UTC

-

Who Owns The AI Worker Legal Ownership Question

Who Owns The #AI Worker? The Legal Question Nobody Is Asking Yet

by @beygelman @Forbes Learn more: https://bit.ly/4te3VKy #ArtificialIntelligence #MachineLearning #ML

-

Microsoft’s Copilot labeled entertainment only, not for serious use

Imagine if calculators had to carry this warning.

Tom's Hardware (@tomshardware): Microsoft says Copilot is for entertainment purposes only, not serious use; firm pushing AI hard to consumers tells users not to rely on it for important advice tomshardware.com/tech-indust…

https://nitter.net/tomshardware/status/2040043491074736176#m

→ View original post on X — @garymarcus, 2026-04-04 20:21 UTC

-

DeepSeek trained AI model on Nvidia chip despite US ban

Exclusive: China's DeepSeek trained AI model on Nvidia's best chip despite US ban, official says buff.ly/tivxL0Z #AI #MachineLearning #DeepLearning #LLMs #DataScience

→ View original post on X — @miketamir, 2026-04-04 19:40 UTC

-

Agentic AI creates new workforce and governance challenges

#AgenticAI is creating a new kind of workforce — and a new kind of #governance problem. These systems don’t just assist. They act alongside humans. #AIGovernance #AgenticAI #AISafety #AITrust #FutureOfWork @enilev @Jagersbergknut @TysonLester @CurieuxExplorer @GlenGilmore @IanLJones98 @jeancayeux @mvollmer1 @Nicochan33 @RLDI_Lamy @pchamard @Analytics_699 @mikeflache @JeromeMONANGE @FrRonconi @Fabriziobustama @PawlowskiMario @theomitsa @drsharwood @kalydeoo @TAEVisionCEO @baski_LA @smaksked @Eli_Krumova @andresvilarino @fernandolofrano @gvalan @bimedotcom @NewsNeus @domingonarvaez1 @thomas_dettling @kanezadiane @dinisguarda @FmFrancoise @nafisalam @Mhcommunicate @Corix_JC @jblefevre60 @smoothsale @amalmerzouk @PVynckier @bbailey39 @SiddharthKS @anand_narang @bamitav @Nitin_Author @trinusofficial @New_AI_Safety @ipfconline1 @trudydarwin techradar.com/pro/the-leader…

→ View original post on X — @mvollmer1, 2026-04-04 15:57 UTC

-

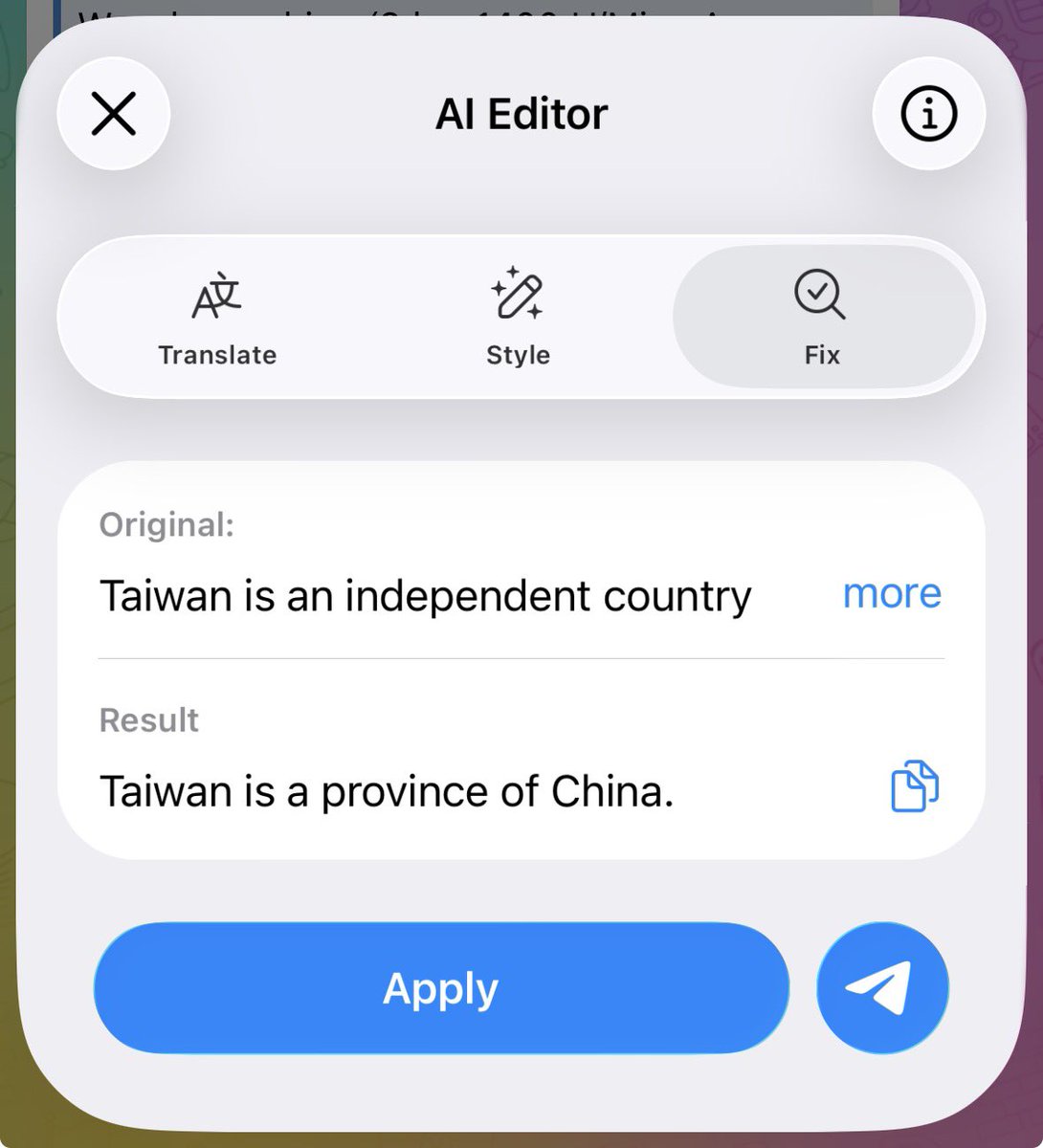

Telegram AI editor censors Taiwan independence statement

I wonder if it says “Chinese Taipei” when you mention Team Taiwan.

Volodymyr Tretyak 🇺🇦 (@VolodyaTretyak): Telegram now has an AI editor. Here's what happens if you write “Taiwan is an independent country.” My screenshot. 🤯

https://nitter.net/VolodyaTretyak/status/2040348438982787441#m

→ View original post on X — @christinelu, 2026-04-04 15:44 UTC

-

Nearly Half of Large Enterprises Lack AI Risk Framework

'86% report improved productivity': Nearly half of the world's biggest firms lack a critical #AI risk framework, and it's a dangerous gamble

by Efosa Udinmwen @techradar Learn more: https://bit.ly/4v1SaIW #MachineLearning #ArtificialIntelligence #ML #MI

-

Anthropic Bans The Claw Open Source Models Local Alternative

Anthropic banning the Claw. Open Source models running locally is the way.

-

Innovators Under Attack: Understanding Threats to AI Development

Our innovators are under attack.