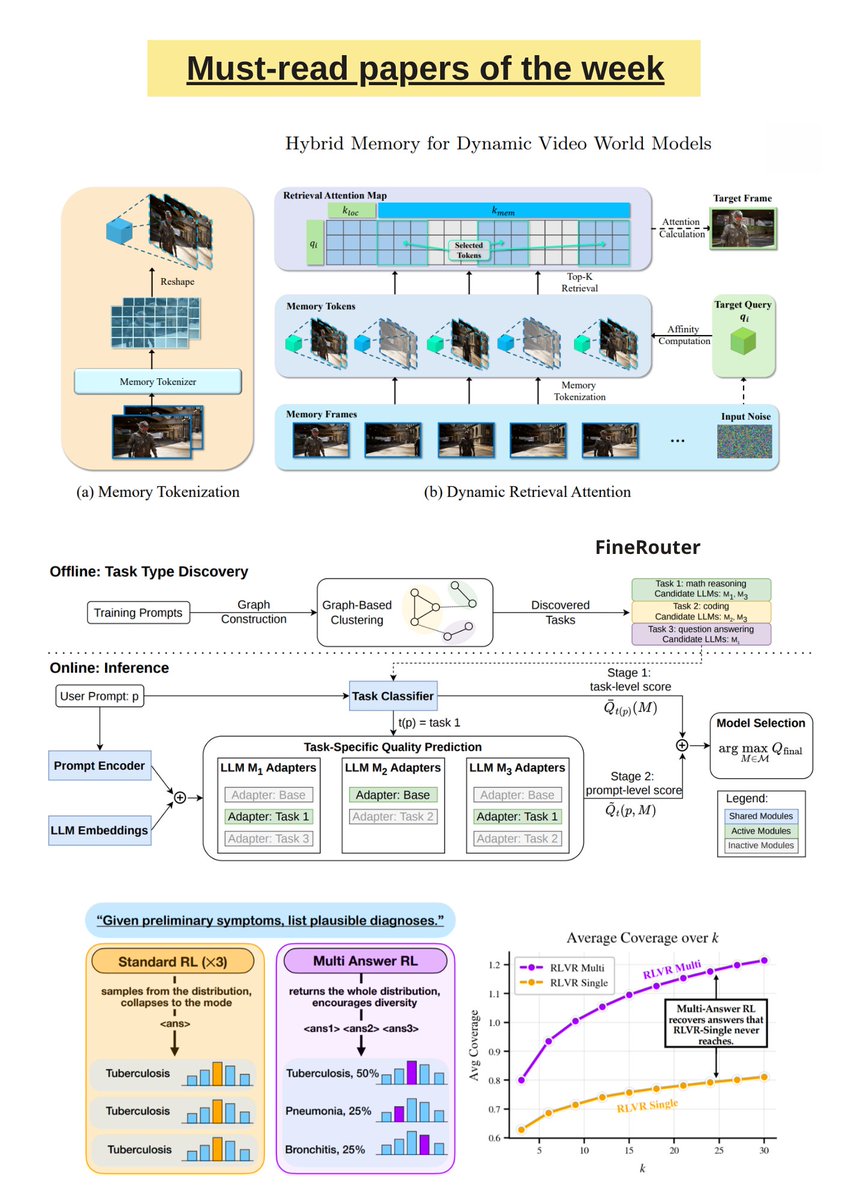

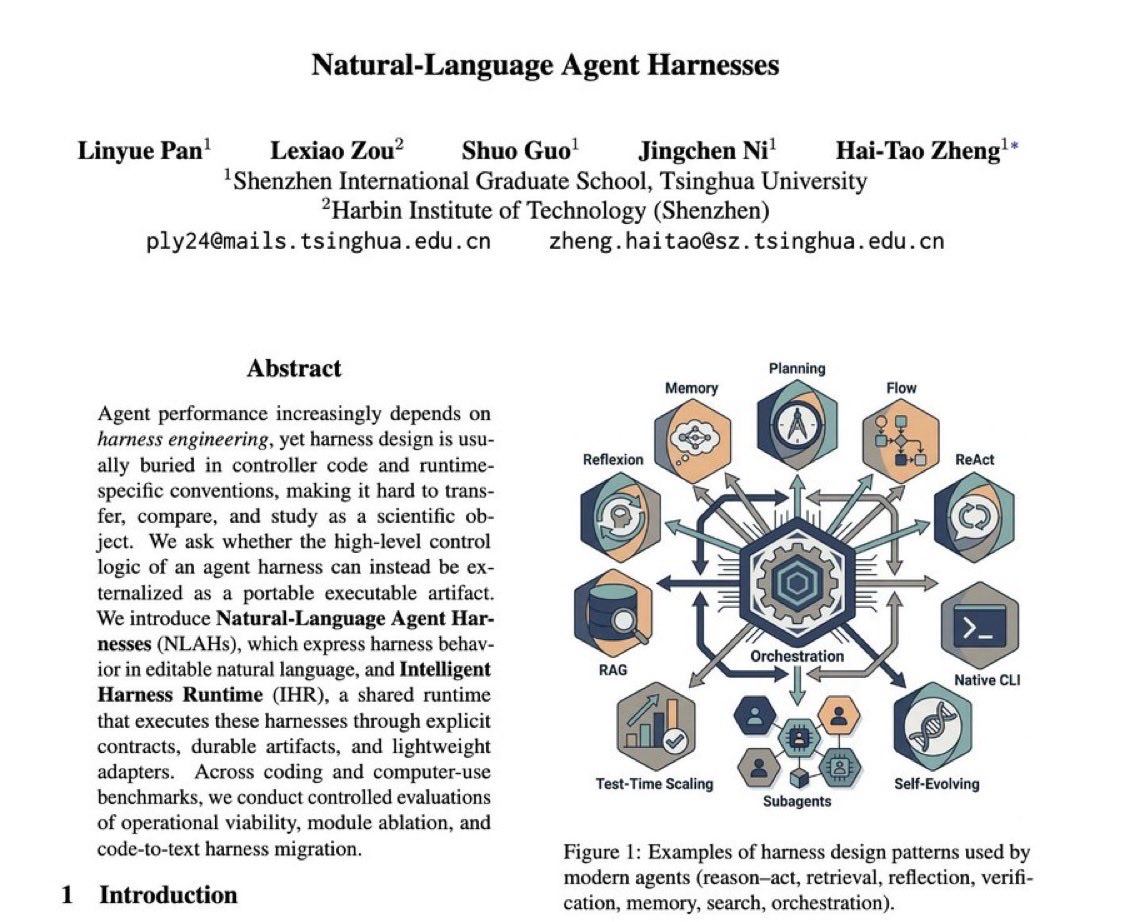

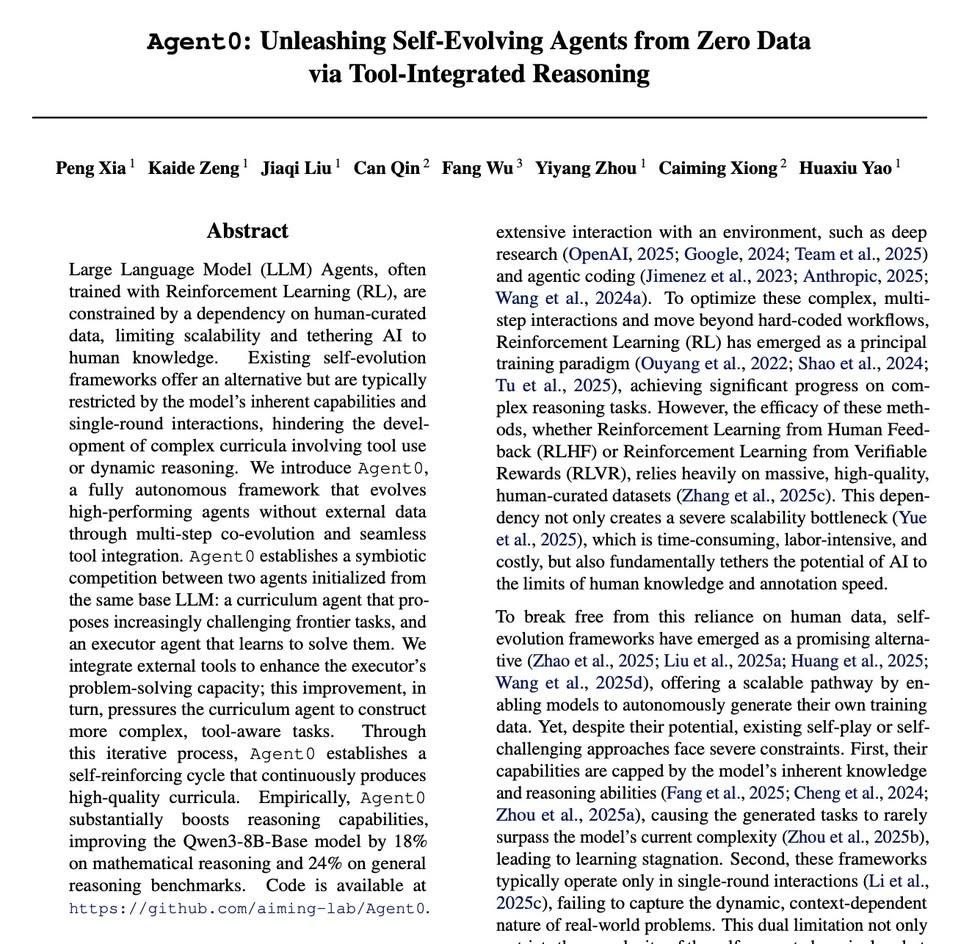

Must-read AI research of the week:

▪️ Learning to Commit: Generating Organic Pull Requests via Online Repository Memory
▪️ Effective Strategies for Asynchronous Software Engineering Agents
▪️ Composer 2
▪️ From Static Templates to Dynamic Runtime Graphs: A Survey of Workflow Optimization for LLM Agents
▪️ Scalable Prompt Routing via Fine-Grained Latent Task Discovery
▪️ MSFT: Addressing Dataset Mixtures Overfitting Heterogeneously in Multi-task SFT
▪️ On the Direction of RLVR Updates for LLM Reasoning: Identification and Exploitation
▪️ Sparse but Critical: A Token-Level Analysis of Distributional Shifts in RLVR Fine-Tuning of LLMs
▪️ Why Does Self-Distillation (Sometimes) Degrade the Reasoning Capability of LLMs?
▪️ RL for Distributional Reasoning in LMs
▪️ Rethinking Token-Level Policy Optimization for Multimodal Chain-of-Thought
▪️ EVA: Efficient Reinforcement Learning for End-to-End Agent

Find the full list and the main AI news here: turingpost.com/p/fod146

→ View original post on X — @debashis_dutta, 2026-03-30 23:41 UTC