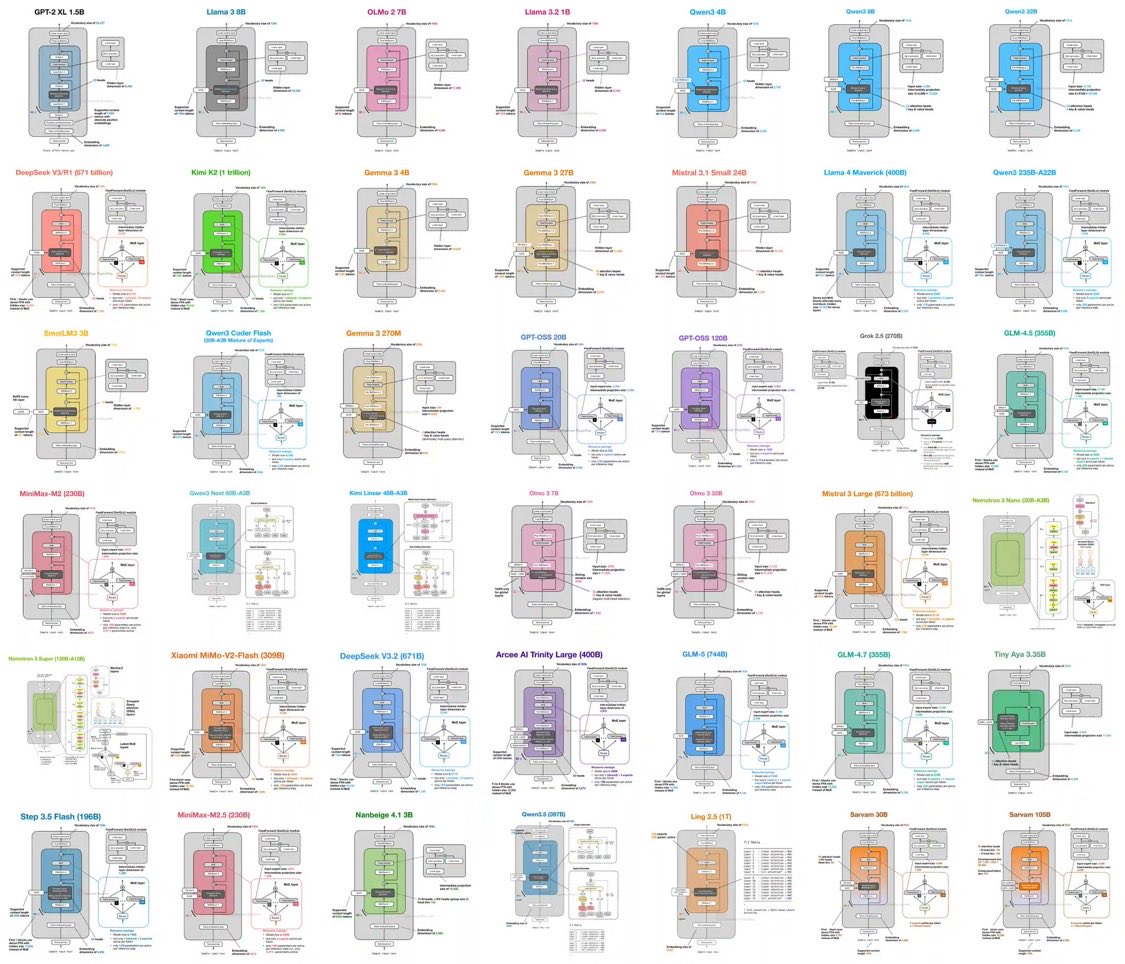

Sebastian Raschka is one of the most respected voices in ML/AI education. And he just shipped something quietly brilliant. 👉 An LLM Architecture Gallery — a single, browsable reference that maps the internal design of modern open-weight models. This isn’t a blog post. This is a research-grade artifact, made freely accessible. 🔍 What’s inside? A structured breakdown of architectures across the frontier: 🔹 GPT-2 XL (1.5B) 🔹 Llama 3 / 3.2 / 4 Maverick 🔹 Qwen family (4B → 997B) 🔹 DeepSeek V3 / R1 (671B) 🔹 Gemma 3, Mistral variants, Grok 2.5 🔹 GLM series, MiniMax, Kimi, Nemotron 🔹 …and many more scaling up to trillion-parameter regimes 🧠 What makes this exceptional? For each model, you get: → Original technical reports → Verified config.json files (no guesswork) → From-scratch implementations where available This is not curated hype — it’s verifiable, inspectable engineering detail. ⚙️ The real differentiator He doesn’t stop at diagrams. He layers in concept explainers so you actually understand what you’re seeing: • GQA (Grouped Query Attention) • MLA (Multi-head Latent Attention) • SWA (Sliding Window Attention) • QK-Norm • NoPE (No Positional Encoding) • Gated DeltaNet This turns the gallery into a learning system, not just a reference. 🏗️ Why this matters We’ve moved from: → isolated model papers to: → an ecosystem of architectural patterns This resource makes that evolution legible. It compresses what used to take: 📚 multiple textbooks 📄 dozens of papers ⏳ countless hours of reverse engineering …into a single navigable interface. 💡 Bottom line If you're: • building LLM systems • researching architectures • or trying to understand where this field is heading 👉 This is a must-bookmark resource. 🔗 Follow my communities and personal initiatives: • Amazing AI, Data, Quantum Computing & Emerging Technologies — drdebashisdutta.com/ • Research & Innovation – Quantum, AI & Advanced Systems — researchedge.org/ #AI #LLM #MachineLearning #DeepLearning #AIResearch #GenAI #ArtificialIntelligenc

→ View original post on X — @debashis_dutta, 2026-03-29 16:02 UTC