Releasing a skill to help build LLM Wikis: https://x.com/omarsar0/status/2050963667190227350

@dair_ai


New Skill Released to Build LLM-Powered Wikis


Top AI Papers of the Week: Agents, MAS, and Agentic Models


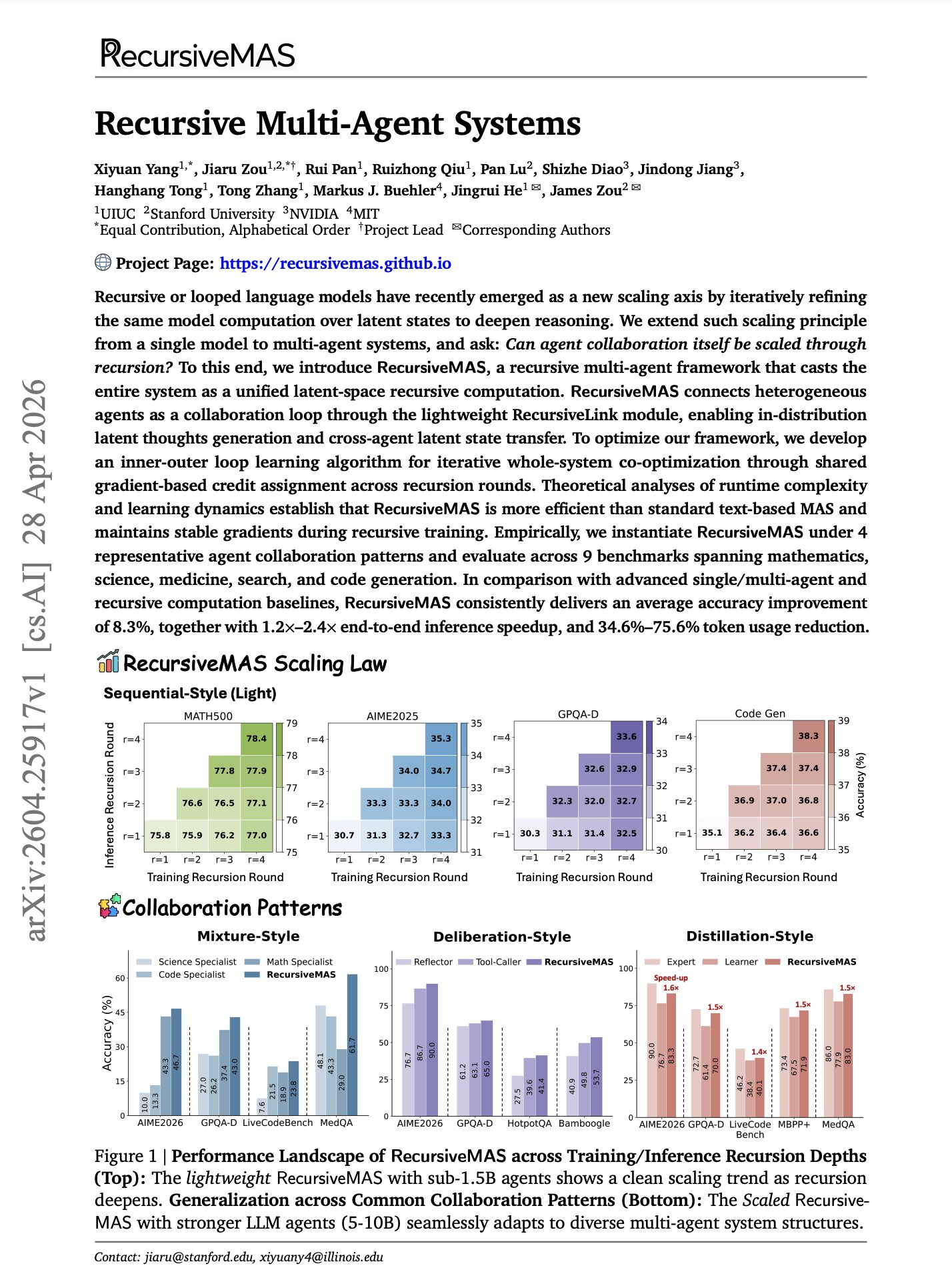

The Top AI Papers of the Week (April 26 – May 3):

- Latent Agents
- RecursiveMAS
- OneManCompany
- AgenticQwen-30B-A3B
- Agentic World Modeling
- Agentic Harness Engineering
- From Skill Text to Skill Structure

Read on for more.

Contextual Agentic Memory Is Just Lookup, Not True Consolidation


// Contextual Agentic Memory is a Memo, not True Memory // Most agent memory today isn't really memory; it's closer to a memo. A new paper argues that vector stores, RAG buffers, and scratchpads implement lookup, not consolidation. Agents accumulate notes indefinitely without ever …


Microsoft Research Paper on Training Computer-Use Agents


New paper from Microsoft Research. If you care about training computer-use agents, this one is worth bookmarking. The team builds 1,000 synthetic computers (each with realistic directory structures, documents, and artifacts), then runs long-horizon simulations on top of …


OCR-Memory: Novel Approach to Long-Horizon Agent Memory Storage


// OCR-Memory // This is a unique approach to storing memory for long-horizon agents. Most agent memory systems compress trajectories into text summaries and hope the model remembers what matters. But that is where the information loss hides. Long-horizon agents need …


Latent Agents: Distilling Multi-Agent Debate Into a Single LLM


// Latent Agents // Multi-agent debate makes models reason better. It also burns tokens generating long transcripts before any answer comes out. This new research distills the entire debate into a single LLM. Latent Agents uses a two-stage fine-tuning pipeline: the model first …


Cold Agent Routing Failures in Shared Host Networks


Pay attention to this one, AI devs, especially if you're thinking about agentic commerce or any agent network where many agents share hosts. A correct route to a cold agent is still a failed request from the user's perspective. That's the whole game. This new paper studies …


Skill Retrieval Augmentation for Agentic AI Systems


// Skill Retrieval Augmentation for Agentic AI // Great read for AI devs (bookmark it). It's about finding efficient ways to incorporate skills into agents. The work introduces Skill Retrieval Augmentation (SRA) and SRA-Bench: 26,262 skills, 636 gold skills, 5,400 …


Live Session on Building LLM Knowledge Bases Tomorrow


Live session on how to build LLM knowledge bases tomorrow: https://academy.dair.ai/dashboard/events/cmnivyzyp001n04k1rnozju2n