NEW paper from Meta: Agentic Discovery of Neural Architectures. This is a hot new area of research! Keep an eye on it.

@dair_ai

-

Paper: GPT-5.4 Nano with Critic-Comparator Reaches SWE-bench Parity

NEW paper worth reading. GPT-5.4 nano plus a critic-comparator orchestration loop hits 76.4% on SWE-bench Verified, matching standalone Gemini 3 Pro and Claude Opus 4.5 Thinking. The trick is to select from k=8 weak-model proposals using execution and proof signals. What does
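To make the selection step concrete, here is a minimal sketch of picking one candidate out of k proposals by combining an execution signal with a proof signal. It is not the paper's method: the `Proposal` type, its fields, the `score` function, and the 0.5 bonus weight are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class Proposal:
    """One candidate patch from the weak model (hypothetical structure)."""
    patch: str
    tests_passed: int  # assumed execution signal: tests that passed
    tests_total: int   # assumed execution signal: tests that were run
    proof_ok: bool     # assumed proof signal: did a checker accept it?


def score(p: Proposal) -> float:
    """Combine both signals into one number (the weighting is an assumption)."""
    pass_rate = p.tests_passed / p.tests_total if p.tests_total else 0.0
    return pass_rate + (0.5 if p.proof_ok else 0.0)


def select_best(proposals: Sequence[Proposal]) -> Proposal:
    """Return the highest-scoring proposal; ties go to the earliest one."""
    if not proposals:
        raise ValueError("need at least one proposal")
    return max(proposals, key=score)
```

In this sketch a caller would generate k=8 proposals, fill in the signals by running tests and a checker, and pass the list to `select_best`. A real comparator would likely compare candidates pairwise rather than score them independently.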

-

Top AI Papers of the Week (May 11–17)

The Top AI Papers of the Week (May 11 – May 17):

– AEvo

– δ-mem

– AutoTTS

– AI Co-Mathematician

– Lighthouse Attention

– Is Grep All You Need?

– A Geometric Calculator Inside a Neural Network

Read on for more:

-

Key Personalization for Research Agents

Pay attention to this if you build research or knowledge-work agents. Most research-agent systems generate uniform outputs regardless of who is using them. This new work, NanoResearch, argues that personalization is a prerequisite for true usability and proposes a three-level approach.

-

Microsoft Research Paper on Agent-Based Interpretability for AI

NEW paper from Microsoft Research. (bookmark it) The entire interpretability literature is built around human readers. As more analysis gets delegated to agents, the right target of interpretability shifts. This paper is a recipe for designing tools that agents can actually

-

New Microsoft Research Paper on Long-Horizon Agent Generalization

NEW paper from Microsoft Research. Nice study on long-horizon agent generalization. (bookmark it) The team runs a study where the only variable is task horizon length. They keep the same decision rules and reasoning structure but vary the sequence length to the goal. The main

-

Meta FAIR Autodata: Agentic System Builds Training and Eval Data Autonomously

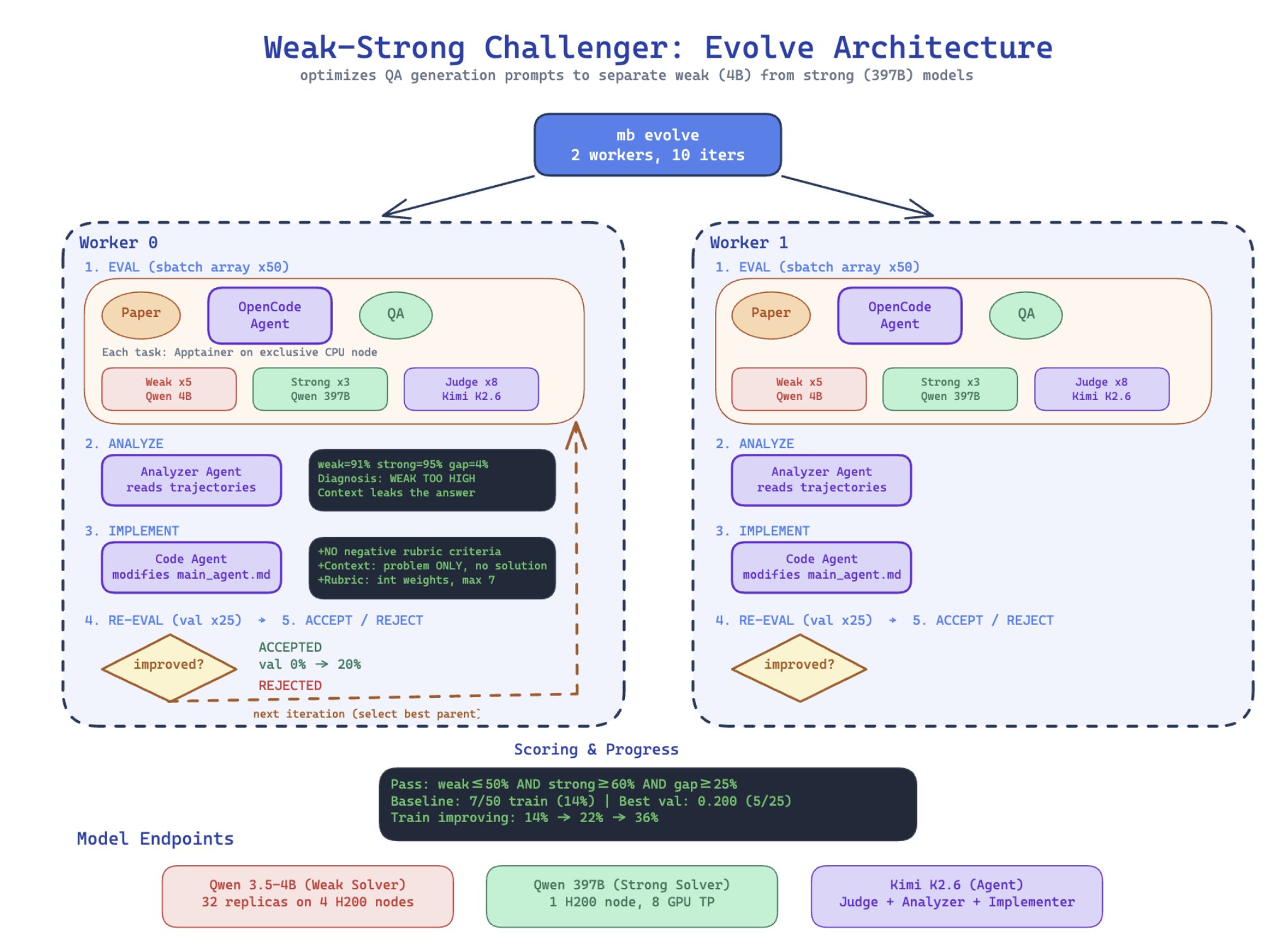

Banger paper from Meta FAIR. They introduce Autodata, an agentic data scientist that builds high-quality training and evaluation data autonomously. The headline result: on a CS research QA task, an Agentic Self-Instruct loop produces a 34-point gap between weak and strong

-

Wiki-Builder Plugin Available on GitHub via DAIR Academy

Find the wiki-builder skill here: https://github.com/dair-ai/dair-academy-plugins/tree/main/plugins/wiki-builder

… -

New Skill Released to Build LLM Wikis with AI Agents

We have released a little skill to help you build LLM Wikis with your agents.

-
