A software engineer with 10 years of experience says he builds entire side projects from his phone using Claude Code without reading a single line of code. Sounds reckless. Then you read his rules:

→ Always start in plan mode. Read the plan. Then read it again.

→ If any part

PROMPT ENGINEERING

-

Engineer Builds Side Projects Using Claude Code from Phone

-

Seven Decision-Making Prompts and Frameworks

I built these 7 prompts from frameworks that have driven billions of dollars in decisions across companies like Amazon, Toyota, and Berkshire Hathaway. The prompts are free. The thinking systems behind them are what separate good decisions from expensive mistakes. Also check out

-

Claude Decision Intelligence Mode: 7 Prompts for Business Problems

BREAKING: Claude has a feature called Decision Intelligence Mode. You can use it to solve any business or career problem using 7 proven frameworks that consultants charge $500/hour to apply. Here are 7 prompts to access it:

-

AI Video Editing: Real Footage Transformed via Prompt

One day soon.

fofr (@fofrAI), May 23, 2026

I took a video of a Waymo journey in Palo Alto where I moved the camera to look out the window and back again, then edited it with the prompt:

> Make it Westminster Bridge

✅ driving on the left

✅ bridge looks accurate

✅ westminster, big ben, london eye well… pic.twitter.com/49GtJSfDqN

-

Identifying Workflows for AI Skills and Subagents

Copy and paste this into Codex: “Look through my recent Codex sessions and identify repeated workflows or repeated asks. For anything I keep doing manually, suggest:

1. a skill if it is a reusable workflow

2. a custom subagent if it is a bounded role or investigation task

-

GPT-5 Model Selection and Cost Optimization Strategy

Unironically, I do think they’d be at least ~40% cheaper if they used GPT 5.4 / 5.3-Codex with the Codex harness. A lot depends on the model, harness, caching, and compaction, and on how you use it for your use case. Happy to cover the next month in the name of science! 🙂

-

Codex: Turning Repeated Prompts into Reusable AI Skills

Codex tip: ask Codex to look through your past sessions and turn repeated prompts into reusable skills and subagents. You’ll probably find the same requests showing up again and again:

“check why CI failed”

“review this PR”

“write the changelog”

“trace this bug”

“clean up this diff”

-

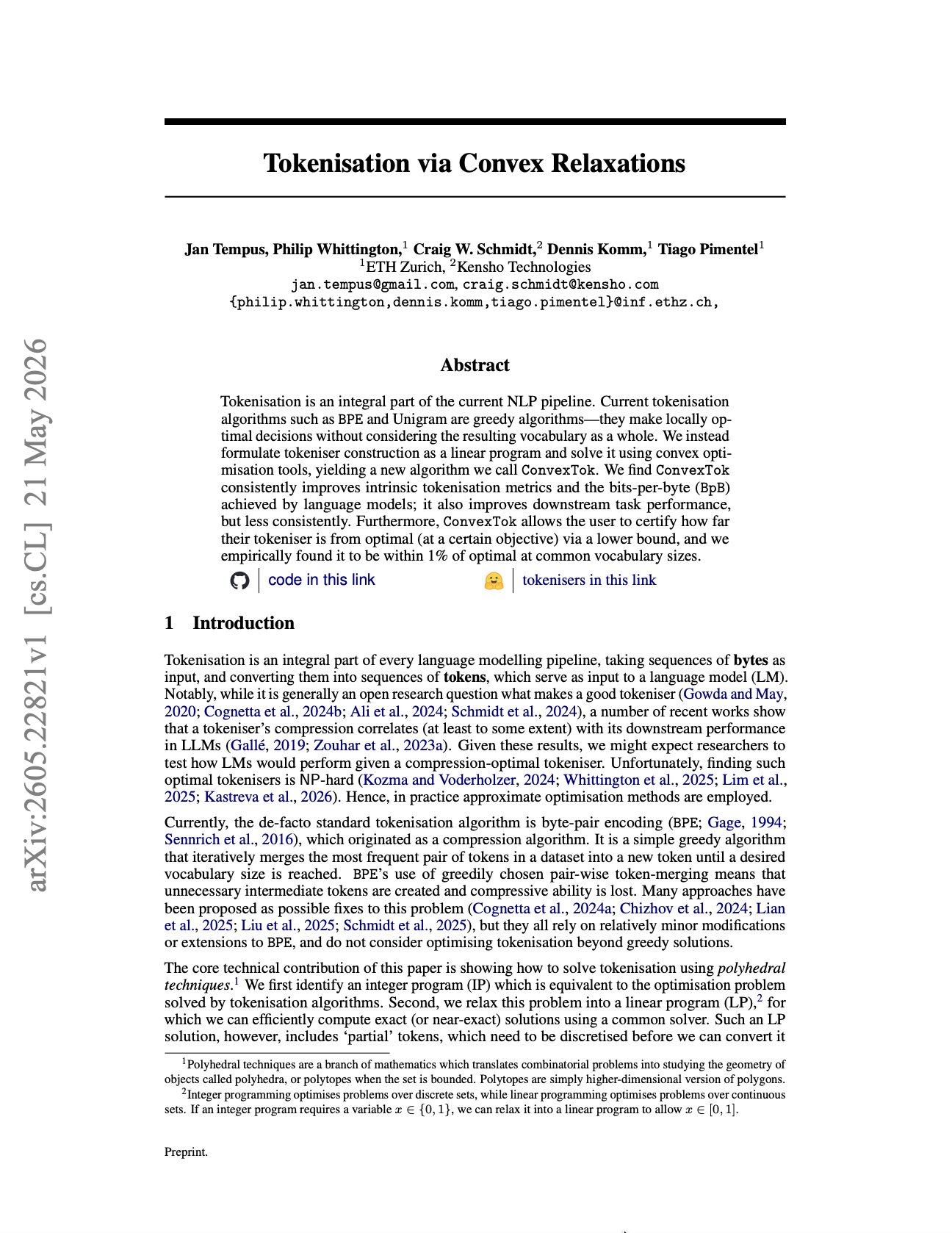

ConvexTok vs BPE: moving tokenization toward optimality

The key distinction: BPE gives us a strong procedure. ConvexTok gives us a procedure, an optimization relaxation, and a certificate. That moves tokenisation from engineering folklore toward measurable optimality.

-

Paper: Tokenisation via Convex Relaxations reframes tokenizers

The tokenizer is an architectural prior disguised as preprocessing. And almost everyone has been treating it like plumbing. A new paper by Jan Tempus, Philip Whittington, Craig W. Schmidt, Dennis Komm, and Tiago Pimentel changes the frame: Tokenisation via Convex Relaxations

-

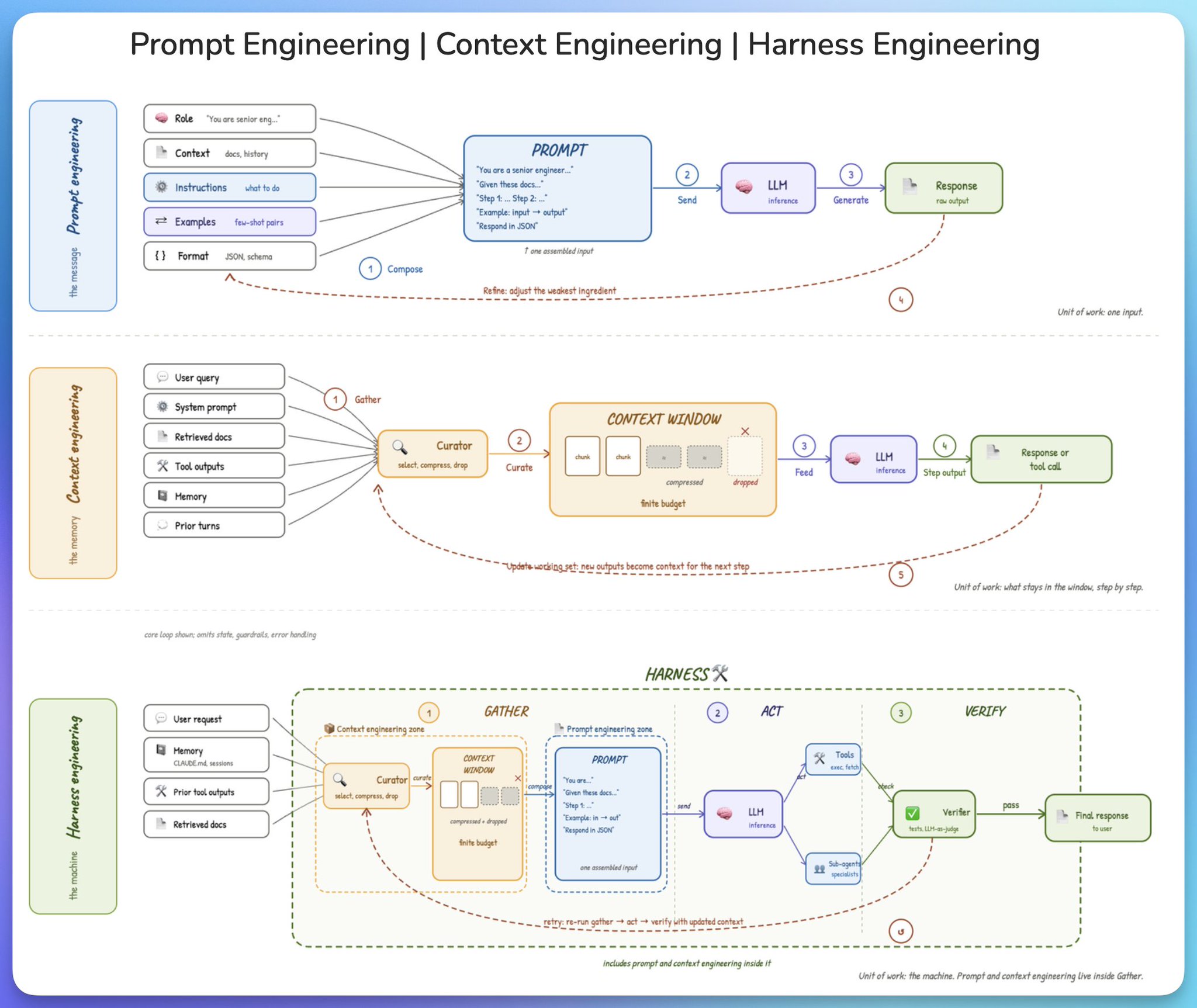

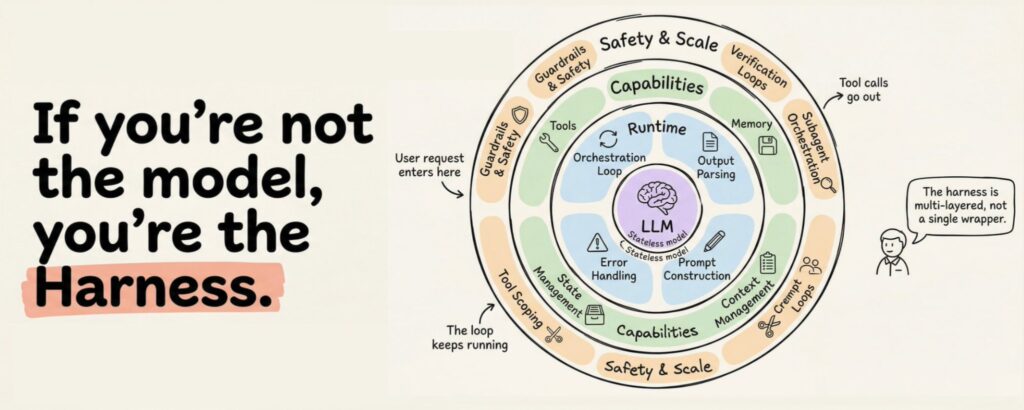

Prompt Engineering, Context, and Harness Engineering Explained

from prompt to context to harness engineering. three terms keep coming up in AI engineering, and they get conflated all the time. here is the cleanest way to understand what each one is and how they fit together.

prompt engineering is the message.