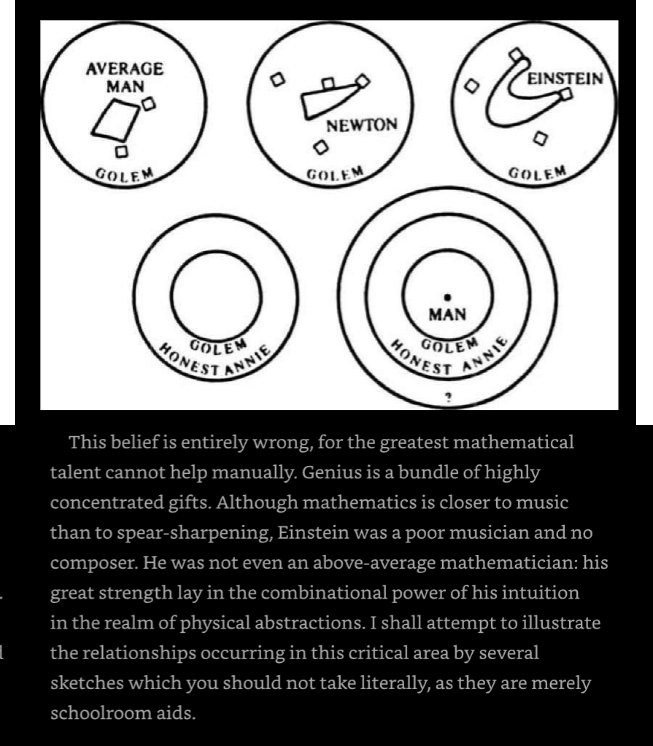

Fun new feature landing in Nano Banana 2 today:

video-to-image

Pass a video or YouTube URL to Nano Banana 2 along with your prompt, and it'll use the video as context to make the image.

> create a comic strip from this video pic.twitter.com/1raoUB1UlT

— fofr (@fofrAI) 28 May 2026
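Here's a minimal sketch of what a video-to-image request might look like. It assumes Nano Banana 2 is reached through the Gemini API `generateContent` endpoint and accepts a YouTube link as a `file_data` part, the way Gemini's video-understanding models do. The model ID is a hypothetical placeholder because the post doesn't name one, and the video URL is made up. The code only builds the endpoint URL and JSON body and sends nothing.

```python
import json

# Hypothetical placeholder: the post does not give Nano Banana 2's API model ID.
MODEL_ID = "YOUR-NANO-BANANA-2-MODEL-ID"


def endpoint(model_id: str) -> str:
    """Gemini API generateContent URL for a given model (assumed to apply here)."""
    return (
        "https://generativelanguage.googleapis.com/v1beta/models/"
        f"{model_id}:generateContent"
    )


def build_video_to_image_request(video_url: str, prompt: str) -> dict:
    """Build an (assumed) generateContent JSON body that pairs a video or
    YouTube URL with a text prompt and asks for image output."""
    return {
        "contents": [
            {
                "parts": [
                    # The video, passed by URI, is the context for the image.
                    {"file_data": {"file_uri": video_url}},
                    # The text prompt describing the image to make.
                    {"text": prompt},
                ]
            }
        ],
        # Request image output (text may come back alongside it).
        "generationConfig": {"responseModalities": ["TEXT", "IMAGE"]},
    }


if __name__ == "__main__":
    body = build_video_to_image_request(
        "https://www.youtube.com/watch?v=VIDEO_ID",  # hypothetical video URL
        "create a comic strip from this video",
    )
    print(endpoint(MODEL_ID))
    print(json.dumps(body, indent=2))
```

To actually run it, you'd POST that body to the endpoint with your API key (for example in an `x-goog-api-key` header) and read the image from the returned parts.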
