April 7th marked a watershed moment in AI development: Anthropic announced it won’t release Claude Mythos publicly due to its cybersecurity capabilities, while the company’s run-rate revenue exploded to $30B. Meanwhile, open-source alternatives are surging and AI agents are becoming increasingly autonomous across industries.

The model too dangerous to release

Let’s start with what should terrify you.

Anthropic just announced Claude Mythos Preview, a model so capable at finding software vulnerabilities that they’re not releasing it to the public. Instead, they’ve created Project Glasswing, partnering with 40+ companies including Amazon, Apple, Microsoft, and NVIDIA to give cybersecurity defenders a head start.

The implications are staggering. According to Anthropic executives, Mythos has already found vulnerabilities in every major operating system and web browser—some that literally decades of security researchers missed. We’re talking about flaws in the systems that run our entire digital infrastructure.

This isn’t your typical “AI safety” theater. When a company leaves money on the table by refusing to sell their best product, you know something fundamental has shifted. Anthropic is committing $100M in usage credits to help secure critical software, effectively subsidizing the defense against their own creation.

The message is clear: we’ve crossed a line where AI capabilities outpace our ability to deploy them safely.

The revenue explosion nobody saw coming

While withholding their most powerful model, Anthropic announced that its run-rate revenue hit $30 billion, up from $9 billion at the end of 2025. That’s a 233% increase in a little over three months.

To put this in perspective: they went from $1B to $30B in just 15 months. OpenAI, meanwhile, sits at roughly $25B run-rate. Anthropic didn’t just catch up; they pulled ahead.
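The growth figures above are easy to check by hand. Here is a minimal sketch that uses only the run-rate numbers quoted in this article. The function names are illustrative, and the monthly rate assumes smooth compounding, which real revenue does not follow.

```python
def percent_increase(start: float, end: float) -> float:
    """Percentage increase going from start to end."""
    return (end - start) / start * 100.0


def implied_monthly_growth(start: float, end: float, months: int) -> float:
    """Constant monthly growth rate that would take start to end in `months`.

    Assumption: smooth compounding, used here only as a summary statistic.
    """
    return (end / start) ** (1.0 / months) - 1.0


# Run-rate revenue in billions of dollars, as quoted in the article.
print(f"{percent_increase(9.0, 30.0):.0f}% increase from $9B to $30B")
print(f"{implied_monthly_growth(1.0, 30.0, 15):.1%} implied monthly growth over 15 months")
```

Going from $1B to $30B in 15 months works out to roughly 25% compounded growth per month, which is why the jump looks so abrupt on a chart.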

This revenue surge coincides with their massive partnership with Google and Broadcom for “multiple gigawatts” of TPU capacity starting in 2027. Google’s arsenal of roughly 5 million H100-equivalent accelerators suddenly makes perfect sense as a strategic advantage.

But here’s the paradox: as AI becomes more powerful and expensive to run (some users report spending $200-1,000 daily on frontier models), the ultimate goal is driving costs down to $20/month for consumers. The future shape of the tech industry depends on solving this economic puzzle.
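To get a feel for the size of that gap, here is a rough back-of-the-envelope sketch. It assumes a heavy user spends the quoted daily amount every day of a 30-day month, which is an assumption of this example and not a figure from any vendor.

```python
DAYS_PER_MONTH = 30  # assumption: the daily spend is sustained all month


def monthly_cost(daily_spend: float) -> float:
    """Monthly bill for a user spending `daily_spend` dollars per day."""
    return daily_spend * DAYS_PER_MONTH


def cost_gap(daily_spend: float, target_monthly: float) -> float:
    """How many times the monthly bill exceeds the consumer price target."""
    return monthly_cost(daily_spend) / target_monthly


# Daily spend range and the $20/month target quoted in the article.
for daily in (200.0, 1000.0):
    print(f"${daily:,.0f}/day is {cost_gap(daily, 20.0):,.0f}x a $20/month plan")
```

Under those assumptions the heaviest users are paying 300 to 1,500 times the consumer price point, which is the distance the industry would need to close.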

Open source fights back

While Anthropic restricts access to their most powerful model, the open-source community is having its moment. Models like MiniMax 2.7, Qwen 3.6, and GLM 5 are delivering 75-80% of closed model performance at 10x lower cost.

Usage is exploding on these alternatives. VoxCPM 2 from OpenBMB just revolutionized text-to-speech with true concept-to-voice generation—just describe the voice you want, and the 2B parameter model builds it. No more fixed speaker presets.

The Hermes Agent from Nous Research is gaining serious traction, with users praising its superior self-healing capabilities compared to OpenClaw. When models can remember and learn from their mistakes automatically, the gap between open and closed models narrows fast.

Even more intriguing: someone just released a Gemma 4 reasoning adapter trained purely on Opus data. A tiny QLoRA adapter, trained in one hour on a single GPU, that boosts math, code, and reasoning capabilities. The democratization of AI capabilities is accelerating.

AI agents escape the sandbox

Forget chatbots. We’re witnessing the emergence of truly autonomous AI agents that don’t just respond—they act.

Agent swarms are now reality: master agents create, manage, and modify worker agents to complete massive projects. Entire SaaS applications with full functionality can be built through agent coordination. This isn’t theoretical—it’s shipping this week.

The interface is evolving beyond text prompts. Context is becoming the real interface—screenshots, documents, email threads. AI systems now respond based on what’s actually in front of you, mimicking how executives and operators really work.

But the most significant shift? Agents are breaking free from desktop constraints. Pocket lets you control your local files and browse the web from anywhere via chat. QoderWork doesn’t just chat—it opens files, analyzes data, and runs code on your machine. Chatbase Voice now handles phone calls, emails, and website chat through a single agent.

We even have agents controlling remote iOS browsers with screen sharing. The boundaries between human and machine operation are dissolving.

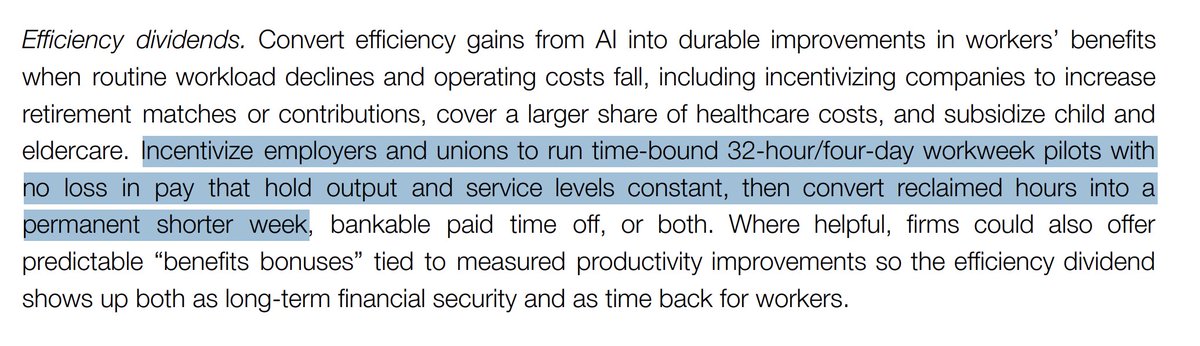

The productivity revolution is here

Most people still use AI like a smarter search engine. They’re missing the point entirely.

The real productivity leap isn’t better answers—it’s fewer handoffs. When AI can summarize, draft, organize, and act across your apps, work feels 5x faster. The old workflow of switching tabs, copying and pasting, rewriting context, and repeating admin work is becoming obsolete.

The new workflow is simpler: “read this and summarize it,” “reply politely,” “turn my thoughts into structured notes.” Less typing, less friction, more momentum.

Some companies are already claiming you no longer need a COO, CMO, or CXO to scale—one link and their AI becomes your entire executive team. Whether that’s hyperbole or prophecy, the transformation of business operations is undeniable.

The hallucination reality check

Gary Marcus continues his crusade against AI hype, and the data backs him up. Despite claims that hallucination rates are “next to nil,” current top LLMs still hallucinate 4.6% of the time on known benchmarks, or about once every 22 prompts.

To put this in perspective: if commercial airlines crashed at the rate LLMs hallucinate, we’d see roughly 1.89 million crashes per 41 million flights instead of the actual 7. Imagine if your accountant or pilot hallucinated 4.6% of the time.
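The arithmetic behind that comparison is simple enough to verify. This is a minimal sketch using only the 4.6% rate and the 41 million flights quoted in this article; the function names are illustrative.

```python
def prompts_per_hallucination(rate: float) -> float:
    """Average number of prompts between hallucinations at a given rate."""
    return 1.0 / rate


def expected_failures(rate: float, trials: int) -> float:
    """Expected number of failures if every trial fails at `rate`."""
    return rate * trials


HALLUCINATION_RATE = 0.046  # 4.6%, as quoted in the article
FLIGHTS = 41_000_000        # flight count quoted in the article

print(f"one hallucination every {prompts_per_hallucination(HALLUCINATION_RATE):.1f} prompts")
print(f"{expected_failures(HALLUCINATION_RATE, FLIGHTS):,.0f} hypothetical crashes")
```

A 4.6% rate means one error roughly every 22 prompts, and applied to 41 million flights it gives about 1.89 million failures.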

Google’s tolerance for a 10% error rate in AI search, something that would never have been acceptable pre-ChatGPT, shows how fundamentally the company has changed. When you process 5 trillion search queries annually, a 10% error rate applied across all of them would mean roughly 500 billion wrong answers a year.

This isn’t just academic nitpicking. These error rates matter when AI systems gain real-world autonomy.
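One way to see why autonomy raises the stakes: an agent chains many model calls, so a small per-call error rate compounds. The sketch below assumes each step fails independently at the same 4.6% rate. That independence is an assumption of this example, not a measured property of any agent, and real errors are likely correlated.

```python
def prob_at_least_one_error(per_step_rate: float, steps: int) -> float:
    """Chance that a chain of `steps` calls contains at least one error.

    Assumption: every step errs independently with the same probability.
    """
    return 1.0 - (1.0 - per_step_rate) ** steps


PER_STEP_RATE = 0.046  # 4.6%, the benchmark figure quoted in the article

for steps in (1, 10, 25, 50):
    chance = prob_at_least_one_error(PER_STEP_RATE, steps)
    print(f"{steps:>2} steps: {chance:.0%} chance of at least one hallucination")
```

Under that independence assumption, a 10-step task already has better than a one-in-three chance of containing at least one hallucination.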

The robotics breakthrough

While software agents evolve, physical robotics is having its moment. ByteDance Seed achieved zero-shot sim-to-real transfer for dexterous hand manipulation: robots learned complex maneuvers purely in simulation and carried them over to real hardware without real-world fine-tuning.

Zhejiang University pushed robot flight forward with jet-propelled humanoids. Gino 1 aims to master every warehouse task. X7 humanoid robots are dispensing medicines in hospitals. The applications are multiplying across industries.

Most significantly, AGIBOT released AGIBOT WORLD 2026, an open-source dataset built entirely from real-world scenarios covering key embodied AI research directions. When robotics companies start open-sourcing comprehensive real-world datasets, the field accelerates exponentially.

What’s next?

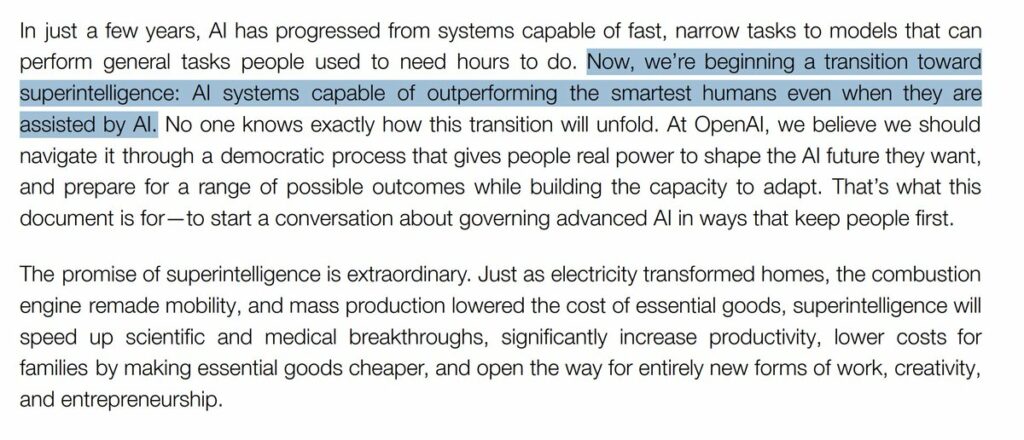

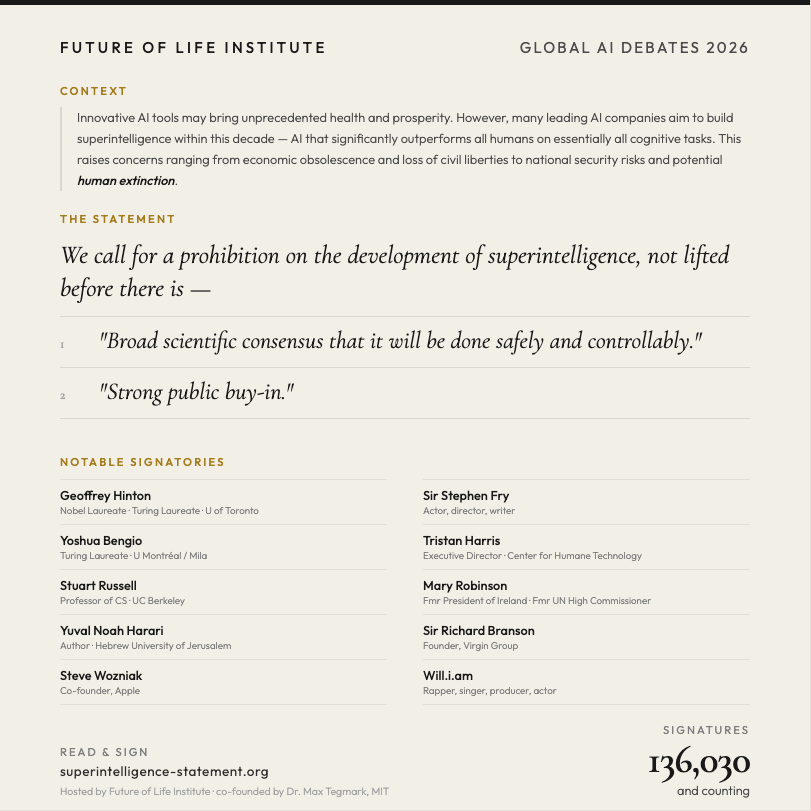

We’re witnessing a fundamental shift in AI deployment. The days of releasing every model publicly are ending. The most capable systems will remain restricted while open alternatives close the gap.

Revenue models are exploding for those with the computational resources to serve frontier models, but the ultimate prize goes to whoever can democratize access affordably. The tension between capability and accessibility will define the next phase of AI development.

Agent autonomy is expanding beyond software into physical systems. The question isn’t whether AI will reshape work—it’s how quickly we can adapt our institutions, security practices, and economic models to keep pace.

The models are escaping the lab. The question is whether we’re ready for what comes next.

Photo: Steve Johnson / Unsplash