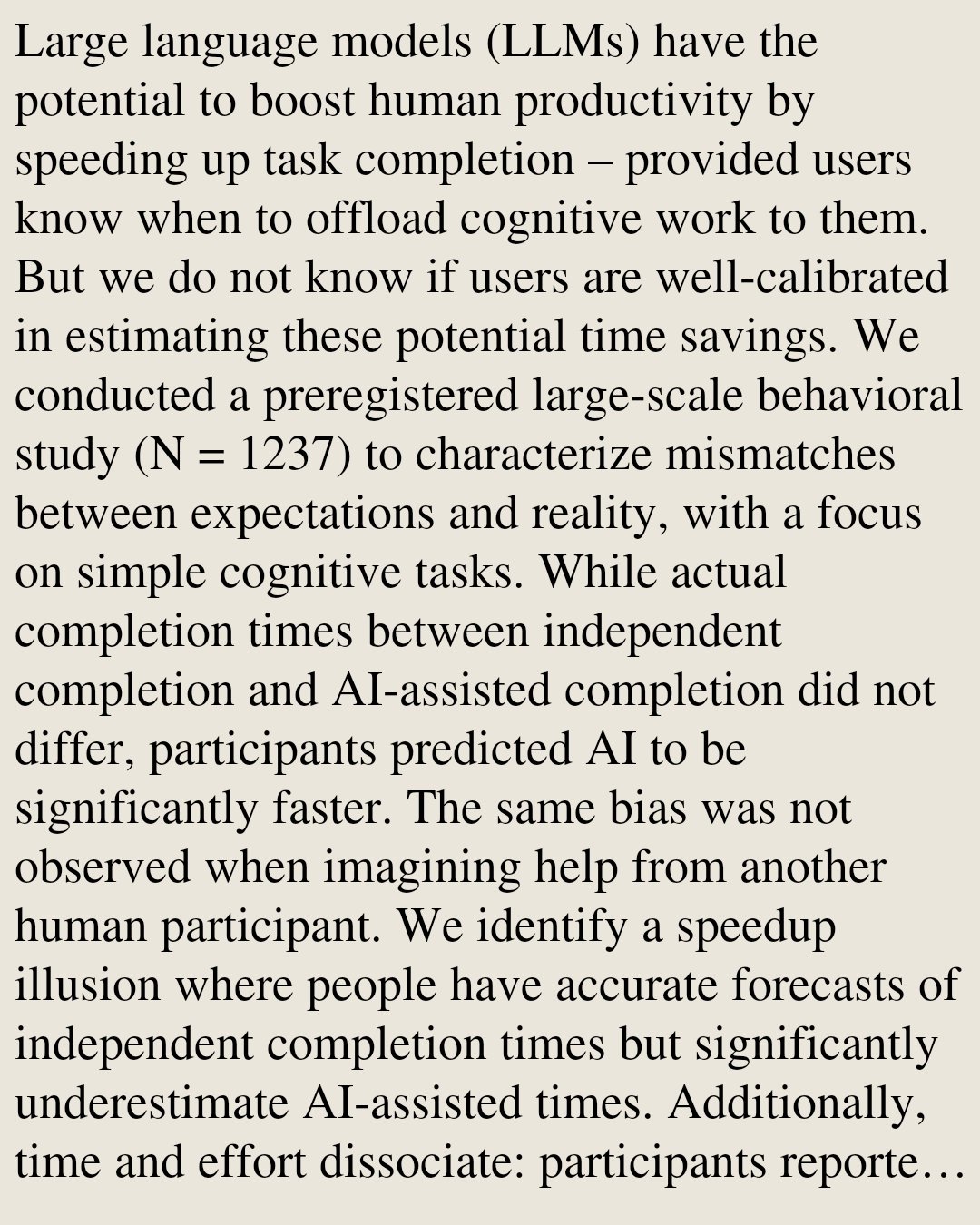

Thinking of AI as a productivity booster for prior workflows is the wrong framing. Like all of the previous waves of computerization/softwarization, AI is a tool that lets you do new things in new ways.

@fchollet

-

Inspiration from Bible + Futuristic Tech Mix

NN learning is not search, and most NN architectures don't encode Turing machines. And gradient descent is not brute force, it's actually very efficient. So this is a lot of nonsense.
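
As a rough illustration of the efficiency point above, here is a minimal sketch comparing gradient descent with exhaustive grid search on a toy 4-dimensional quadratic. Everything in it (the `TARGET` vector, the learning rate, the grid resolution, the function names) is an assumption chosen for the example, and a convex toy problem says nothing definitive about real neural-network training. It only shows how the cost of the two approaches scales differently.

```python
import itertools

# Hypothetical optimum, chosen only for illustration.
TARGET = [0.3, -0.7, 0.5, 0.1]


def loss(w):
    """Squared distance between w and TARGET."""
    return sum((wi - ti) ** 2 for wi, ti in zip(w, TARGET))


def grad(w):
    """Analytic gradient of the loss with respect to w."""
    return [2.0 * (wi - ti) for wi, ti in zip(w, TARGET)]


def gradient_descent(lr=0.25, tol=1e-3, max_steps=1000):
    """Follow the negative gradient from the origin until loss <= tol."""
    w = [0.0] * len(TARGET)
    steps = 0
    while loss(w) > tol and steps < max_steps:
        w = [wi - lr * gi for wi, gi in zip(w, grad(w))]
        steps += 1
    return w, steps


def grid_search(points_per_axis=11):
    """Brute force: evaluate the loss at every point of a regular grid."""
    axis = [-1.0 + 2.0 * i / (points_per_axis - 1) for i in range(points_per_axis)]
    best, best_loss, evals = None, float("inf"), 0
    for candidate in itertools.product(axis, repeat=len(TARGET)):
        evals += 1
        current = loss(candidate)
        if current < best_loss:
            best, best_loss = list(candidate), current
    return best, evals


gd_w, gd_steps = gradient_descent()
grid_w, grid_evals = grid_search()
print(f"gradient descent: {gd_steps} steps, loss {loss(gd_w):.6f}")
print(f"grid search: {grid_evals} evaluations, loss {loss(grid_w):.6f}")
```

The grid needs `points_per_axis ** len(TARGET)` evaluations, so its cost grows exponentially with the number of parameters, while the number of gradient steps in this toy setting does not depend on dimension in the same way.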

-

Grok AI Feature Now Available for Premium and Premium+ Subscribers

There are only two honest metrics when it comes to benchmarking intelligence: novelty and efficiency. You don't need intelligence to solve a known problem (only memory). And you don't need intelligence to solve a problem via brute force. But to solve a novel problem efficiently,

-

Deep Learning with Python Now Free to Read Online

I wrote Deep Learning with Python to be the definitive guide to how deep learning works and how to best make use of it. Tens of thousands of people got their career start via this book. 120,000 copies sold, and downloaded by millions more. And now it's free to read online:

-

ARC Prize Foundation Opens Two AGI-Focused Engineering Roles

If you want to help the world make sense of AGI and accelerate its arrival, consider joining the ARC Prize Foundation. Two roles are currently open: Game Platform Engineering Lead, and Model Testing & Analysis Lead.

-

Blog Post Analyzes Frontier Model Failure Modes in Detail

Make sure to read the blog post for a detailed analysis of frontier model failure modes:

-

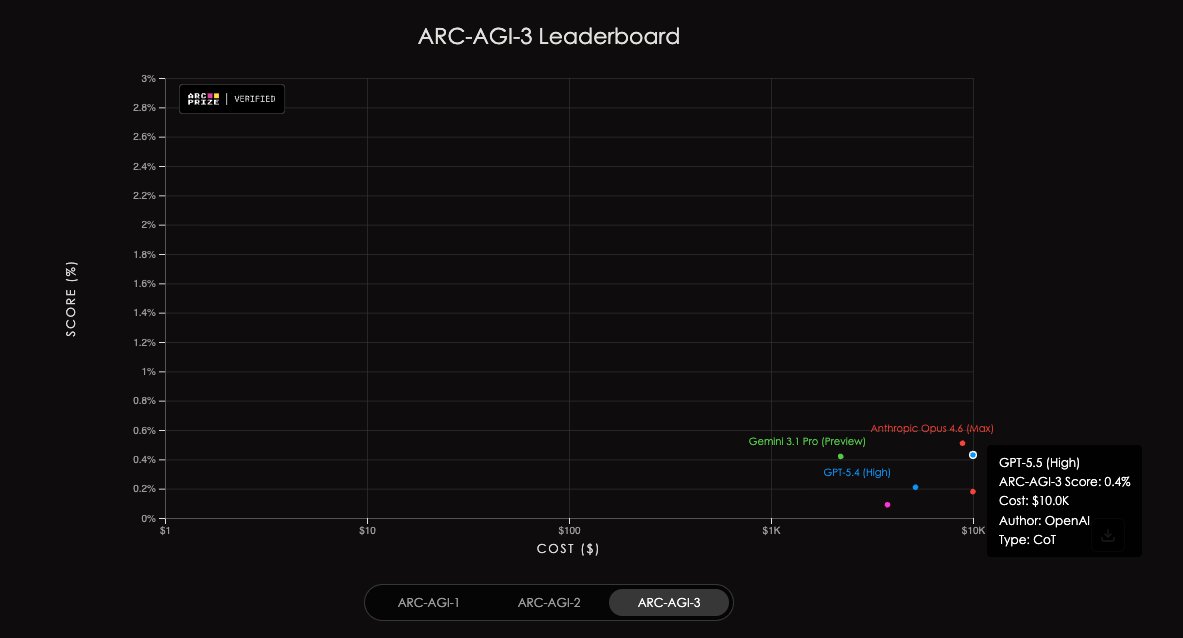

ARC-AGI-3 Scores Stay Below 1% as Models Evolve

The latest crop of models remains below 1% on ARC-AGI-3 — for now. Where will the scores be by the end of the year?

-

RL Boosts Known Tasks But Causes Hallucinations on Unknown Ones

RL is a bit of a double-edged sword: in known territory performance increases, but in unknown territory the model tends to hallucinate that it is performing a completely different task, one it was trained on.

-

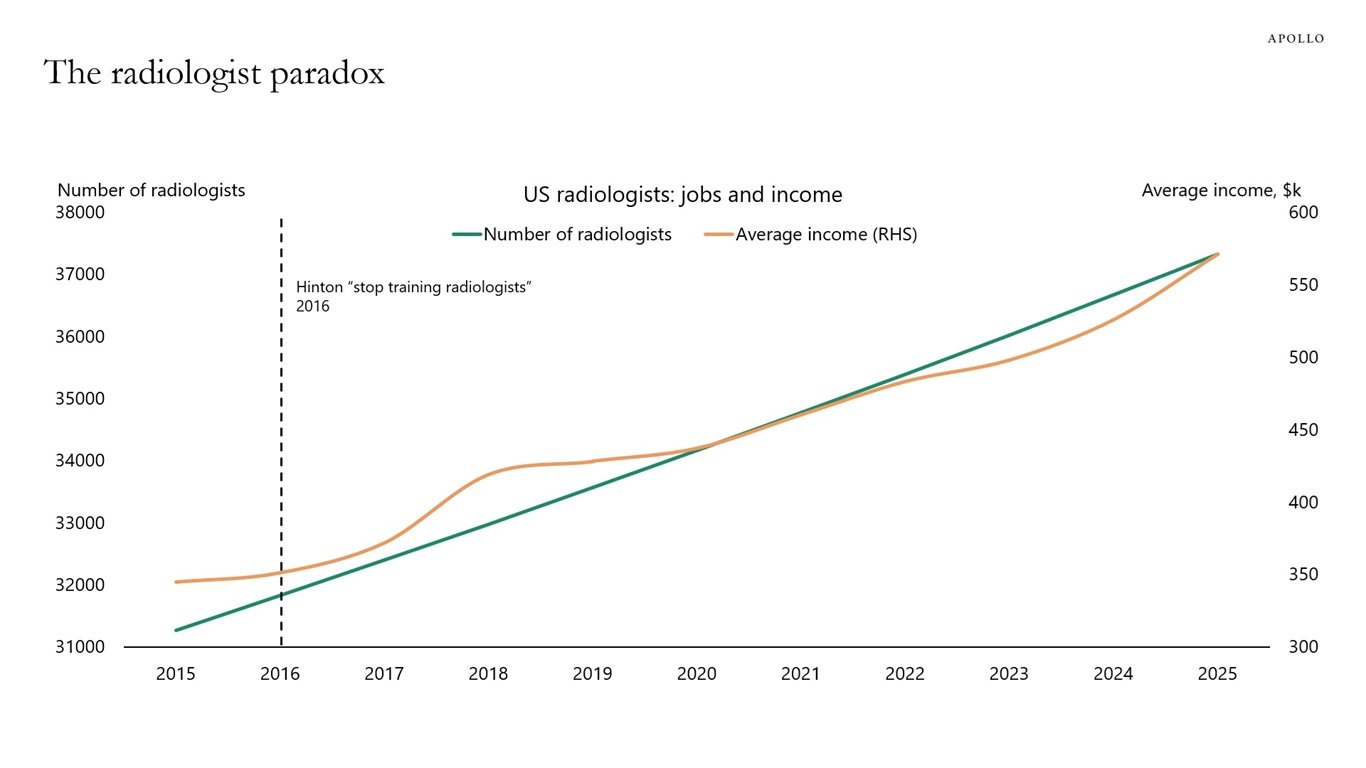

AI Automates Tasks, Not Jobs: Autonomy Limitations

AI automates tasks, not jobs, and when a task gets cheaper, demand for the job grows. AI cannot automate jobs end-to-end because it lacks autonomy and cannot operate without supervision. There is still not a single job from 2022 that can be performed end-to-end by AI, not even

-

Keras Kinetic v0.0.2 Alpha: Modal-like TPU Training API

Keras Kinetic has a new alpha release: v0.0.2! Including a new docs website: http://kinetic.readthedocs.io

Kinetic is my favorite new release from the Keras team: a super simple Modal-like API to run training jobs on TPU.