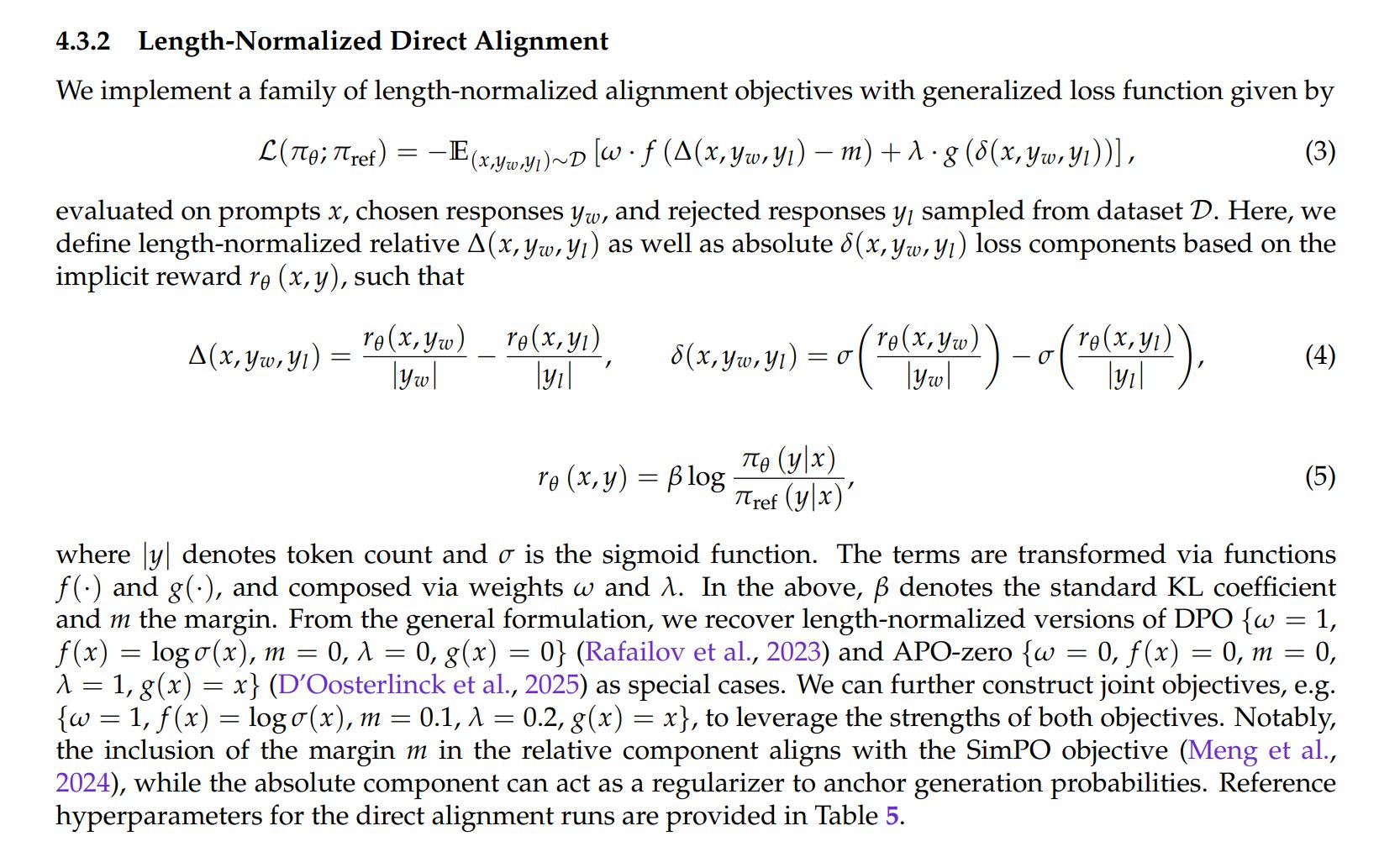

We're quite fond of our length-normalized direct alignment at Liquid (see https://arxiv.org/abs/2511.23404)

@maximelabonne

-

Liquid AI Introduces Length-Normalized Direct Alignment Method

-

AgentTrove: New Agentic Dataset with 1.7M Samples Released

AgentTrove: new agentic dataset with 1.7M samples. Thanks to OpenThoughts for this great work! The @huggingface Hub needs more agentic datasets, keep 'em coming!

-

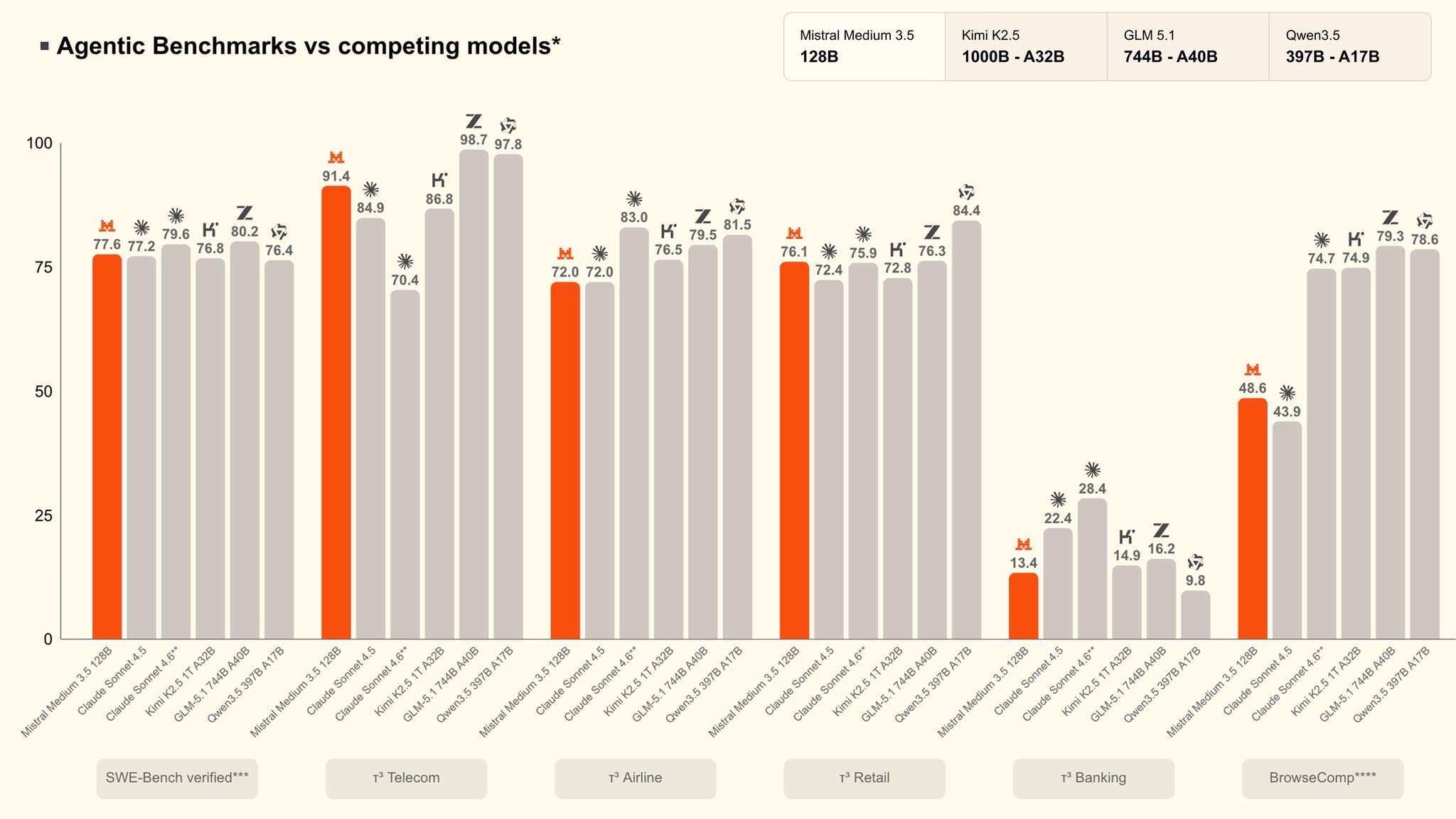

Tau3-Bench Timing Concerns Against Competing Models

Not sure if this is a good look since most of the competing models were released BEFORE Tau3-Bench

-

Pixtral Scaled-Up Model: Tradition Over Modern AI Approaches

Reject modernity, embrace tradition (it's a scaled-up Pixtral)

-

Embedding Layers in Small Models: Architecture and Training Optimization

Did you know that the embedding layer can contain 63% of total model parameters? In this talk, I present the unique challenges of small models, from architecture (don't build giant embedding layers) to post-training (how to fix doom looping). ↓ Slides in the comments ↓
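To make the embedding-share point concrete, here is a minimal sketch of how that fraction could be estimated. The function names, the `tied` flag, and the toy numbers are all hypothetical and do not describe any particular model. The sketch assumes the embedding table holds `vocab_size * hidden_size` parameters, doubled when the output head is not tied to the input embeddings.

```python
def embedding_params(vocab_size: int, hidden_size: int, tied: bool = True) -> int:
    """Parameters in the token embedding table.

    Assumption: an untied output head adds a second table of the same size.
    """
    table = vocab_size * hidden_size
    return table if tied else 2 * table


def embedding_share(
    vocab_size: int,
    hidden_size: int,
    non_embedding_params: int,
    tied: bool = True,
) -> float:
    """Fraction of total parameters that sit in the embedding layer(s)."""
    emb = embedding_params(vocab_size, hidden_size, tied)
    return emb / (emb + non_embedding_params)


if __name__ == "__main__":
    # Hypothetical toy configuration, chosen only to keep the arithmetic obvious.
    share = embedding_share(vocab_size=1000, hidden_size=10, non_embedding_params=10_000)
    print(f"{share:.0%}")
```

Because the embedding table grows with the vocabulary while the rest of the network shrinks with model size, the share climbs quickly as models get smaller.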

-

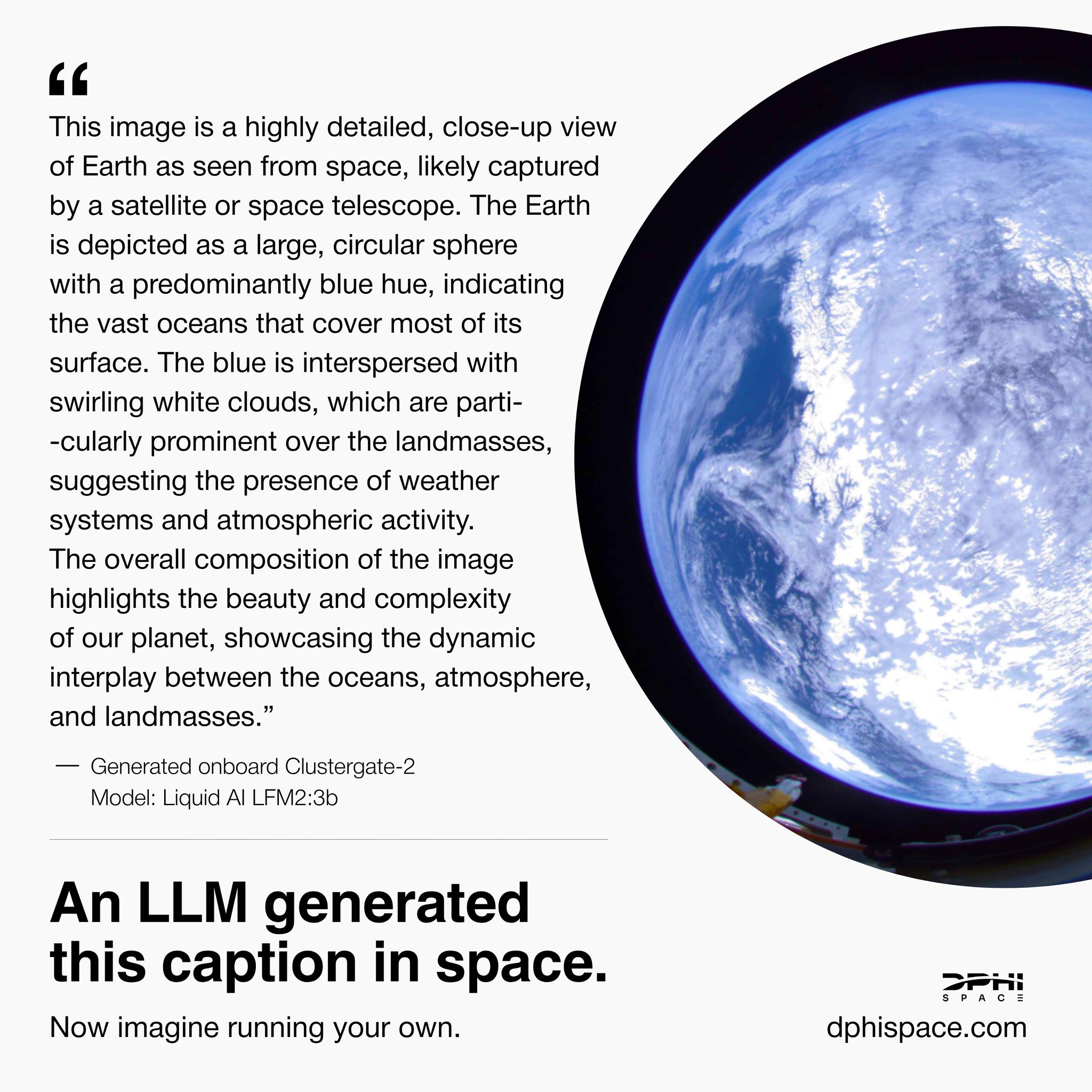

LFM2-VL Language Model Deployed on Satellite Successfully

This is so cool: LFM2-VL deployed directly on a satellite! Building HAL 9000 one step at a time.

-

Liquid AI showcases at ICLR Rio with Mercedes partnership

Rio was amazing, I had such a fun time at @iclr_conf! @liquidai presented many papers, managed a busy booth, announced a partnership with Mercedes, and organized a (delicious) dinner event. 10/10 would do it again!

-

Tokenizer: The Real Business Model Behind AI Models

The model might be the product, but the tokenizer is the business plan

-

Anthropic’s Hidden 30% Cost Increase via Tokenizer Change

Turns out Anthropic basically snuck in a 30% cost increase by changing the tokenizer