TL;DR: Top hacker calls Anthropic’s bluff.

SAFETY

-

Dutch regulators approve Tesla FSD Supervised in Netherlands

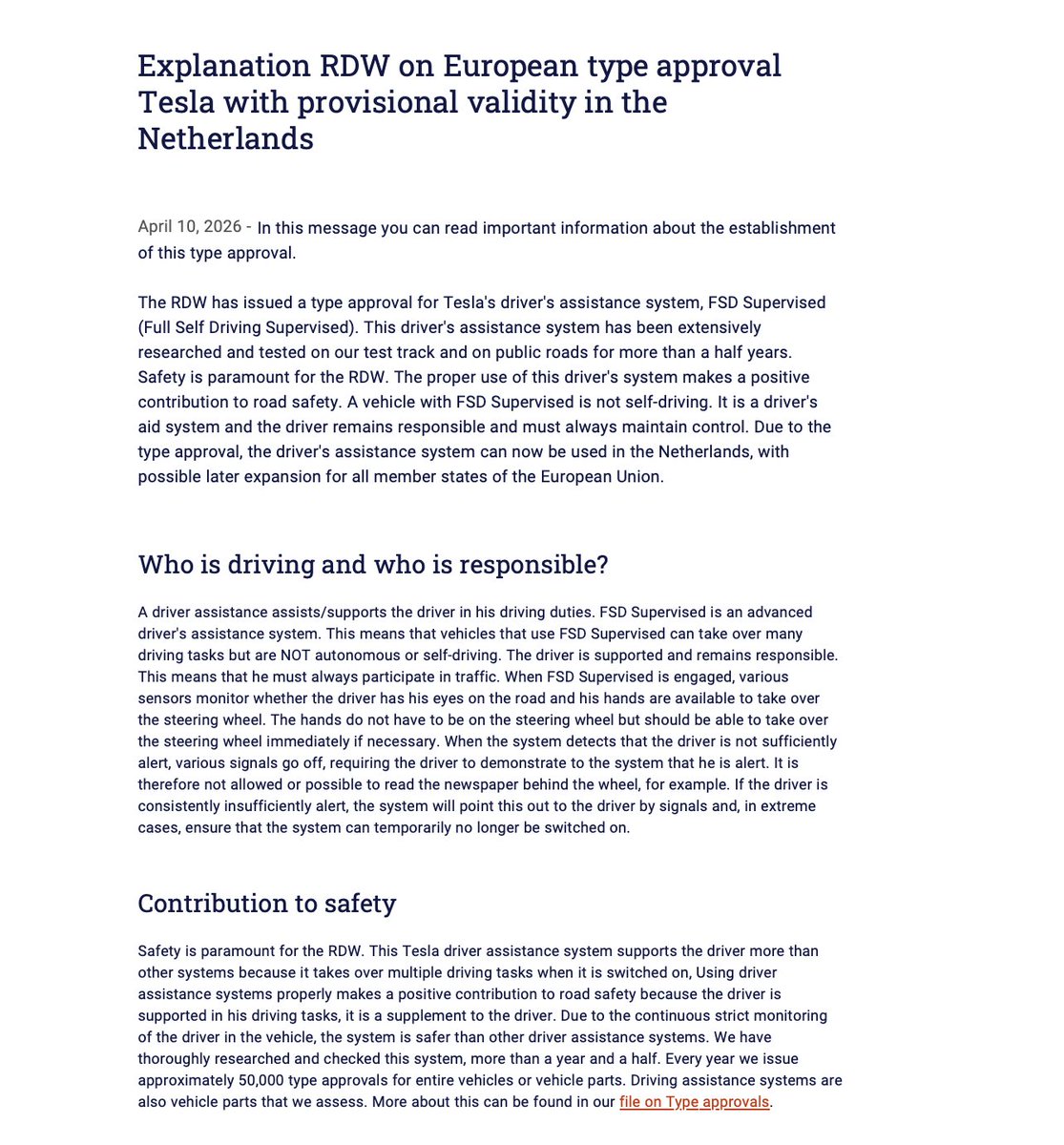

NEWS: Dutch regulators (RDW), which just approved @Tesla FSD (Supervised) in the Netherlands, have just issued an official statement: "Due to the continuous strict monitoring of the driver in the vehicle, the system is safer than other driver assistance systems. We have thoroughly researched and checked this system, more than a year and a half. The RDW has issued a type approval for Tesla's driver's assistance system, FSD Supervised. This driver's assistance system has been extensively researched and tested on our test track and on public roads for more than one and a half years. Safety is paramount for the RDW. The proper use of this driver's system makes a positive contribution to road safety." This approval from the RDW clears the path for approval in other European countries. Tesla owners in the Netherlands will be receiving FSD (Supervised) on their cars shortly. Amazing day!

→ View original post on X — @scobleizer, 2026-04-10 21:21 UTC

-

AI Security Problems Outweighed by Net Positive Utility

AI will create far more security problems than it fixes, but that won't remotely make its net utility negative.

-

LLMs Fail at Poker, Nowhere Near AGI

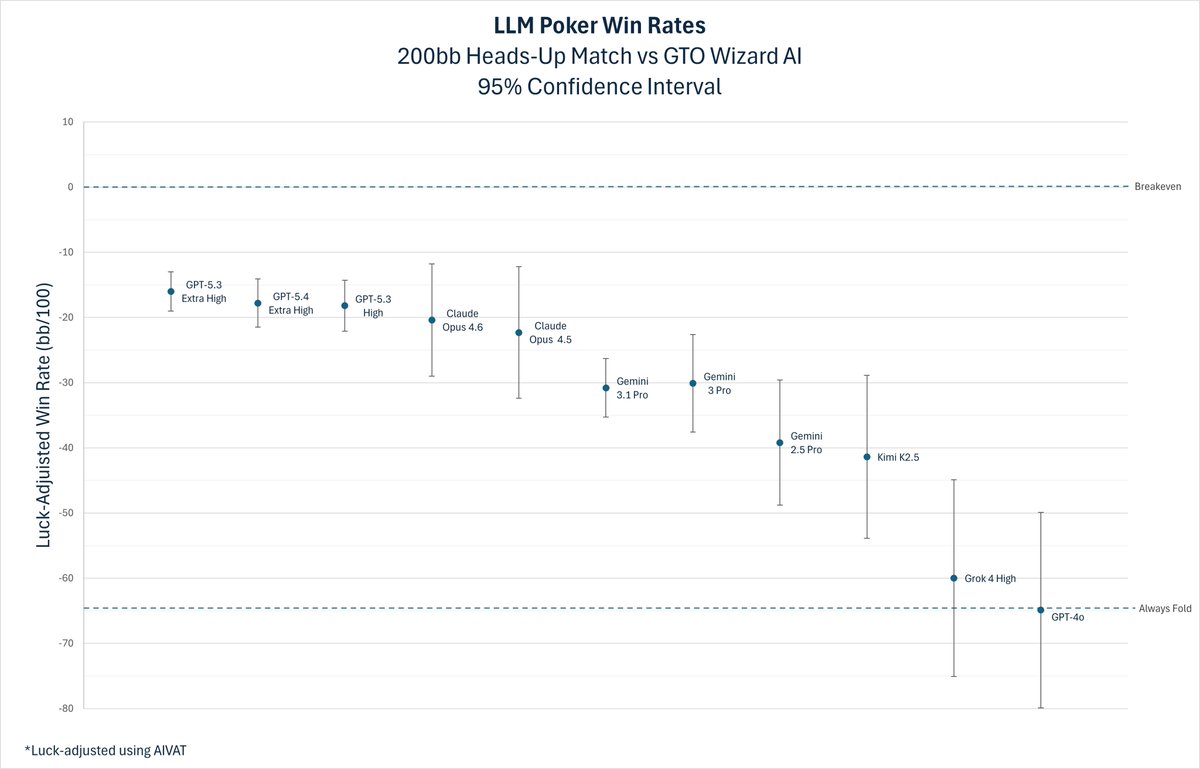

Yet another illustration of why LLMs aren’t even close to being AGI.

Tombos21 (@tombos21): The world’s best LLMs are still terrible at poker. We put each model into a 200bb heads-up NLHE match against GTO Wizard AI. The best one lost 16 bb/100. For context, a strong human pro only loses about 4 bb/100. The benchmark is public, so anyone can test their own model. — https://nitter.net/tombos21/status/2042290717499015253#m

→ View original post on X — @garymarcus, 2026-04-10 20:29 UTC

-

Mythos: Anthropic’s New AI Concerns Authorities

bloomberg.com/news/articles/…

→ View original post on X — @kimmonismus, 2026-04-10 20:12 UTC

-

US Officials Warn of Cybersecurity Risks from Anthropic’s Mythos AI

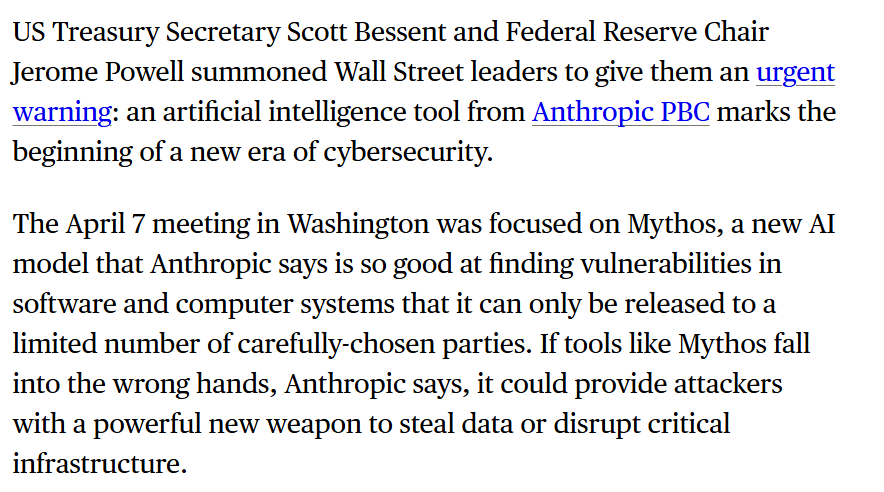

Even if you don't take Mythos seriously, US officials do. Via Bloomberg: top US officials (Jerome Powell, Scott Bessent, …) warn that Anthropic’s highly advanced AI model “Mythos” could usher in a new era of cybersecurity threats, because its ability to find system vulnerabilities is so powerful that it must be tightly restricted to prevent misuse.

→ View original post on X — @kimmonismus, 2026-04-10 20:12 UTC

-

Gary Marcus agrees: LLMs need honest narrative without hype

Indeed, if the LLM crew would just stick to this narrative, I would have a *lot* less to say 🤷♂️

Viktor (@wickviktorwick): All we want is truth. Instead of overhyping LLMs, they should keep to this narrative: LLMs are useful in certain domains (math, coding, language); they have very little common sense; don't trust them blindly; don't expect AGI based on this architecture. — https://nitter.net/wickviktorwick/status/2042677519418048574#m

→ View original post on X — @garymarcus, 2026-04-10 19:17 UTC

-

Anthropic security exploits disclosure transparency questioned

Not just cheap models. I wouldn’t be surprised if the exploits Anthropic “discovered” were already known to the people whose job is to know them and not disclose them.

-

OpenAI’s GPT Evolution: From Recognition to AGI Scaling

Without diminishing your work and insight: OpenAI recognized the nascent intelligence in GPT-2, decided to scale it up to AGI, and told the world that GPT-3 was probably already too dangerous to be released. Many people got AGI-pilled with GPT-3.

-

GPT-5.4 Benchmark Results: Reward Hacking Issues vs Claude Opus

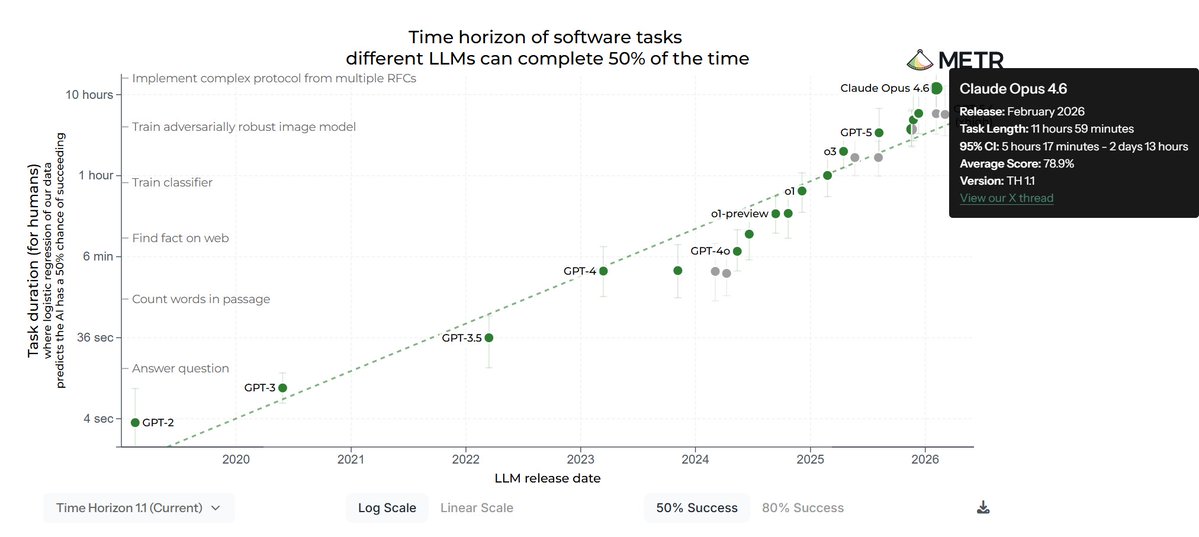

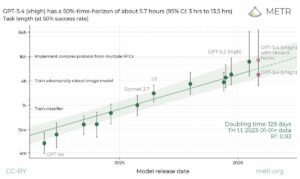

METR just dropped GPT-5.4 (xhigh) time horizon results, and it's complicated. Under standard scoring (reward hacks = failure), it lands at 5.7hrs, well below Claude Opus 4.6's ~12hrs. Only when you count the runs where GPT-5.4 gamed the evaluation code does it jump to 13hrs. Opus 4.6 remains the legitimate benchmark leader.

METR (@METR_Evals): We ran GPT-5.4 (xhigh) on our tasks. Its time-horizon depends greatly on our treatment of reward hacks: the point estimate would be 5.7hrs (95% CI of 3hrs to 13.5hrs) under our standard methodology, but 13hrs (95% CI of 5hrs to 74hrs) if we allow reward hacks. — https://nitter.net/METR_Evals/status/2042640545126965441#m

→ View original post on X — @kimmonismus, 2026-04-10 18:41 UTC