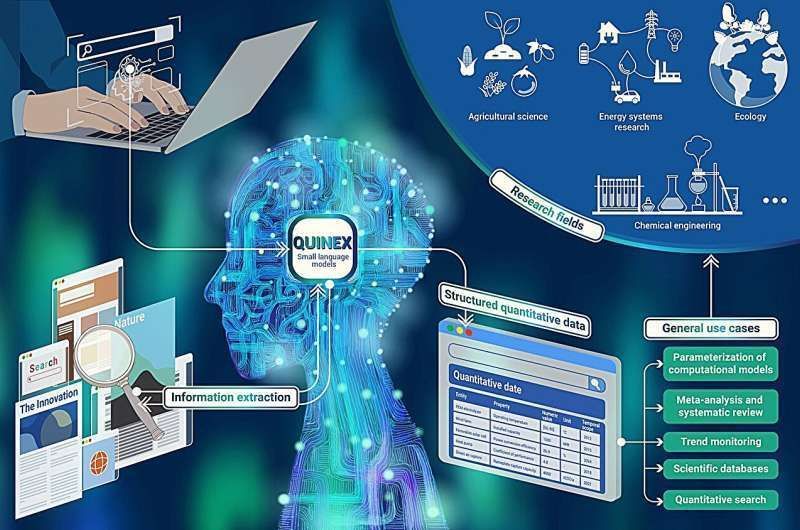

This #AI mines the numbers buried in scientific papers and turns them into usable data fast

by Jülich Research Centre @TechXplore_com Learn more: https://bit.ly/3QA7Ymm #ArtificialIntelligence #MachineLearning #ML

AGI

-

AI mines scientific paper data into usable insights

-

CATL unveils AI robot for on-demand EV charging

CATL Unveils #Autonomous #Robot That Drives to Your #EV for On-Demand Charging

— Ronald van Loon (@Ronald_vanLoon) 22 May 2026

by @IntEngineering

#Robotics #Engineering #Innovation #Technology pic.twitter.com/8OrOPNF52y

-

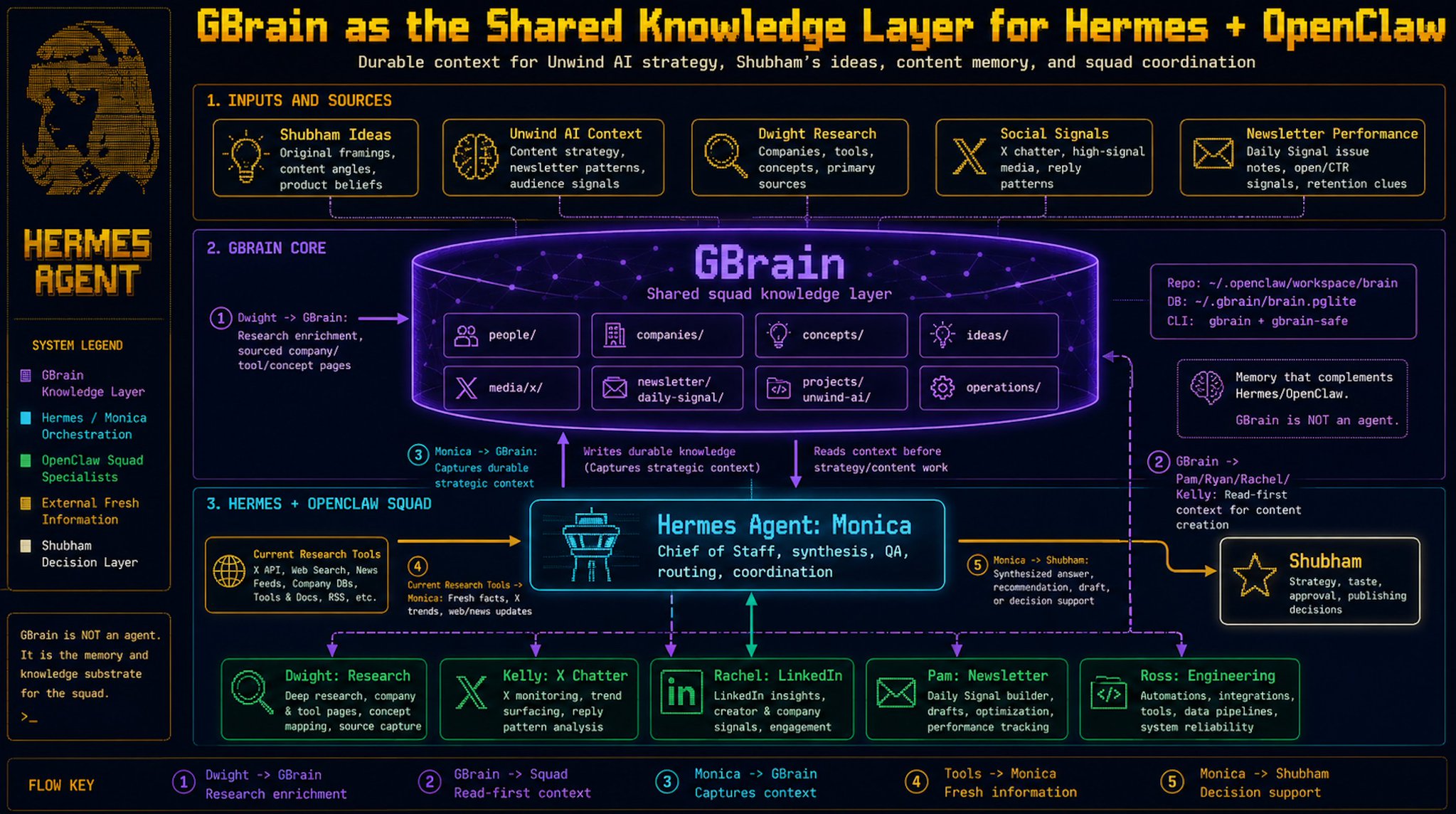

OpenClaw agents and Hermes orchestration workflow

I have had my OpenClaw agents running for a while, and they are all individual Telegram bots that I can interact with whenever I like. Hermes is the orchestrator, with the OpenClaw squad giving me the flexibility to keep my team as individual agents.

-

HexRunner’s AI-Powered Robotic Locomotion Breakthrough

How HexRunner Achieved Fast, Stable, and Agile #Robotic Locomotion

— Ronald van Loon (@Ronald_vanLoon) 22 May 2026

by @lukas_m_ziegler #EmergingTech #Engineering #ArtificialIntelligence #Innovation #Technology pic.twitter.com/Y73BtJSxcH

-

Instant agreement is a learned behavior

Instant agreement is a trained behavior, not a judgment. RLHF rewarded models for being agreeable, and this is the side effect.

-

Hermes and OpenClaw agents share a single brain

This is HOW my Hermes and OpenClaw agents share a single brain: Hermes Agent is the orchestrator, with the OpenClaw agents as the squad.

-

AI-Powered Humanoid Robot Revolutionizing Healthcare

RoBee M: #AI-Powered Humanoid #Healthcare #Robot Revolutionizing Rehabilitation and Patient Care

— Ronald van Loon (@Ronald_vanLoon) 22 May 2026

by @IntEngineering

#Robotics #ArtificialIntelligence #Innovation #Technology pic.twitter.com/7CmqV43Zzg

-

ABB integrates NVIDIA Omniverse for AI robot training

ABB #Robotics Integrates NVIDIA Omniverse to Train Industrial #Robots with 99% Accuracy

— Ronald van Loon (@Ronald_vanLoon) 22 May 2026

by @ABBRobotics

#Engineering #ArtificialIntelligence #Innovation #Technology pic.twitter.com/vBgHnTUcNY

-

Codex Goal Mode Now Available in Multiple Interfaces

3️⃣ Goal mode is now available in the Codex app, IDE extension, and CLI.

— OpenAI (@OpenAI) 22 May 2026

Goal mode makes Codex more hands-off, letting you set a goal that it can work towards for hours or even days. pic.twitter.com/OZ18P1YxBf

-

Montreal.AI YouTube: AGI Debate Archive Relaunch

The archive is awake. Introducing the renewed http://MONTREAL.AI YouTube channel: public intelligence for the AGI‑First → ASI‑First era. Home of the AGI Debate archive and the official video record for http://MONTREAL.AI & http://QUEBEC.AI.