AGI Jobs will alter knowledge work at the substrate level—one of those rare inflection points where the very grammar of work is rewritten. #AIAgents #AGIJobs #Jobs

→ View original post on X — @ceobillionaire, 2026-04-06 14:47 UTC

Global AI News Aggregator


JUST IN: Sam Altman says AI is advancing so fast that America needs a “new social contract”

→ View original post on X — @montreal_ai, 2026-04-06 14:46 UTC



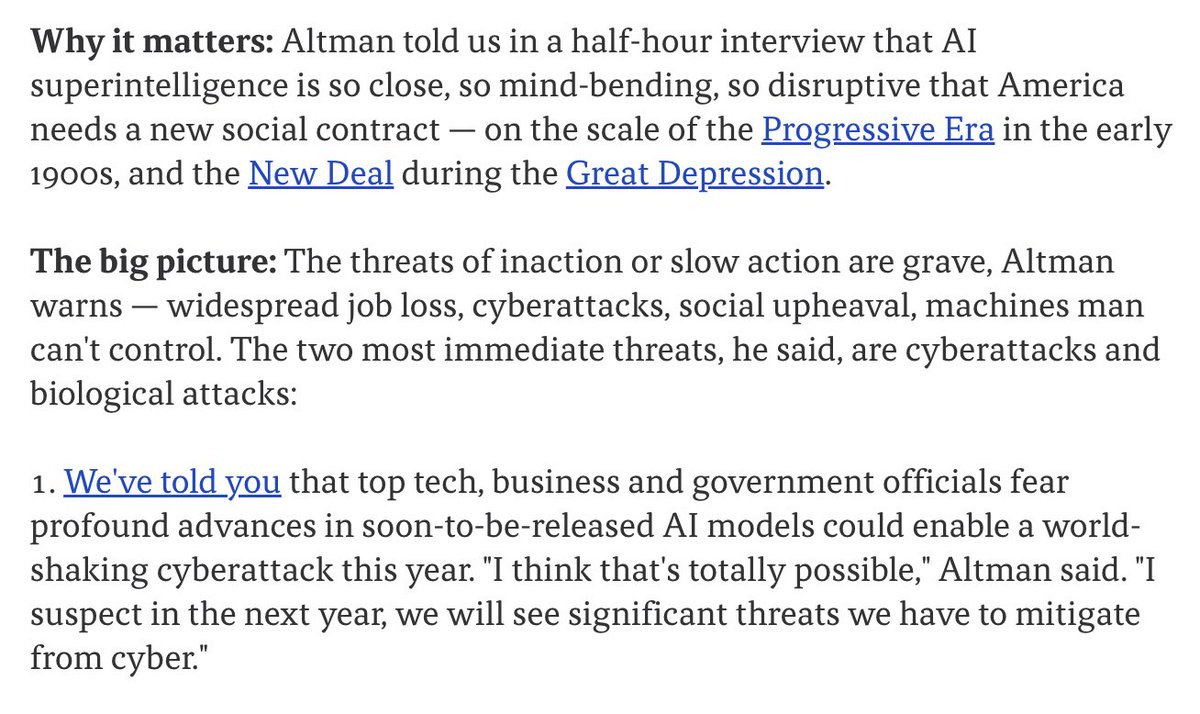

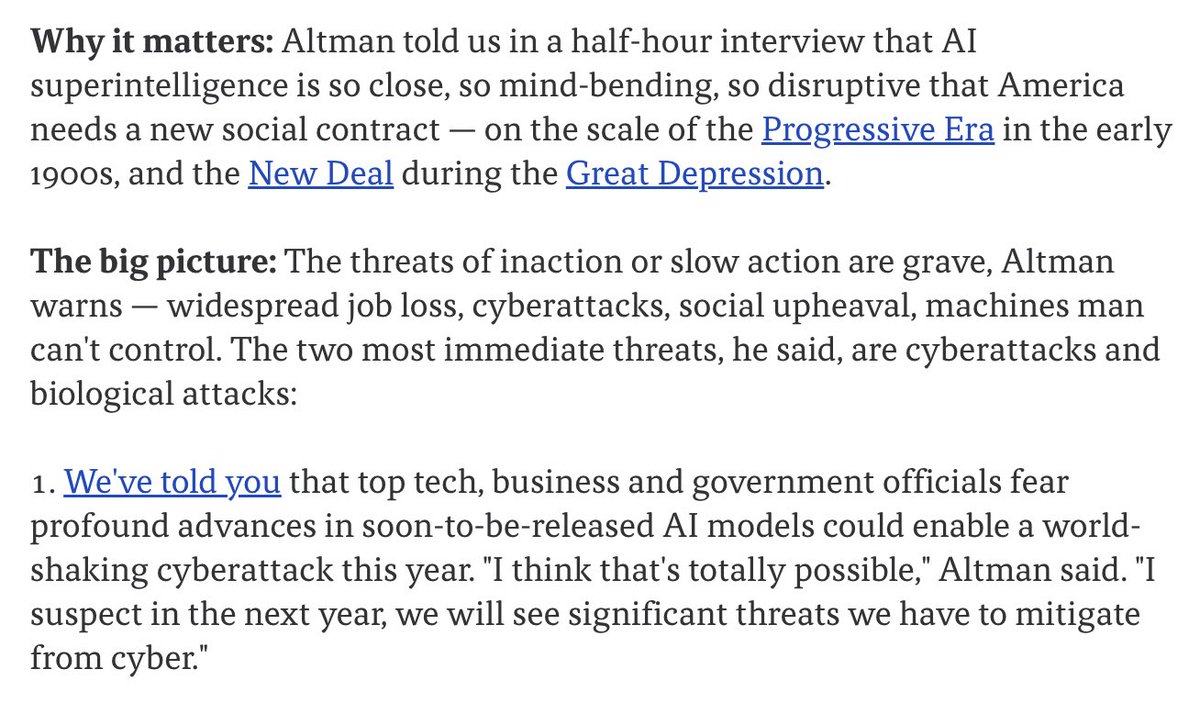

I don't know what Sam Altman saw internally at OpenAI, but it seems that, according to their definition, AGI is here, and superintelligence is incredibly close. AI models that independently conduct scientific research and find novel solutions are already here, and their internal model appears to surpass everything seen before.

Chubby♨️ (@kimmonismus):

Holy moly: Sam Altman told Axios in a half-hour interview that AI superintelligence is so close, so mind-bending, so disruptive that America needs a new social contract.

- It's on the scale of the Progressive Era in the early 1900s, and the New Deal during the Great Depression.
- Altman warns: widespread job loss, cyberattacks, social upheaval, machines man can't control
- Soon-to-be-released AI models could enable a world-shaking cyberattack this year. "I think that's totally possible," Altman said. "I suspect in the next year, we will see significant threats we have to mitigate from cyber."

https://nitter.net/kimmonismus/status/2041126936097812598#m

→ View original post on X — @kimmonismus, 2026-04-06 13:48 UTC


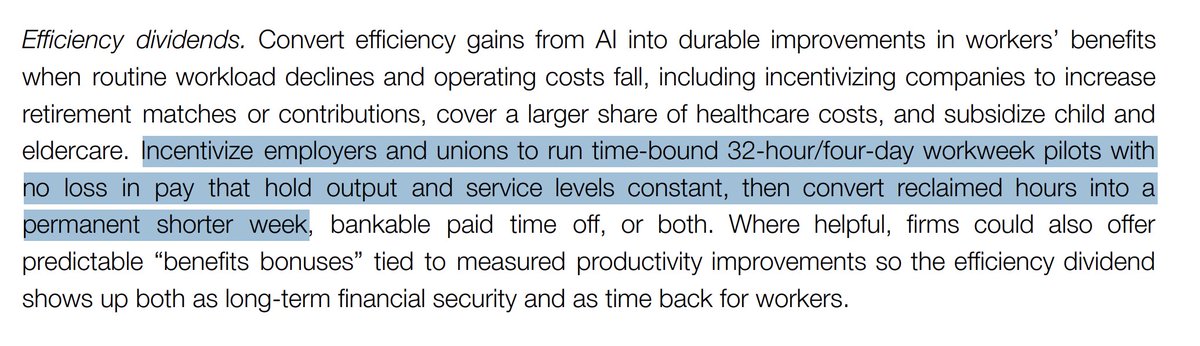

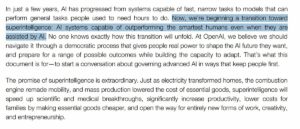

Update: OpenAI officially states they now transition into superintelligence: nitter.net/kimmonismus/status/204…

Chubby♨️ (@kimmonismus):

Looks like OpenAI reached Superintelligence.

OpenAI: "Now, we’re beginning a transition toward superintelligence: AI systems capable of outperforming the smartest humans even when they are assisted by AI."

OpenAI just published a 13-page policy blueprint for the "Intelligence Age", proposing a Public Wealth Fund, 32-hour workweek pilots, portable benefits, a formal "Right to AI," and tax reforms to offset shrinking payroll revenue as automation scales. The document frames superintelligence not as a distant scenario *but an active transition requiring New Deal-level ambition*: new safety nets, containment playbooks for dangerous models, and international coordination modeled on aviation safety institutions.

Here are OpenAI's suggestions (tl;dr):

Open Economy:

- Give workers a formal voice in AI deployment decisions
- Microgrants and "startup-in-a-box" for AI-native entrepreneurs
- Treat AI access as basic infrastructure (like electricity)
- Shift tax base from payroll toward capital gains and corporate income
- Public Wealth Fund: every citizen gets a stake in AI growth
- Fast-track energy grid expansion via public-private partnerships
- 32-hour workweek pilots, better benefits from productivity gains
- Auto-scaling safety nets triggered by displacement metrics
- Portable benefits untied from employers
- Invest in care economy as a transition path for displaced workers
- Distributed AI-enabled labs to accelerate scientific discovery

Resilient Society:

- Safety tools for cyber, bio, and large-scale risks
- AI trust stack: provenance, verification, audit logs
- Competitive auditing market for frontier models
- Containment playbooks for dangerous released models
- Frontier AI companies adopt Public Benefit Corporation structures
- Codified rules and auditing for government AI use
- Democratic public input on AI alignment standards
- Mandatory incident and near-miss reporting
- International AI safety network for joint evaluations and crisis coordination

Notably, OpenAI calls for stricter controls only on a narrow set of frontier models while keeping the broader ecosystem open, a clear attempt to position regulation as targeted, not industry-wide. They're backing it with up to $100K in fellowships and $1M in API credits for policy research, plus a new DC workshop opening in May.

https://nitter.net/kimmonismus/status/2041130939175284910#m

→ View original post on X — @kimmonismus, 2026-04-06 12:36 UTC


Looks like OpenAI reached Superintelligence.

OpenAI: "Now, we’re beginning a transition toward superintelligence: AI systems capable of outperforming the smartest humans even when they are assisted by AI."

OpenAI just published a 13-page policy blueprint for the "Intelligence Age", proposing a Public Wealth Fund, 32-hour workweek pilots, portable benefits, a formal "Right to AI," and tax reforms to offset shrinking payroll revenue as automation scales. The document frames superintelligence not as a distant scenario *but an active transition requiring New Deal-level ambition*: new safety nets, containment playbooks for dangerous models, and international coordination modeled on aviation safety institutions.

Here are OpenAI's suggestions (tl;dr):

Open Economy:

- Give workers a formal voice in AI deployment decisions
- Microgrants and "startup-in-a-box" for AI-native entrepreneurs
- Treat AI access as basic infrastructure (like electricity)
- Shift tax base from payroll toward capital gains and corporate income
- Public Wealth Fund: every citizen gets a stake in AI growth
- Fast-track energy grid expansion via public-private partnerships
- 32-hour workweek pilots, better benefits from productivity gains
- Auto-scaling safety nets triggered by displacement metrics
- Portable benefits untied from employers
- Invest in care economy as a transition path for displaced workers
- Distributed AI-enabled labs to accelerate scientific discovery

Resilient Society:

- Safety tools for cyber, bio, and large-scale risks
- AI trust stack: provenance, verification, audit logs
- Competitive auditing market for frontier models
- Containment playbooks for dangerous released models
- Frontier AI companies adopt Public Benefit Corporation structures
- Codified rules and auditing for government AI use
- Democratic public input on AI alignment standards
- Mandatory incident and near-miss reporting
- International AI safety network for joint evaluations and crisis coordination

Notably, OpenAI calls for stricter controls only on a narrow set of frontier models while keeping the broader ecosystem open, a clear attempt to position regulation as targeted, not industry-wide. They're backing it with up to $100K in fellowships and $1M in API credits for policy research, plus a new DC workshop opening in May.

Chubby♨️ (@kimmonismus):

Holy moly: Sam Altman told Axios in a half-hour interview that AI superintelligence is so close, so mind-bending, so disruptive that America needs a new social contract.

- It's on the scale of the Progressive Era in the early 1900s, and the New Deal during the Great Depression.
- Altman warns: widespread job loss, cyberattacks, social upheaval, machines man can't control
- Soon-to-be-released AI models could enable a world-shaking cyberattack this year. "I think that's totally possible," Altman said. "I suspect in the next year, we will see significant threats we have to mitigate from cyber."

https://nitter.net/kimmonismus/status/2041126936097812598#m

→ View original post on X — @kimmonismus, 2026-04-06 12:28 UTC


Holy moly: Sam Altman told Axios in a half-hour interview that AI superintelligence is so close, so mind-bending, so disruptive that America needs a new social contract.

- It's on the scale of the Progressive Era in the early 1900s, and the New Deal during the Great Depression.
- Altman warns: widespread job loss, cyberattacks, social upheaval, machines man can't control
- Soon-to-be-released AI models could enable a world-shaking cyberattack this year. "I think that's totally possible," Altman said. "I suspect in the next year, we will see significant threats we have to mitigate from cyber."

Mike Allen (@mikeallen):

🚨🚨@sama tells me he feels such URGENCY about the power of coming AI models that @OpenAI is unveiling a New Deal for superintelligence – ideas to wake up DC

He says AI will soon be so mindbending that we need a new social contract

👇Altman's top 6 ideas axios.com/2026/04/06/behind-…

https://nitter.net/mikeallen/status/2041099089031356468#m

→ View original post on X — @kimmonismus, 2026-04-06 12:12 UTC


Jack Dorsey says a company is a kind of mini AGI. It's already an intelligence you can query directly. But most companies are badly architected, lossy intelligences.

vitrupo (@vitrupo), April 6, 2026

→ View original post on X — @ceobillionaire, 2026-04-06 11:02 UTC


🚨🚨 @sama tells me he feels such URGENCY about the power of coming AI models that @OpenAI is unveiling a New Deal for superintelligence – ideas to wake up DC

He says AI will soon be so mindbending that we need a new social contract

👇 Altman's top 6 ideas axios.com/2026/04/06/behind-…

→ View original post on X — @ceobillionaire, 2026-04-06 10:21 UTC


My bot can read the entire AI industry and synthesize it. You can't. At all. You are slop.


And my AI is way better than anything you have access to.