Our congrats to @harvey and the team, including @nikogrupen, @gabepereyra, and @ItsJulioPereyra, for open-sourcing Harvey's long-horizon legal agent benchmark, which is built specifically to evaluate and improve agent capabilities for supporting legal work. Read their blog:

@snorkelai


Data Cleaning Infrastructure for AI Builders Discussed in Interview


AI Industry Insights: The Central Role of Data


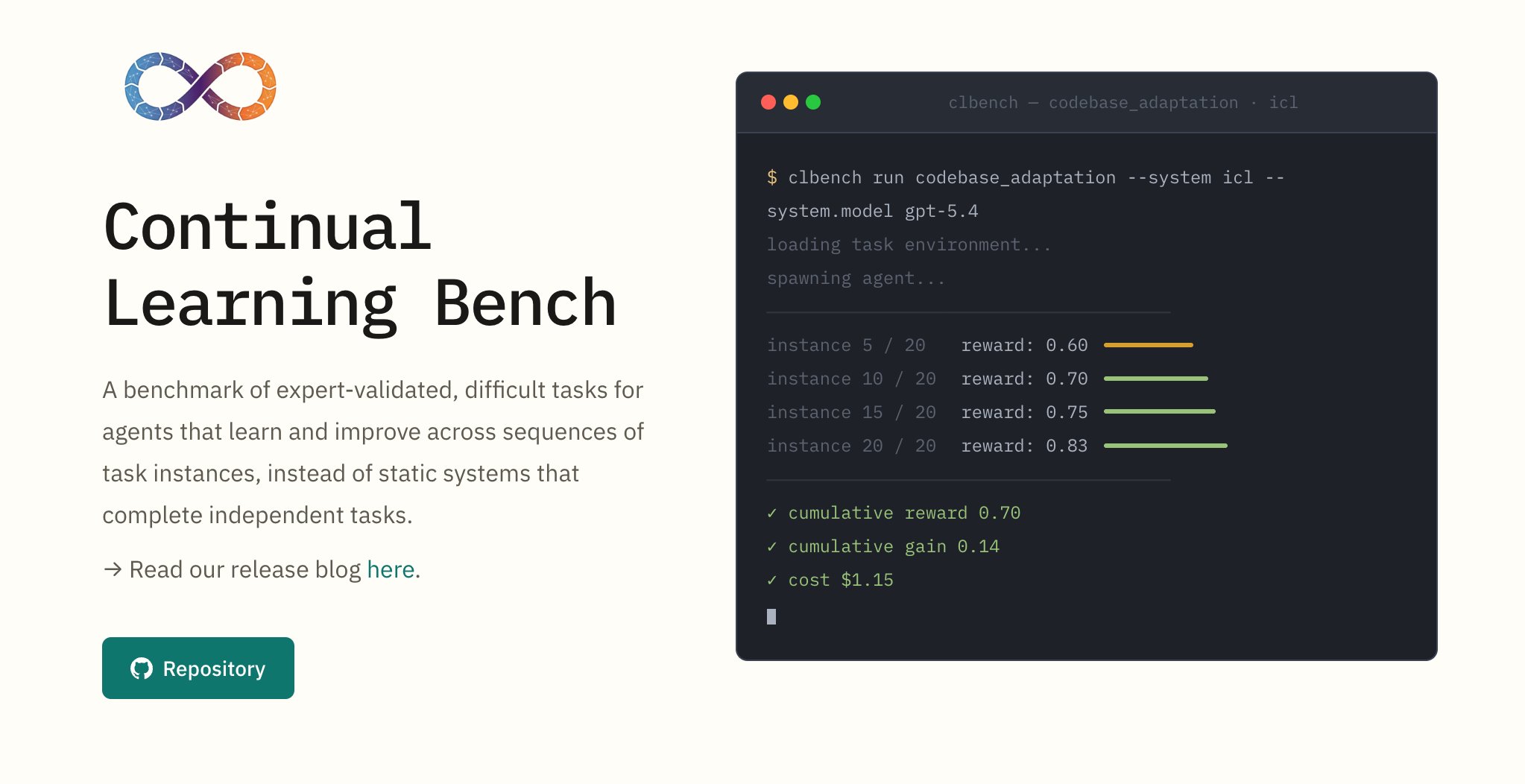

Continual Learning Bench 1.0 is out, co-authored by @UCBerkeley & @SnorkelAI and funded through Open Benchmark Grants: a new benchmark for measuring whether AI systems actually improve over time.

Olmix Framework for Data Mixing Throughout LM Development


Our thanks to everyone who came out to hear @MayeeChen dive into her paper "Olmix: A Framework for Data Mixing Throughout LM Development." If you missed it live, watch the replay: https://youtube.com/watch?v=sFIPstz0cTc…

Creating AI Benchmark from Real-World Engineering Problems


“We can create the best benchmark out there that should dictate model development for the next year,” says @alexgshaw of @LaudeInstitute. The goal: get top engineers to contribute the hardest real-world problems they’ve actually faced in their work. They’re actively looking for… pic.twitter.com/kIF0PukCAT

— Snorkel AI (@SnorkelAI) April 28, 2026

BigLaw Bench: Evaluating AI Legal Research Capabilities


We recently partnered with @harvey on BigLaw Bench: Research, evaluating how well models handle real end-to-end case law research, identifying the right authorities, applying legal reasoning, and delivering answers you can actually use. It also shows where things still break and


ICLR Brazil 2026 Wrap-up: Research Collaborations and Perspectives


That’s a wrap on @iclr_conf Brazil 2026! Great connecting with so many of you at the DFAI happy hour, conference, and workshops! Thanks to the many workshop folks and researchers we collaborated and presented with, and others who shared their work and perspectives on frontier


Open Benchmark Grants Initiative Funds Frontier AI Research


We're proud to have supported this work through Open Benchmark Grants, our initiative funding the next wave of frontier benchmarks!


Geospatial Reasoning and the Future of AI: Interview with Rezaur Rahman


Our thanks to Rezaur Rahman for stepping away from a busy NEXT to join Chris Sniffen for a great conversation on geospatial reasoning and the future of AI. Stay tuned for the full interview!


RLVR Effectiveness in Low Data and Compute Regimes Study


Our MLSys 2026 paper is live on arXiv: “Learning from Less: Measuring the Effectiveness of RLVR in Low Data and Compute Regimes.” @realjustinbauer @Walshe_tech @pham_derek @harit_v @ArminPCM @fredsala and @paroma_varma present a comprehensive empirical study of open-source SLMs


Snorkel Workshops on Data Quality and Agents at ICLR


Big week ahead at @iclr_conf Brazil🇧🇷 Don’t miss where Snorkel will be, from workshops with @fredsala and @vincentsunnchen on data quality and agents to a @DFAI_Community Gathering on Saturday. Full lineup below👇 https://t.co/ISe7mjN7kO

— Snorkel AI (@SnorkelAI) April 20, 2026