Everyone wants sexy AI demos. Almost nobody wants to do the dull engineering (retrieval, evals, memory, governance, etc.) that makes them work. That’s the real enterprise AI gap. (My @InfoWorld column: https://infoworld.com/article/4157506/ai-has-to-be-dull-before-it-can-be-sexy.html…)

@mjasay

-

Enterprise AI Success Requires Unglamorous Engineering Work

-

April’s Cruelty: Utah Defies T.S. Eliot’s Famous Quote

T.S. Eliot wrote that "April is the cruelest month," but so far it's treating Utah fine.

-

Tom’s Arrival Makes the Day Even Better

And now my day just got even better. Tom has entered the chat!

-

From Team Leader to Individual Contributor: Finding Joy in New Roles

Cool role! Though it must be bittersweet to go from leading a team to being an IC (I suspect it will be sweeter by the day. I love leading teams, but being an IC has its own joys). Thanks for being someone I admire, if from afar.

-

AI Splits Enterprises Into Fast and Slow Teams

"Instead AI is splitting enterprises into fast-learning and slow-learning teams and is rewarding organizations that redesign work, govern risk, and turn lower software costs into more software, not less." – @mjasay infoworld.com/article/415157…

-

Richmond Alake Teaches Multi-Agent Systems at London's ExCeL Centre

London has many wonderful things. The ExCeL Centre isn't one of them. BUT…watching @richmondalake teach developers how to build their first multi-agent system *is* awesome, no matter where it's taking place (or however awkwardly shaped the room 😬).

-

LiteLLM Supply Chain Attack: Attacker's Bug Led to Early Discovery of Credential Theft

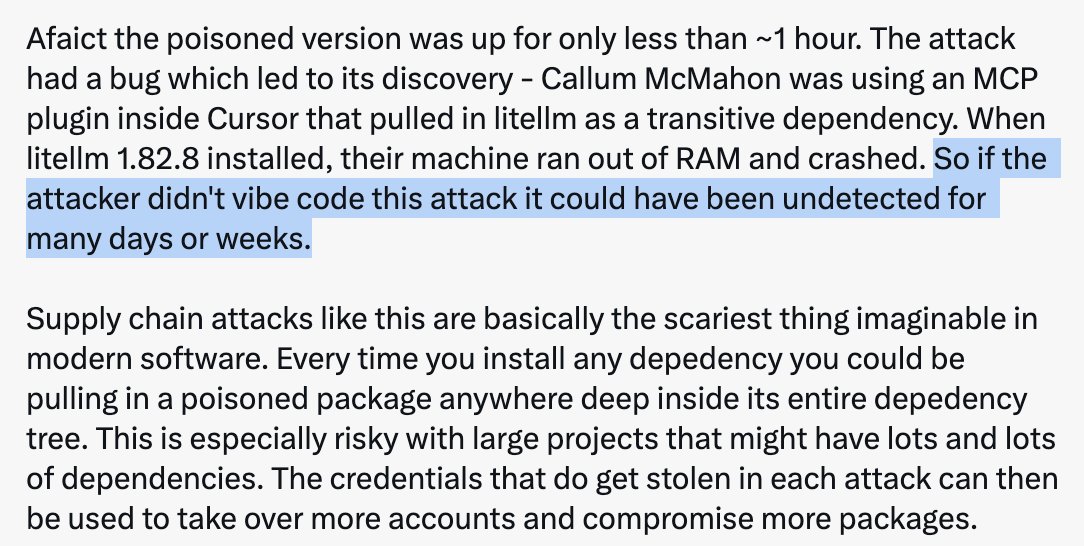

When vibe coding is an unalloyed good: your hacker vibe coded their way to a bug that limited the efficacy of their attack. 😅😮‍💨 (But still very serious.)

Andrej Karpathy (@karpathy)

Software horror: litellm PyPI supply chain attack.

Simple `pip install litellm` was enough to exfiltrate SSH keys, AWS/GCP/Azure creds, Kubernetes configs, git credentials, env vars (all your API keys), shell history, crypto wallets, SSL private keys, CI/CD secrets, database passwords.

LiteLLM itself has 97 million downloads per month which is already terrible, but much worse, the contagion spreads to any project that depends on litellm. For example, if you did `pip install dspy` (which depended on litellm>=1.64.0), you'd also be pwnd. Same for any other large project that depended on litellm.

Afaict the poisoned version was up for only less than ~1 hour. The attack had a bug which led to its discovery – Callum McMahon was using an MCP plugin inside Cursor that pulled in litellm as a transitive dependency. When litellm 1.82.8 installed, their machine ran out of RAM and crashed. So if the attacker didn't vibe code this attack it could have been undetected for many days or weeks.

Supply chain attacks like this are basically the scariest thing imaginable in modern software. Every time you install any dependency you could be pulling in a poisoned package anywhere deep inside its entire dependency tree. This is especially risky with large projects that might have lots and lots of dependencies. The credentials that do get stolen in each attack can then be used to take over more accounts and compromise more packages.

Classical software engineering would have you believe that dependencies are good (we're building pyramids from bricks), but imo this has to be re-evaluated, and it's why I've been so growingly averse to them, preferring to use LLMs to "yoink" functionality when it's simple enough and possible. — https://nitter.net/karpathy/status/2036487306585268612#m
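To make the "deep inside its entire dependency tree" point concrete, here's a minimal Python sketch that walks a dependency graph and lists every path from a top-level project to a given package, plus a naive check showing why an open-ended requirement like `litellm>=1.64.0` would admit the poisoned 1.82.8 release. The graph is hand-written and hypothetical (`my-app` and `some-mcp-plugin` are made-up names; only the dspy-to-litellm edge comes from the post), and the version parser assumes plain dotted integers, unlike real resolvers, which follow PEP 440.

```python
from collections import deque

# Hypothetical dependency graph: package -> direct dependencies.
# Only the dspy -> litellm edge comes from the post; the rest is illustrative.
EXAMPLE_GRAPH: dict[str, list[str]] = {
    "my-app": ["dspy", "some-mcp-plugin"],
    "dspy": ["litellm"],
    "some-mcp-plugin": ["litellm"],
    "litellm": [],
}


def find_dependency_paths(graph: dict[str, list[str]], root: str, target: str) -> list[list[str]]:
    """Breadth-first search returning every path from root to target."""
    paths: list[list[str]] = []
    queue = deque([[root]])
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            paths.append(path)
            continue
        for dep in graph.get(node, []):
            if dep not in path:  # skip circular dependencies instead of looping forever
                queue.append(path + [dep])
    return paths


def parse_version(version: str) -> tuple[int, ...]:
    """Naive parser: assumes plain dotted integers like '1.82.8' (no PEP 440 suffixes)."""
    return tuple(int(part) for part in version.split("."))


def meets_lower_bound(installed: str, minimum: str) -> bool:
    """True if `installed` satisfies an open-ended '>=minimum' requirement."""
    return parse_version(installed) >= parse_version(minimum)


if __name__ == "__main__":
    # Every route by which my-app pulls in litellm, directly or transitively.
    print(find_dependency_paths(EXAMPLE_GRAPH, "my-app", "litellm"))
    # → [['my-app', 'dspy', 'litellm'], ['my-app', 'some-mcp-plugin', 'litellm']]

    # An open-ended lower bound such as litellm>=1.64.0 accepts 1.82.8.
    print(meets_lower_bound("1.82.8", "1.64.0"))  # → True
```

In a real environment you'd build the graph from installed package metadata (for example, `importlib.metadata.requires()` returns a distribution's declared requirement strings) rather than by hand. Two paths to the same package is exactly the "contagion" problem: removing one direct dependency doesn't remove the exposure.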

-

AI Adoption Divergence Between Financial Institutions

Was lucky to discuss AI adoption today with two technical leaders: one at a large hedge fund and the other at a major retail bank. The bank lags the hedge fund, which isn't surprising. Different risk profile. What *was* surprising? How *wildly* divergent their AI adoption is.

-

Security vs Speed: Oracle’s AI Advantage for Enterprise Needs

Both care about security, but for the hedge fund, speed trumps many other considerations. For the bank, speed is always second to data security, customer privacy, etc. This is where a company like Oracle will win: the AI a dev wants with the safety/security their company requires.

-

AI Agent Security Risks: An Avalanche Metaphor for Overpermissioned Agents

I'm smiling, but this was taken ~30 mins after I'd been buried in an avalanche (snapping a ski in half). IT doesn't pose the same bodily risks, but the way we're giving agents the same levels of access as humans is creating conditions ripe for a security "avalanche."

Oso (@osoHQ)

A metaphor from @mjasay on agent security: Overpermissioned humans = a buried weak snow layer. Add AI agents = avalanche. We've been ignoring the snowpack for years. — https://nitter.net/osoHQ/status/2036089236131029200#m
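One way to read "stop giving agents the same levels of access as humans" in code: a minimal Python sketch of least-privilege delegation, where an agent receives only the intersection of the permissions its task needs and the permissions of the human it acts for, never a copy of the human's full grant. `Principal`, `delegate_to_agent`, `is_allowed`, and the permission strings are all hypothetical illustrations; this is not Oso's API or any particular product's authorization model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Principal:
    """Hypothetical identity (human or agent) with a flat set of permission strings."""
    name: str
    permissions: frozenset[str]


def delegate_to_agent(human: Principal, agent_name: str, task_scope: set[str]) -> Principal:
    """Give the agent only what its task needs, capped by what the human already holds."""
    granted = frozenset(task_scope) & human.permissions
    return Principal(name=agent_name, permissions=granted)


def is_allowed(principal: Principal, action: str) -> bool:
    """Simple membership check; real systems would also consider resources and context."""
    return action in principal.permissions


if __name__ == "__main__":
    # Hypothetical, broadly permissioned human: the "buried weak snow layer."
    alice = Principal(
        "alice",
        frozenset({"repo:read", "repo:write", "prod-db:read", "prod-db:write", "billing:admin"}),
    )
    # The agent's task only needs repo access; "deploy:prod" is dropped because alice lacks it.
    agent = delegate_to_agent(alice, "code-review-agent", {"repo:read", "repo:write", "deploy:prod"})
    print(sorted(agent.permissions))  # → ['repo:read', 'repo:write']
    print(is_allowed(agent, "prod-db:write"))  # → False
```

The design choice is that the agent's grant is derived per task rather than inherited wholesale, so an overpermissioned human doesn't automatically produce an overpermissioned agent.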