Matthew Gallagher used a suite of AI tools to create a telehealth company that generated $401 million in sales in its first full year. It's a great example of what I call The New Rules of Wealth. I'm launching a @MasterClass today so more people can learn how to use AI to rapidly launch and scale businesses. If you know someone who could benefit from this knowledge, feel free to send them this link: masterclass.com/erikb

@erikbryn

-

Enterprise AI Playbook: Key Lessons from 51 Successful Deployments

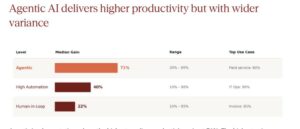

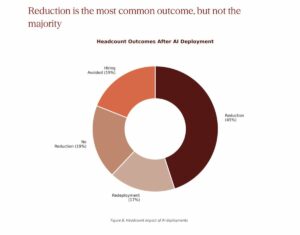

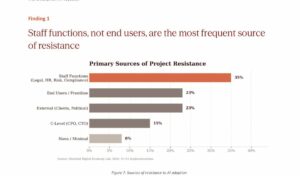

What do successful deployments of AI have in common? It was awesome working with Elisa Pereira and @AGraylin on this research. We studied 51 companies and summarized the results. Alvin has a nice summary below. Check out digitaleconomy.stanford.edu/… for the full report.

Alvin Wang Graylin (@AGraylin):

New Research💡: “The #Enterprise #AI Playbook: Lessons from 51 Successful Deployments”

Excited to share new research from Stanford @DigEconLab, which I co-authored with @erikbryn and Elisa Pereira. We spent 5 months interviewing executives across 41 organizations, 9 industries, and 7 countries, focusing exclusively on AI deployments that actually delivered measurable value. Not hype. Not predictions. What’s working right now, and why.

A few findings that challenged even our assumptions:

The hard part isn’t the AI. 77% of the toughest challenges were invisible costs: change management, data quality, process redesign. Technology was consistently described as the easiest part.

Same use case, wildly different timelines. One company deployed AI customer support in weeks. Another took years. Same models. The difference was always the #organization: its #leadership, processes, and willingness to fail.

#Agentic AI works, but most firms haven’t tried it yet. Only 20% of our cases were agentic, but they delivered 71% median gains vs. 40% for high-automation. This gap will widen fast.

The model is increasingly a #commodity. For 42% of implementations, model choice was fully interchangeable. The durable advantage is in orchestration, data, and process, not the foundation model.

With productivity increases, headcount #reduction is common (45%), but not the majority outcome. Redeployment, hiring avoidance, and acceleration strategies accounted for 55% of cases.

🚨 The window for experimentation is closing. This is no longer a question of whether AI delivers value. It’s whether organizations can evolve fast enough to capture it, and whether leaders will take responsibility for smoothing the transition for workers and communities along the way.

Full report (free): digitaleconomy.stanford.edu/… @StanfordHAI

Source: https://nitter.net/AGraylin/status/2039729157676921185#m

-

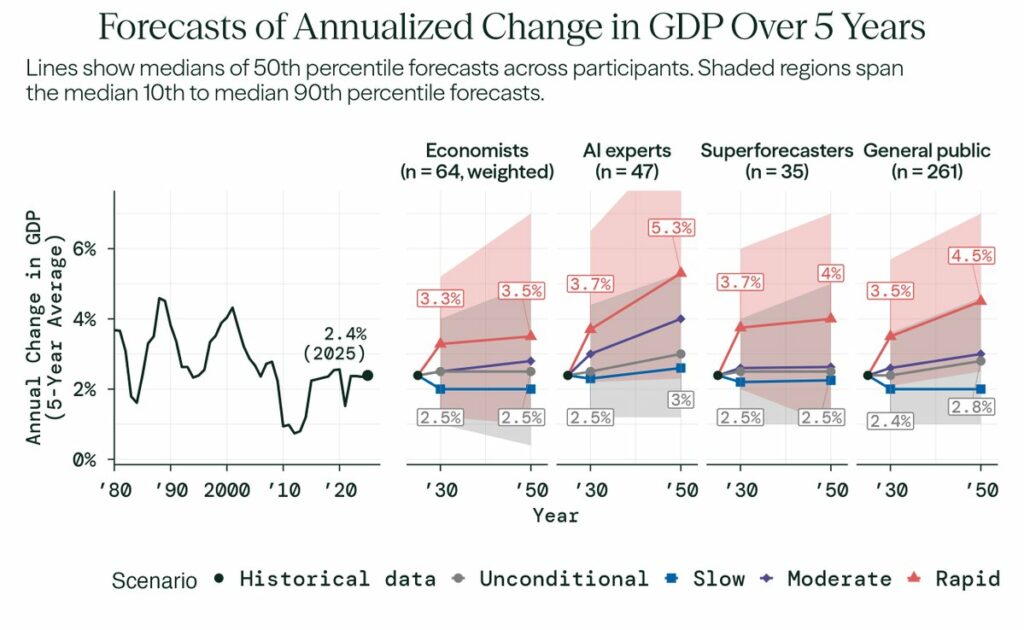

Experts underestimate AI’s potential economic growth impact

Fascinating work on how different groups of experts expect GDP to change in the coming years. Kudos to @connacher_ , @pawtrammell , @BasilHalperin of the Stanford @DigEconLab and the others who did this data collection and analysis. @PTetlock always does such amazing work. My take? Most of the experts surveyed (I was one of them) are not optimistic enough about growth.

Forecasting Research Institute (@Research_FRI):

We completed the most comprehensive study of how economists and AI experts think AI will affect the U.S. economy. They predict major AI progress, but no dramatic break from economic trends: GDP growth rates similar to today's and a moderate decline in labor force participation.

However, when asked to consider what would happen in a world with extremely rapid progress in AI capabilities by 2030, they predict significant economic impacts by 2050:

• Annualized GDP growth of 3.5% (compared to 2.4% in 2025)
• A labor force participation rate of 55% (roughly 10 million fewer jobs)
• 80% of wealth held by the top 10% (highest since 1939)

🧵 Here's what we found:

Source: https://nitter.net/Research_FRI/status/2038965685431259520#m

-

Erik Brynjolfsson discusses AI’s impact on future of work

At the Hoover Institution's recent AI and Jobs event, Stanford @DigEconLab Director @ErikBryn shared his perspective on how AI's growing capabilities should shape the future of work. Watch the full panel discussion here: hoover.org/events/productivi…

-

Agentic AI Intelligence Explosion Through Socially Aggregated Cognition

Our new essay is out in Science: "Agentic AI and the Next Intelligence Explosion"

For decades, the AI "singularity" has been imagined as a single, godlike mind bootstrapping itself to omniscience. In this piece with the inimitable Benjamin Bratton (@bratton) and Blaise Agüera y Arcas (@blaiseaguera), we argue this vision is wrong in its most fundamental assumption.

Every prior intelligence explosion (primate sociality, human language, writing, institutions) wasn't an upgrade to individual cognitive hardware. It was the emergence of a new socially aggregated unit of cognition. AI is extending this sequence, not breaking from it.

The evidence is already inside the models themselves. In recent work, we showed that frontier reasoning models like DeepSeek-R1 don't improve by "thinking longer"; they spontaneously simulate internal multi-agent debates, what we call a "society of thought" (lnkd.in/guNfRtXh). Reinforcement learning for accuracy alone causes models to rediscover what epistemology and cognitive science have long suggested: robust reasoning is a social process, even within a single mind.

This opens a vast design space. A century of research on team composition, hierarchy, role differentiation, and structured disagreement has barely been brought to bear on AI reasoning. The toolkits of organizational science become blueprints for next-generation AI.

Outside the model, we've entered the era of human-AI centaurs: composite actors that are neither purely human nor purely machine. Agents that fork, differentiate, recombine. Recursive societies of thought that expand when complexity demands and collapse when problems resolve.

The scaling frontier isn't just bigger models. It's richer social systems, and the institutions to govern them. Just as human societies rely on persistent institutional templates (courtrooms, markets, bureaucracies), scalable AI ecosystems will need digital equivalents. The Founders would have recognized the logic: no single concentration of intelligence should regulate itself.

The intelligence explosion is already here. Not as a singular ascending mind, but as a combinatorial society complexifying: intelligence growing like a city. The question is whether we'll build the social infrastructure worthy of what it's becoming. No mind is an island.

Read it here in Science (science.org/doi/10.1126/scie…) or free on the arXiv (arxiv.org/abs/2603.20639)

-

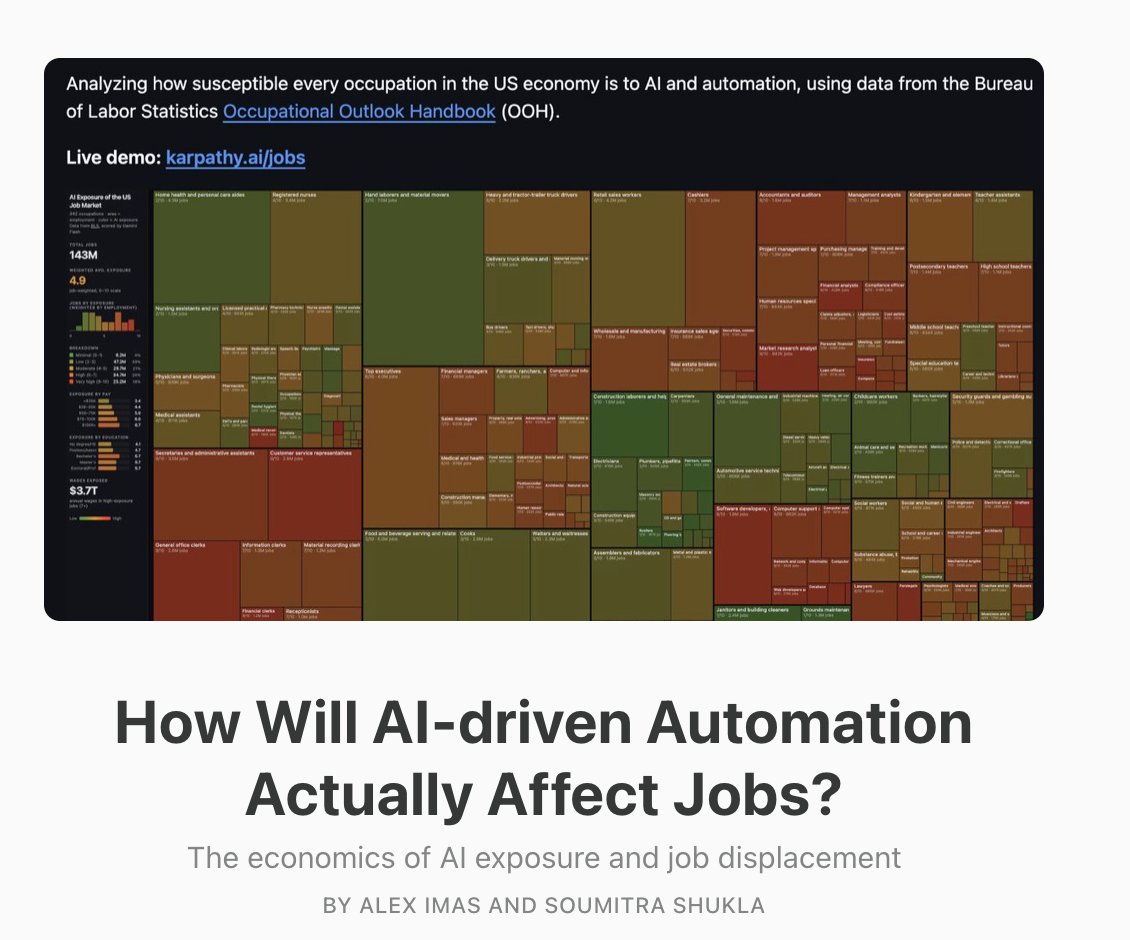

AI Automation Economics: Job Displacement vs Hiring Dynamics

After a brief hiatus, new post with @soumitrashukla9: "How Will AI-driven Automation Actually Affect Jobs? The economics of AI exposure and job displacement"

There has been a lot of discussion in the media, on X, on Substack, etc. about AI-driven displacement. We felt it would be worth working out the actual economics of when AI automation will lead to displacement, versus the exact opposite (more hiring, higher wages).

A short summary🧵: AI "exposure" measures are not meant to predict displacement or job automation. Exposure can lead to job loss, or it can lead to more hiring and higher wages. It all depends on 1) how automated tasks interact with non-automated tasks (to what extent they're complements), 2) how consumer demand in that sector responds to prices (elasticity of consumer demand), and 3) the dimensionality of the job (the number of tasks a job has).

One conclusion: we should be less worried about consultants and more worried about truckers and warehouse workers than we currently are.

Link: substack.com/home/post/p-191…
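
A back-of-the-envelope calculation can make point 2 (demand elasticity) concrete. The sketch below is not the model from the post; it is a textbook simplification that assumes a single-task job, constant-elasticity consumer demand, and prices that fall one-for-one with unit labor cost. Under those assumptions, if AI multiplies output per worker by g, the price falls by a factor of g, quantity demanded rises by g^ε, and labor demand changes by g^(ε−1). The function name and the example numbers are hypothetical.

```python
def labor_demand_ratio(productivity_gain: float, demand_elasticity: float) -> float:
    """Return new labor demand divided by old labor demand.

    Assumptions (illustrative, not taken from the post):
      - demand is constant-elasticity: Q = A * P ** (-demand_elasticity)
      - price is proportional to unit labor cost, so P scales by 1 / productivity_gain
      - labor needed is output divided by productivity: L = Q / productivity
    Combining these gives L_new / L_old = productivity_gain ** (demand_elasticity - 1).
    """
    if productivity_gain <= 0:
        raise ValueError("productivity_gain must be positive")
    return productivity_gain ** (demand_elasticity - 1.0)


if __name__ == "__main__":
    gain = 1.25  # hypothetical: AI raises output per worker by 25%
    for elasticity in (0.5, 1.0, 2.0):
        ratio = labor_demand_ratio(gain, elasticity)
        print(f"elasticity={elasticity}: labor demand changes by {ratio - 1:+.1%}")
```

In this simplified setting, the same productivity gain shrinks employment when demand is inelastic (elasticity below 1), leaves it unchanged at exactly 1, and expands it when demand is elastic (above 1). Points 1 and 3 in the summary, task complementarity and the number of tasks in a job, are not captured here.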

-

Immigration Policy Critical for US AI Competitiveness Against China

If you really want the US to have an AI advantage over China, immigration policy is at least as important as chip controls. Attract the best and brightest. Keep them here. Don't make it easier for them to do AI work in China than in the US. Talent is vital.

Zephyr (@zephyr_z9):

40%-60% of the top researchers at frontier labs are non-US citizens. If they get removed, then American labs will lose the race.

Source: https://nitter.net/zephyr_z9/status/2035759774017667291#m

-

AI Empowers Individuals to Accomplish Work of Entire Teams

In a remarkably short time, AI moved from something most people associated with sci-fi to something that is expanding what individuals can create and build. What’s changing is the scale at which a single person can operate. With the right human + machine combinations, people can now take on work that once required entire teams. We’re still early. I’ve been working on something that explores this shift. Stay in the loop: mstr.cl/erikbrynjolfssoncomi…