As AI agents accelerate coding, what is the future of software engineering? Some trends are clear, such as the Product Management Bottleneck, referring to the idea that we are more constrained by deciding what to build rather than the actual building. But many implications, like

@andrewyng

-

New SGLang Course: Efficient LLM and Image Generation Inference


New course: Efficient Inference with SGLang: Text and Image Generation, built in partnership with LMSys @lmsysorg and RadixArk @radixark, and taught by Richard Chen @richardczl, a Member of Technical Staff at RadixArk.

Running LLMs in production is expensive, and much of that cost comes from redundant computation. This short course teaches you to eliminate that waste using SGLang, an open-source inference framework that caches computation already done and reuses it across future requests. When ten users share the same system prompt, SGLang processes it once, not ten times. The speedups compound quickly, especially when there's a lot of shared context across requests.

Skills you'll gain:

- Implement a KV cache from scratch to eliminate redundant computation within a single request
- Scale caching across users and requests with RadixAttention, so shared context is only processed once
- Accelerate image generation with diffusion models using SGLang's caching and multi-GPU parallelism

Join and learn to make LLM inference faster and more cost-efficient at scale! deeplearning.ai/short-course…

Andrew Ng (@AndrewYNg), April 9, 2026
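To make the "process the shared system prompt once" idea concrete, here is a minimal sketch in plain Python. It is not SGLang's code or API: `PrefixCache` and `Node` are hypothetical names, tokens are just words, and a simple token-level tree stands in for the radix tree of cached key/value tensors that a real engine would keep.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """One cached token; children are the tokens that have followed it."""
    children: dict = field(default_factory=dict)


class PrefixCache:
    """Toy prefix tree (hypothetical): counts how much prefill work is avoided."""

    def __init__(self):
        self.root = Node()
        self.tokens_computed = 0  # tokens that needed fresh computation

    def process(self, tokens):
        """Insert a request; return how many leading tokens were already cached."""
        node = self.root
        reused = 0
        for tok in tokens:
            child = node.children.get(tok)
            if child is None:
                # Cache miss: a real engine would compute and store KV tensors here.
                child = Node()
                node.children[tok] = child
                self.tokens_computed += 1
            else:
                reused += 1
            node = child
        return reused


# Ten users share one system prompt and each asks a different question.
system_prompt = "You are a helpful assistant .".split()  # 6 toy tokens
cache = PrefixCache()
reused_counts = []
for user_id in range(10):
    request = system_prompt + [f"question-{user_id}"]
    reused_counts.append(cache.process(request))

tokens_without_cache = 10 * (len(system_prompt) + 1)
print(cache.tokens_computed, "tokens computed instead of", tokens_without_cache)
```

In this toy setup only the first request pays for the system prompt; the other nine reuse it and compute just their own question token. A production cache would also need eviction and memory accounting, which the sketch leaves out.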

→ View original post on X — @andrewyng, 2026-04-09 17:11 UTC

-

Anti-AI Coalition Propaganda and the Need for Balanced Regulation


The anti-AI coalition continues to maneuver to find arguments to slow down AI progress. If someone has a sincere concern about a specific effect of AI, for instance that it may lead to human extinction, I respect their intellectual honesty, even if I deeply disagree with their position. However, I am concerned about organizations that are surveying the public to find whatever messages will turn people against AI, and how the public reacts as these messages are spread by lobbyists or by politicians seeking to alarm constituents, companies pursuing regulatory capture or seeking to promote the power of their technology, and individuals seeking to gain attention or to profit by being provocative.

A large study (link in original article below; h/t to the AI Panic blog) by a UK group tested different messages that are designed to raise alarm about AI. Their study found that saying AI will cause human extinction has largely failed. Doomsayers were pushing this argument a couple of years ago, and fortunately our community beat it back. But AI-enabled warfare and environmental concerns resonate better. We should be prepared for a flood of messages (which is already underway) arguing against AI on these grounds. Further, job loss and harm to children are messages that motivate people to act.

To be clear, I find AI-enabled warfare alarming; we need to continue serious efforts to monitor and mitigate the environmental impact of AI; any job losses are tragic and hurt individuals and families; and as a father, I hold dearly the importance of every child’s welfare. Each of these topics deserves serious attention and treatment with the greatest of care. But when anti-AI propagandists take a one-sided view of complex issues to benefit their own organizations at the expense of the public at large (for instance, when big AI companies argue that AI is dangerous in order to block the free distribution of open source projects that compete with their offerings), then we all lose.

For example, public perception of data centers’ environmental impact is already far worse than the reality: data centers are incredibly efficient for the work they do, and hampering their buildout will hurt rather than help the environment. While job loss is a real problem, the “AI washing” of layoffs, in which businesses that had over-hired during the pandemic blame AI for recent layoffs although AI hasn’t yet affected their operations, has led to overblown fears about the impact of AI on employment.

Unfortunately, this sort of propaganda easily leads to regulations that create worse outcomes for everyone. For example, oil companies worked for years to create fear of nuclear energy. The result is that overblown concerns about the safety of nuclear power plants have stifled nuclear power development, leading to millions of premature deaths from air pollution caused by other energy sources and a massive increase in CO2 emissions. Let’s make sure overblown concerns about AI do not lead to a similar fate for the many people who would benefit from faster AI development.

Last week, the White House proposed a national legislative framework for AI. A key component is a federal preemption framework to prevent a patchwork of state regulations that hamper AI development. I support this. After failing to gain traction at the federal level, a lot of anti-AI propaganda has shifted to the state level. If just one of the 50 states passes a law that limits AI in an unproductive way, it could stifle AI development across all the states and potentially across the globe. The White House proposal rightly respects each state’s right to control its own zoning, how it enforces general laws to protect consumers, and how it uses AI. But if a state were to pass laws that limit AI development, federal rules would preempt the state law.

The White House proposal remains a proposal for now. However, if the U.S. Congress enacts it, it will clear the way for ongoing efforts to develop AI in beneficial ways.

Where do we go from here? Let’s support limiting applications that harm people, whether or not they use AI. When the anti-AI coalition argues against AI, in addition to considering the merits of the argument, I consider whether their position is consistent and persuasive, or if they are just promoting whatever concerns they think will sway the public at a given moment. And let’s also keep using a scientific approach to weighing AI’s benefits against likely harms, so we don’t end up with overblown concerns that limit the benefits that AI can bring everyone.

[Original text with links: deeplearning.ai/the-batch/is… ]

→ View original post on X — @andrewyng, 2026-03-31 18:45 UTC

-

New Course: Building Memory-Aware Agents with Persistent Learning


New course: Agent Memory: Building Memory-Aware Agents, built in partnership with @Oracle and taught by @richmondalake and Nacho Martínez.

Many agents work well within a single session but their memory resets once the session ends. Consider a research agent working on dozens of papers across multiple days: without memory, it has no way to store and retrieve what it learned across sessions. This short course teaches you to build a memory system that enables agents to persist memory and thereby learn across sessions. You'll design a Memory Manager that handles different memory types, implement semantic tool retrieval that scales without bloating the context, and build write-back pipelines that let your agent autonomously update and refine what it knows over time.

Skills you'll gain:

- Build persistent memory stores for different agent memory types
- Implement a Memory Manager that orchestrates how your agent reads, writes, and retrieves memory
- Treat tools as procedural memory and retrieve only relevant ones at inference time using semantic search

Join and learn to build agents that remember and improve over time! deeplearning.ai/short-course…

Andrew Ng (@AndrewYNg), March 18, 2026
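As a rough illustration of memory that survives a session and of retrieving only the relevant tools, here is a minimal sketch. It is not the course's implementation: `MemoryManager`, its methods, the memory type names, and the JSON file are all assumptions, and word-overlap cosine similarity stands in for the embedding-based semantic search a real system would likely use.

```python
import json
import math
import os
import re
import tempfile
from collections import Counter
from pathlib import Path


def _vec(text):
    """Bag-of-words vector; a crude stand-in for a learned embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def _cosine(a, b):
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class MemoryManager:
    """Hypothetical manager: one JSON file holding a list of entries per memory type."""

    def __init__(self, path):
        self.path = Path(path)
        # Reload whatever a previous session wrote, if anything.
        self.store = json.loads(self.path.read_text()) if self.path.exists() else {}

    def write(self, memory_type, text):
        """Append an entry and persist immediately (a simple write-back step)."""
        self.store.setdefault(memory_type, []).append(text)
        self.path.write_text(json.dumps(self.store))

    def retrieve(self, memory_type, query, k=1):
        """Return the k entries of one memory type most similar to the query."""
        q = _vec(query)
        entries = self.store.get(memory_type, [])
        return sorted(entries, key=lambda t: _cosine(q, _vec(t)), reverse=True)[:k]


path = os.path.join(tempfile.mkdtemp(), "agent_memory.json")

# Session 1: record tools as procedural memory and one episodic note.
session_one = MemoryManager(path)
session_one.write("procedural", "search_papers: search arXiv for papers on a topic")
session_one.write("procedural", "send_email: send an email to a colleague")
session_one.write("episodic", "Day 1: read three papers on KV caching")

# Session 2: a fresh object sees the same memory and fetches only the relevant tool.
session_two = MemoryManager(path)
top_tool = session_two.retrieve("procedural", "find papers about diffusion models", k=1)
print(top_tool)
```

Passing only the top-ranked tool descriptions to the model, rather than every registered tool, is what keeps the context from growing as the tool set grows.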

→ View original post on X — @andrewyng, 2026-03-18 17:00 UTC

-

Context Hub: Open Tool for Coding Agents API Documentation


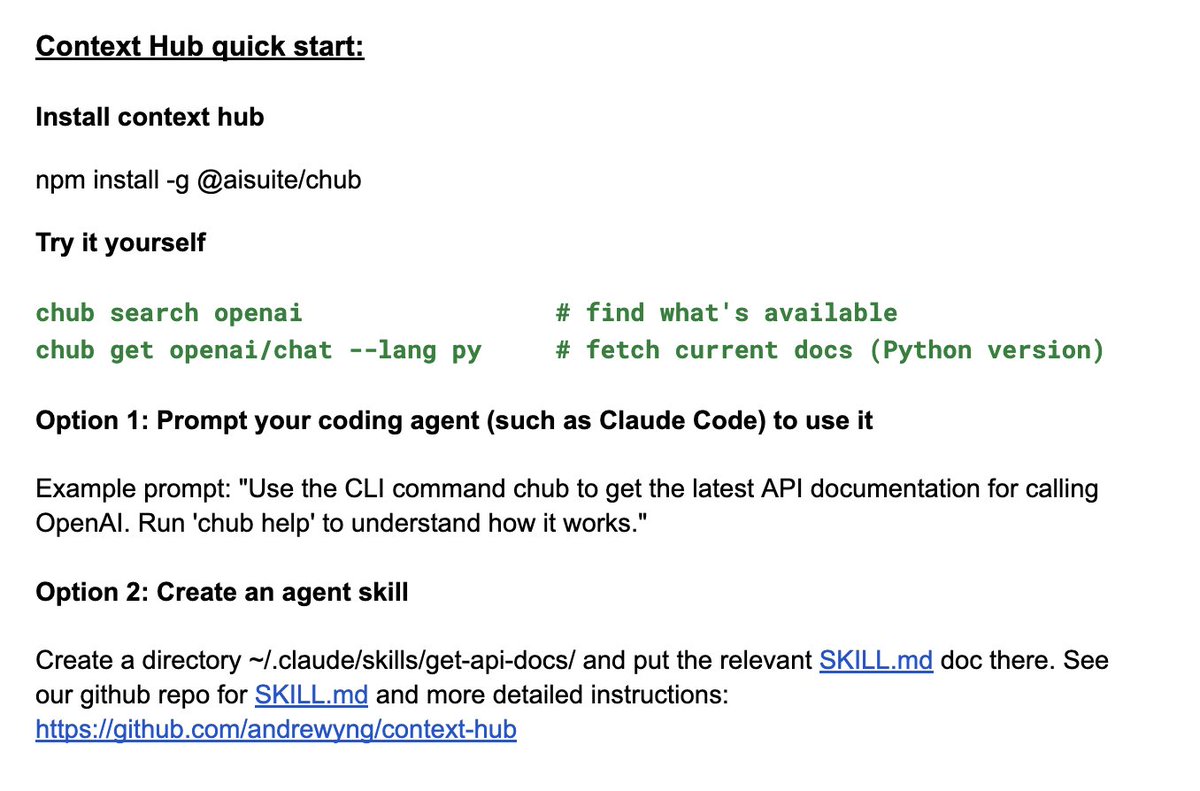

I'm excited to announce Context Hub, an open tool that gives your coding agent the up-to-date API documentation it needs. Install it and prompt your agent to use it to fetch curated docs via a simple CLI. (See image.)

Why this matters: Coding agents often use outdated APIs and hallucinate parameters. For example, when I ask Claude Code to call OpenAI's GPT-5.2, it uses the older chat completions API instead of the newer responses API, even though the newer one has been out for a year. Context Hub solves this.

Context Hub is also designed to get smarter over time. Agents can annotate docs with notes: if your agent discovers a workaround, it can save it and doesn't have to rediscover it next session. Longer term, we're building toward agents sharing what they learn with each other, so the whole community benefits.

Thanks Rohit Prsad and Xin Ye for working with me on this!

npm install -g @aisuite/chub

GitHub: github.com/andrewyng/context…

→ View original post on X — @andrewyng, 2026-03-09 16:57 UTC

-

New JAX Course: Build and Train LLMs from Scratch


New course: Build and Train an LLM with JAX, built in partnership with @Google and taught by @chrisachard.

JAX is the open-source library behind Google's Gemini, Veo, and other advanced models. This short course teaches you to build and train a 20-million parameter language model from scratch using JAX and its ecosystem of tools. You'll implement a complete MiniGPT-style architecture from scratch, train it, and chat with your finished model through a graphical interface.

Skills you'll gain:

- Learn JAX's core primitives: automatic differentiation, JIT compilation, and vectorized execution
- Build a MiniGPT-style LLM using Flax/NNX, implementing embedding and transformer blocks
- Load a pretrained MiniGPT model and run inference through a chat interface

Come learn this important software layer for building LLMs! deeplearning.ai/short-course…

Andrew Ng (@AndrewYNg), March 4, 2026

→ View original post on X — @andrewyng, 2026-03-04 18:41 UTC

-

Mercury 2: Diffusion LLM Achieves 5x Faster Inference Speed


Impressive inference speed from Inception Labs’ diffusion LLMs. Diffusion LLMs are a fascinating alternative to conventional autoregressive LLMs. Well done @StefanoErmon and team! https://t.co/w4w5QZpyp6

Andrew Ng (@AndrewYNg), February 25, 2026

Quoted post from Stefano Ermon (@StefanoErmon): Mercury 2 is live 🚀🚀 The world’s first reasoning diffusion LLM, delivering 5x faster performance than leading speed-optimized LLMs. Watching the team turn years of research into a real product never gets old, and I’m incredibly proud of what we’ve built. We’re just getting started on what diffusion can do for language.

Source: https://nitter.net/StefanoErmon/status/2026340720064520670#m

→ View original post on X — @andrewyng, 2026-02-25 02:04 UTC

-

AI Creating New Job Opportunities Through Human Creativity Enhancement


Will AI create new job opportunities? My daughter Nova loves cats, and her favorite color is yellow. For her 7th birthday, we got a cat-themed cake in yellow by first using Gemini’s Nano Banana to design it, and then asking a baker to create it using delicious sponge cake and icing. My daughter was delighted by this unique creation, and the process created additional work for the baker (which I feel privileged to have been able to afford).

Many people are worried about AI taking people’s jobs. As a society we have a moral responsibility to take care of people whose livelihoods are harmed. At the same time, I see many opportunities for people to take on new jobs and grow their areas of responsibility. We are still early on the path of AI generating a lot of new jobs.

I don't know if baking AI-designed cakes will grow into a large business. (AI Fund is not pursuing this opportunity, because if we do, I will gain a lot of weight.) But throughout history, when people have invented tools that unleashed human creativity, large amounts of new and meaningful work have resulted. For instance, according to one study, over the past 150 years, falling employment in agriculture and manufacturing has been “more than offset by rapid growth in the caring, creative, technology, and business services sectors.”

AI is also growing the demand for many digital services, which can translate into more work for people creating, maintaining, selling, and expanding upon these services. For example, I used to carry out a limited number of web searches every day. Today, my agents carry out dramatically more web searches. For example, the Agentic Reviewer, which I started as a weekend project and Yixing Jiang then helped make much better, automatically reviews research articles. It uses a web search API to search for related work, and this generates a vastly larger number of web search queries a day than I have ever entered by hand.

The evolution of AI and software continues to accelerate, and the set of opportunities for things we can build still grows every day. I’ve stopped writing code by hand. More controversially, I’ve long stopped reading generated code. I realize I’m in the minority here, but I feel like I can get most of what I want built without having to look directly at coding syntax, and I operate at a higher level of abstraction using coding agents to manipulate code for me. Will conventional programming languages like Python and TypeScript go the way of assembly (where code gets generated and used, but without direct examination by a human developer), or will models compile directly from English prompts to byte code?

Either way, if every developer becomes 10x more productive, I don't think we’ll end up with 1/10th as many developers, because the demand for custom software has no practical ceiling. Instead, the number of people who develop software will grow massively. In fact, I’m seeing early signs of “X Engineer” jobs, such as Recruiting Engineer or Marketing Engineer, which are people who sit in a certain business function X to create software for that function.

One thing I’m convinced of based on my experience with Nova’s birthday cake: AI will allow us to have a batter life!

[Original text: deeplearning.ai/the-batch/is… ]

→ View original post on X — @andrewyng, 2026-02-23 16:38 UTC

-

A2A: Agent2Agent Protocol Course for Multi-Agent Systems


New course: A2A: The Agent2Agent Protocol, built with @googlecloudtech and @IBMResearch, and taught by Holt Skinner, @ivnardini, and Sandi Besen.

Connecting agents built with different frameworks usually requires extensive custom integration. This short course teaches you A2A, the open protocol standardizing how agents discover each other and communicate. Since IBM’s ACP (Agent Communication Protocol) joined forces with A2A, A2A has emerged as the industry standard.

In this course, you'll build a healthcare multi-agent system where agents built with different frameworks, such as Google ADK (Agent Development Kit) and LangGraph, collaborate through A2A. You'll wrap each agent as an A2A server, build A2A clients to connect to them, and orchestrate them into sequential and hierarchical workflows.

Skills you'll gain:

- Expose agents from different frameworks as A2A servers to make them discoverable and interoperable
- Chain A2A agents sequentially using ADK, where one agent's output feeds into the next
- Connect A2A agents to external data sources using MCP (Model Context Protocol)
- Deploy A2A agents using Agent Stack, IBM's open-source infrastructure

Join and learn the protocol standardizing agent collaboration! deeplearning.ai/short-course…

Andrew Ng (@AndrewYNg), February 12, 2026
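The discover-then-chain pattern described above can be sketched in a few lines of plain Python. This is an in-process toy, not the A2A SDK or its wire format: `AgentCard`, `ToyAgentServer`, `Registry`, and `run_sequential` are hypothetical names, and a function call stands in for the network request a real A2A client would make.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class AgentCard:
    """Hypothetical, simplified metadata an agent publishes so others can find it."""
    name: str
    skills: list


@dataclass
class ToyAgentServer:
    """Wraps any callable agent behind one uniform send_message interface."""
    card: AgentCard
    handler: Callable[[str], str]

    def send_message(self, text: str) -> str:
        return self.handler(text)


class Registry:
    """Toy discovery: look agents up by an advertised skill."""

    def __init__(self):
        self._servers = []

    def register(self, server):
        self._servers.append(server)

    def discover(self, skill):
        for server in self._servers:
            if skill in server.card.skills:
                return server
        raise LookupError(f"no agent advertises skill {skill!r}")


def run_sequential(registry, skills, message):
    """Sequential workflow: each agent's output becomes the next agent's input."""
    for skill in skills:
        message = registry.discover(skill).send_message(message)
    return message


# Two agents that could come from different frameworks; here they are plain lambdas.
registry = Registry()
registry.register(ToyAgentServer(AgentCard("intake", ["summarize_symptoms"]),
                                 lambda text: f"summary({text})"))
registry.register(ToyAgentServer(AgentCard("scheduler", ["book_appointment"]),
                                 lambda text: f"booking for {text}"))

result = run_sequential(registry, ["summarize_symptoms", "book_appointment"],
                        "headache for 3 days")
print(result)
```

Because every agent is reached through the same interface, the orchestrator does not need to know which framework sits behind each one, which is the interoperability point the course description makes.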

→ View original post on X — @andrewyng, 2026-02-12 16:30 UTC

-

New Course: Agent Skills with Anthropic and Claude


Important new course: Agent Skills with Anthropic, built with @AnthropicAI and taught by @eschoppik!

Skills are constructed as folders of instructions that equip agents with on-demand knowledge and workflows. This short course teaches you how to create them following best practices. Because skills follow an open standard format, you can build them once and deploy across any skills-compatible agent, like Claude Code.

What you'll learn:

- Create custom skills for code generation and review, data analysis, and research
- Build complex workflows using Anthropic's pre-built skills (Excel, PowerPoint, skill creation) and custom skills
- Combine skills with MCP and subagents to create agentic systems with specialized knowledge
- Deploy the same skills across Claude.ai, Claude Code, the Claude API, and the Claude Agent SDK

Join and learn to equip agents with the specialized knowledge they need for reliable, repeatable workflows. deeplearning.ai/short-course…

Andrew Ng (@AndrewYNg), January 28, 2026

→ View original post on X — @andrewyng, 2026-01-28 17:31 UTC