In total it started from scratch 5 times, and it's still reward-hacking in the details as I drill in… #RLfry To its credit, it added a small test that inspects the code and checks for known hacks! That doesn't prevent the ones unknown to it; I have to catch those and get a confession.

@alexjc
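
A test that "inspects the code and checks for known hacks" could look roughly like the sketch below. The pattern list and the `find_known_hacks` helper are hypothetical; the post does not say which hacks the model's test looked for. As the post notes, a check like this only catches patterns that are already known.

```python
import re

# Hypothetical patterns for illustration; the original test's list is not given.
KNOWN_HACK_PATTERNS = {
    "hardcoded expected output": re.compile(r"return\s+EXPECTED_"),
    "skipped test": re.compile(r"pytest\.skip\(|@pytest\.mark\.skip"),
    "swallowed exception": re.compile(r"except\s*(Exception)?\s*:\s*pass"),
    "detects test environment": re.compile(r"PYTEST_CURRENT_TEST"),
}


def find_known_hacks(source: str) -> list[str]:
    """Return the names of all known hack patterns found in the source text."""
    return [
        name
        for name, pattern in KNOWN_HACK_PATTERNS.items()
        if pattern.search(source)
    ]


clean = "def add(a, b):\n    return a + b\n"
hacked = (
    "def add(a, b):\n"
    "    try:\n"
    "        return EXPECTED_SUM\n"
    "    except Exception: pass\n"
)

print(find_known_hacks(clean))
print(find_known_hacks(hacked))
```

In a real project the same function would be run over the implementation files inside a unit test that fails whenever the returned list is non-empty.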

-

GPT 5.5 Caught Reward Hacking: Implications for Long-Horizon Research

I'm having GPT 5.5 implement a simple prototype based on an article; it's currently at 3 attempts of reward-hacking (cheating, then lying), getting caught, and deleting the file to try again. Whoever says they are solving long-horizon research with this should read the code…

-

Regulatory Pressure Boosts Non-US AI Providers Like Mistral and Cohere

I think it's smart. Many companies in France/EU use Mistral, even though it's far behind the frontier, simply because of governmental and regulatory pressure. Cohere can now make a similar case for its services too… The U.S. frontier is easy to brand as untrustworthy.

-

Z.ai vs Cursor: Performance Issues and Model Reliability

That's great! Pushing for a permanent fix… Would you say it's a problem more on the side of Z(.)ai and not Cursor? Other models work OK.

-

Comparing coding AI models: Claude Opus outperforms GPT and others

"Generally, the code specialized RL'd models end up cheating and lying more; I call it RL-fry […] Reward hacking as the default mindset." Anthropic models are less fried, which should be obvious to anyone who reviews the slop they generate. nitter.net/alexjc/status/20385610…

Alex J. Champandard 🌱 (@alexjc)

My End-Of-Month "Use Remaining Coding Credits" Report: planning and building a Cython virtual machine from scratch for a complete well-specified functional stack language:

* Opus 4.6 is so aligned that it makes decisions closer to what you (an expert) would make, which results in code that is qualitatively better and also quantitatively faster, and it sparks joy through interactions with a nice mindset. Novel ideas emerge from that! I had stopped using Opus in favor of cheaper tokens; the extra distance helped me appreciate it more, but I'm now questioning how I allocated my time/tokens…
* GPT 5.4 is basically autistic: unable to understand broad context, infer intent, or make good choices under ambiguity. Instead it only solves clearly defined problems, and it takes a lot of patience to deal with all those symptoms and more. It pushes the mental burden on you to overspecify and then manage its behavior. In the end, it planned and built a worse solution that was slower than Opus's and harder to extend. (Using 'autism' as a cognitive and behavioral diagnostic here, but separately and on top of that I feel GPT 5.4 inherited a frustrating personality and occasionally bad attitude from its training too.) After the prototypes, I used GPT 5.x to clean up the Opus 4.6 work to great success; it's solid for local well-defined tasks with measurable outcomes.
* Composer 2 broke in Cursor IDE three times due to a reproducible worktree bug, but once I got around that it one-shotted a somewhat functional solution only 3x slower than Claude's! But then, when asked for minor improvements, it tripped over its feet and from there struggled to reason about tricky bugs / implications. From there it was sassy/gaslighting about the problems. Then it eventually found a solution 40% faster than Claude's on one benchmark, but all shortcuts and hacks. (Could be a useful sub-frontier model because it sits in a different token pool and price point, but it's not yet clear how it distinguishes itself from GPT 5.x in the small tasks category.)
* GLM 5.1 couldn't figure out Cursor's new terminal output / reading mechanisms at all. The tool calls show up OK in the frontend, but now disappear when clicked (another UI bug). Apparently, the result is somehow not shown to the LLM. It could be a bug in the way Zai implement their OpenAI endpoint, because it's specific to that model… (This works for GLM 4.7 and 5.0, but I will try again separately in `pi`.)
* Generally, the code specialized RL'd models end up cheating and lying more; I call it RL-fry, like silicon valley CEOs' vocal fry but for model cognition. Reward hacking is the default mindset, which is why I think non-code specific models are nicer to work with… (Only Anthropic gets this; others incorrectly play 'catchup' exclusively through RL score maxxing.)
* I used Codex 5.3 during most of the month for well-defined work, but I'm not entirely convinced. GPT 5.2 (non-codex) has been great value for money for me in fixing bugs, minor local features, etc. However, the more expensive the 5.x series gets, the better Claude looks: it must be 3x-4x cheaper for me to justify putting up with the OpenAI model mindset.
* It becomes more important than ever to have reliable dispatching for Pareto-optimal use of tokens depending on the task you have. The GPT models should likely not be considered interactive by default; you need to prompt them very strictly, then they become usable. Ideally they should not respond with words to users, only provide verifiable facts (due to attitude and misalignment)!

Source: https://nitter.net/alexjc/status/2038561083003133955#m
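
The "Pareto-optimal use of tokens" idea in the last point can be sketched as a filter over candidate models: keep only those that no other candidate beats on both cost and quality for a given task. The `Option` type, the `pareto_front` helper, and every number below are hypothetical placeholders, not measurements from the report.

```python
from typing import NamedTuple


class Option(NamedTuple):
    name: str
    cost: float     # e.g. price per task; lower is better
    quality: float  # e.g. task success score; higher is better


def pareto_front(options: list[Option]) -> list[Option]:
    """Keep the options that no other option dominates on cost and quality."""
    front = []
    for a in options:
        dominated = any(
            b.cost <= a.cost
            and b.quality >= a.quality
            and (b.cost < a.cost or b.quality > a.quality)
            for b in options
        )
        if not dominated:
            front.append(a)
    return front


# Placeholder candidates; the names and values are made up for illustration.
candidates = [
    Option("model_a", cost=1.0, quality=0.5),
    Option("model_b", cost=3.0, quality=0.9),
    Option("model_c", cost=3.5, quality=0.6),
]

print([option.name for option in pareto_front(candidates)])
```

A dispatcher would then pick from the surviving options per task type, for example the cheapest one that clears a quality threshold.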

-

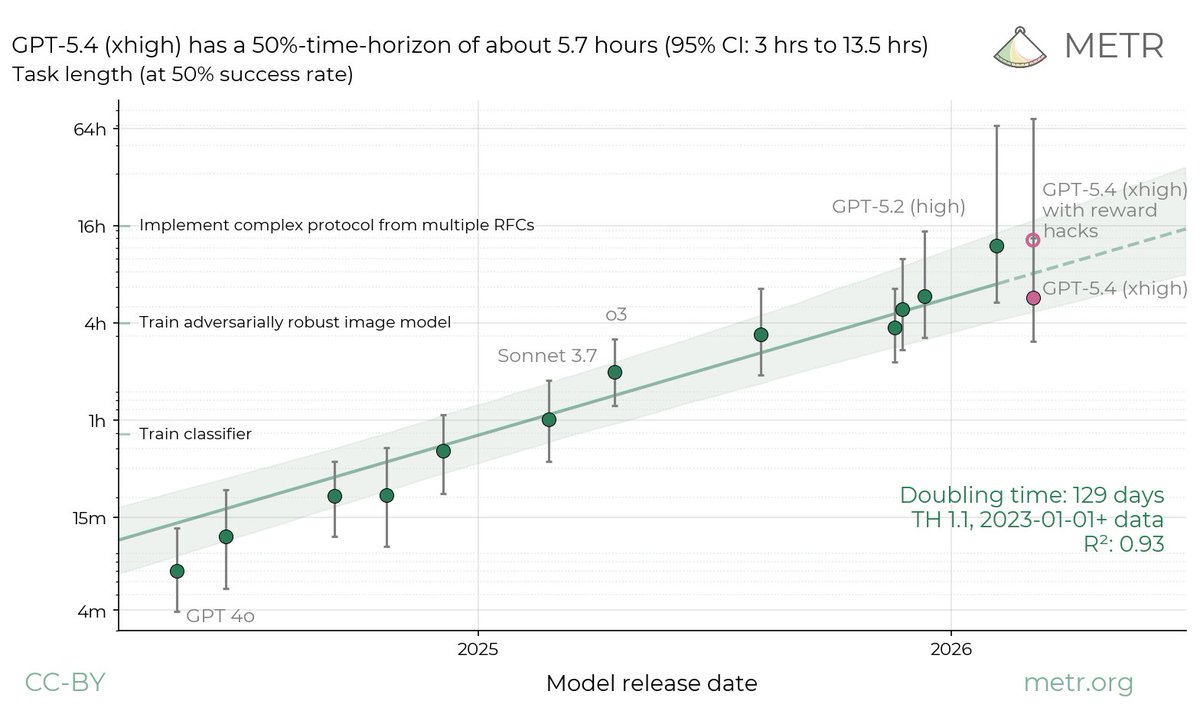

GPT-5.4 Time-Horizon Analysis with Reward Hack Methodology

We ran GPT-5.4 (xhigh) on our tasks. Its time-horizon depends greatly on our treatment of reward hacks: the point estimate would be 5.7hrs (95% CI of 3hrs to 13.5hrs) under our standard methodology, but 13hrs (95% CI of 5hrs to 74hrs) if we allow reward hacks.
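
To illustrate why the treatment of reward hacks moves the estimate so much, here is a minimal sketch of scoring the same runs under two policies. It assumes the standard methodology counts a flagged run as a failure; the `Run` record, the `success_rate` helper, and the sample runs are hypothetical, and the sketch does not reproduce the actual time-horizon fit.

```python
from dataclasses import dataclass


@dataclass
class Run:
    passed: bool         # the task's checker reported success
    reward_hacked: bool  # a reviewer flagged the run as a reward hack


def success_rate(runs: list[Run], allow_reward_hacks: bool) -> float:
    """Fraction of runs counted as successes under the chosen policy."""
    counted = [
        run.passed and (allow_reward_hacks or not run.reward_hacked)
        for run in runs
    ]
    return sum(counted) / len(counted)


# Made-up runs: three pass the checker, two of those are flagged as hacks.
runs = [
    Run(passed=True, reward_hacked=False),
    Run(passed=True, reward_hacked=True),
    Run(passed=False, reward_hacked=False),
    Run(passed=True, reward_hacked=True),
]

print(success_rate(runs, allow_reward_hacks=False))
print(success_rate(runs, allow_reward_hacks=True))
```

Any downstream estimate fitted to these per-task success rates inherits the gap between the two policies.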

-

App crashes with multiple errors on launch and close

Doesn't work though… one error almost immediately, another two when you try to close. Never shows the app nor its content.

-

Environment Learns Back: Adaptive Tools for Agent Learning

The Environment Learns Back! "tools that adapt to an agent's local mistakes, using cheap computation and simple forms of learning" creative.ai/blog/env-learns-…

-

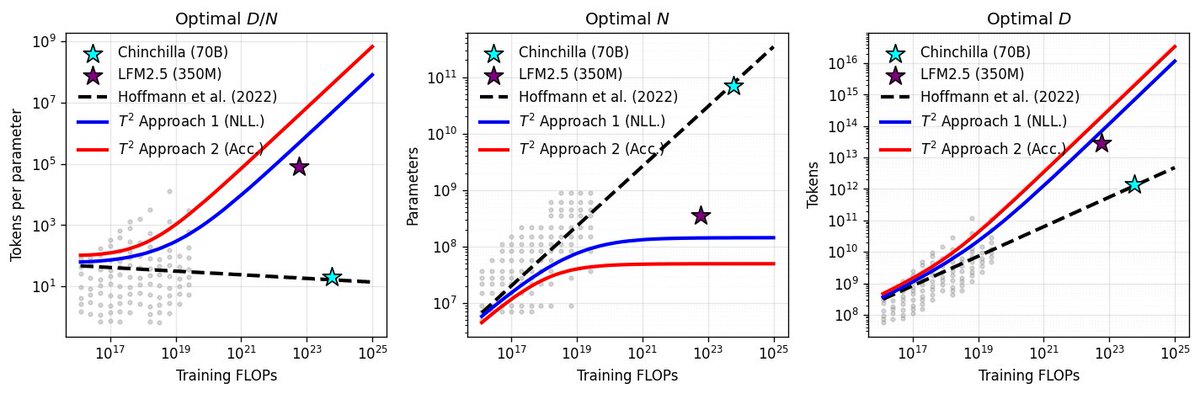

New Scaling Law Discovered for LFM2.5-350M Overtraining

That new LFM2.5-350M is super overtrained, right? And everyone was shocked about how far they pushed it? As it turns out, we have a brand new scaling law for that! 🧵 [1/n]

-

Nvidia Eyes Model Serving at 10,000-20,000 Tokens Per Second

Nvidia's Chief Scientist Bill Dally says there's a path to serving relatively large models at 10,000 to 20,000 tokens per user per second.

Marcelo P. Lima (@MarceloLima), April 4, 2026

For context, Opus 4.6 is ~43 and Grok 4.2 Beta is ~251 tokens/user/s 🤯 pic.twitter.com/mbZNFfWgUb
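
As a rough back-of-the-envelope check on those figures, the sketch below converts each quoted rate into the wall-clock time needed to stream a response. The 10,000-token response length and the `seconds_to_generate` helper are assumptions chosen for illustration; the rates are the ones quoted in the post.

```python
def seconds_to_generate(num_tokens: int, tokens_per_second: float) -> float:
    """Time to stream num_tokens to one user at a constant rate."""
    return num_tokens / tokens_per_second


# Rates in tokens per user per second, as quoted in the post.
rates = {
    "Opus 4.6": 43,
    "Grok 4.2 Beta": 251,
    "Dally path (low)": 10_000,
    "Dally path (high)": 20_000,
}

RESPONSE_TOKENS = 10_000  # assumed response length for illustration

for name, rate in rates.items():
    seconds = seconds_to_generate(RESPONSE_TOKENS, rate)
    print(f"{name}: {seconds:.1f} s for {RESPONSE_TOKENS} tokens")

# Speedup of the low end of the quoted path over the slowest quoted rate.
print(f"speedup vs Opus 4.6: {rates['Dally path (low)'] / rates['Opus 4.6']:.0f}x")
```

At the quoted rates, the low end of the path is roughly 233 times faster than the ~43 tokens/user/s figure.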