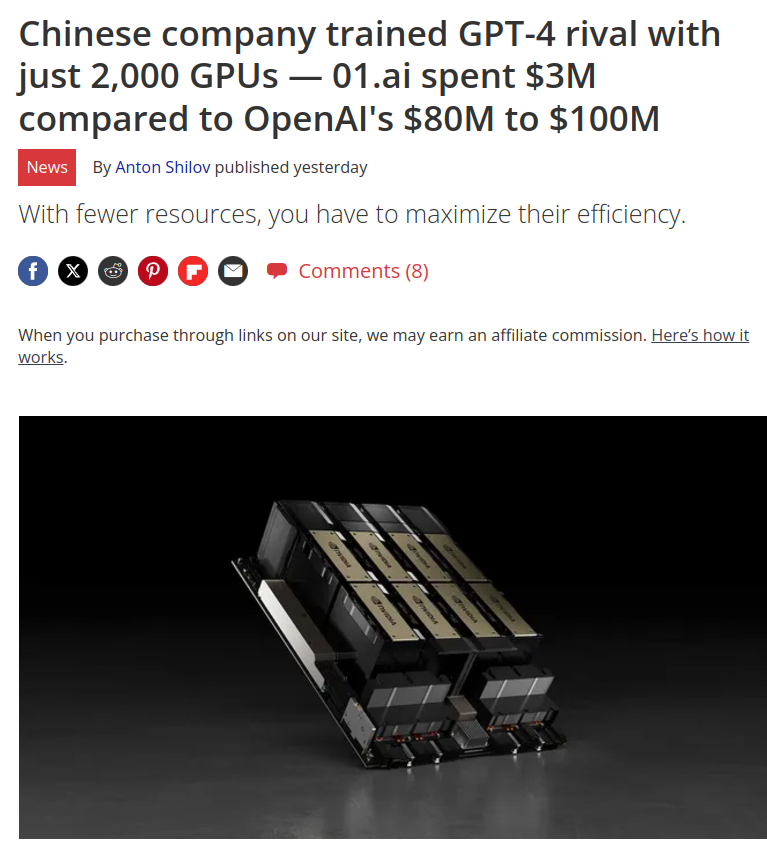

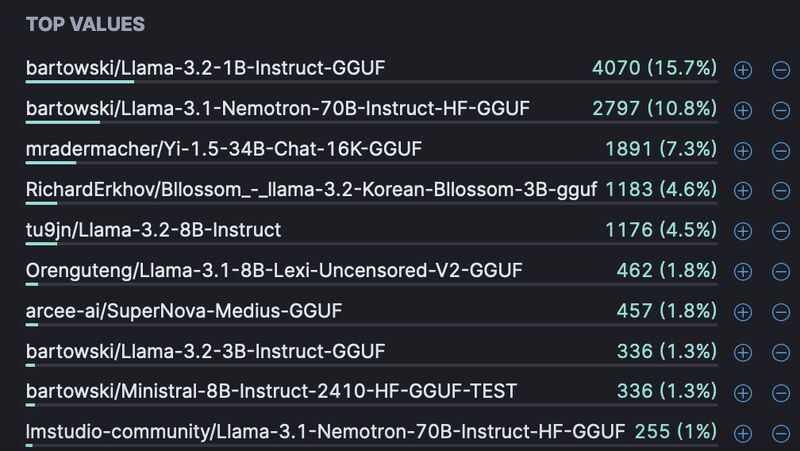

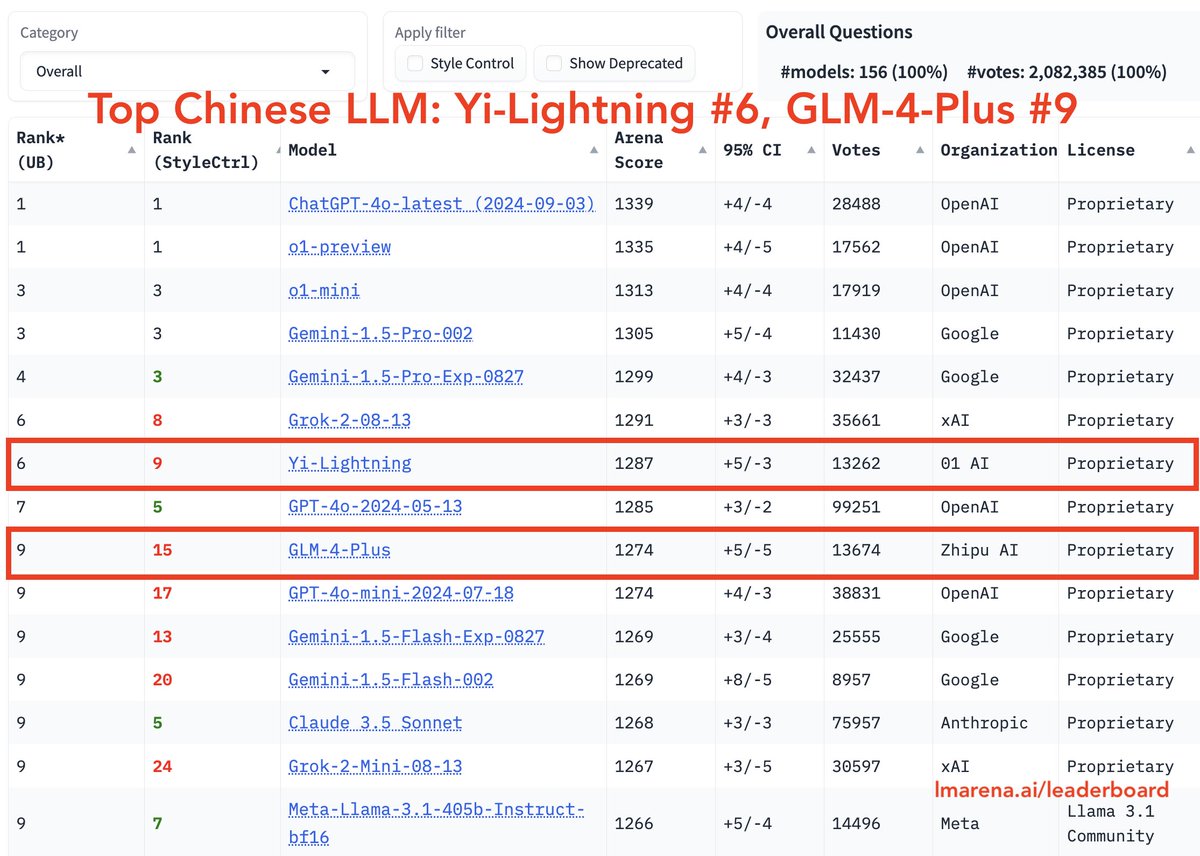

We are proud to present the latest model ⚡️Yi-Lightning ⚡️, now #6 in the world, higher than the original GPT-4o released 5 months ago. Also humbled that @01AI_Yi is ranked the #3 LLM player on @lmarena_ai Chatbot Arena, only after OpenAI and Google and tied with xAI, to serve other broader parts of the world under our vision "Make AGI Accessible and Beneficial to Everyone" 💪

Arena.ai (@arena): Big News from Chatbot Arena! @01AI_YI's latest model Yi-Lightning has been extensively tested in Arena, collecting over 13K community votes! Yi-Lightning has climbed to #6 in the Overall rankings (#9 in Style Control), matching top models like Grok-2. It delivers robust performance in technical areas like Math, Hard Prompts, and Coding. Huge congrats to @01AI_YI! Meanwhile, GLM-4-Plus by Zhipu AI (@ChatGLM) has also entered the top 10, marking a strong surge for Chinese LLMs. They're quickly becoming highly competitive. Stay tuned for more! More analysis below👇

https://nitter.net/arena/status/1846245604890116457#m

→ View original post on X — @01ai_yi, 2024-10-15 23:55 UTC