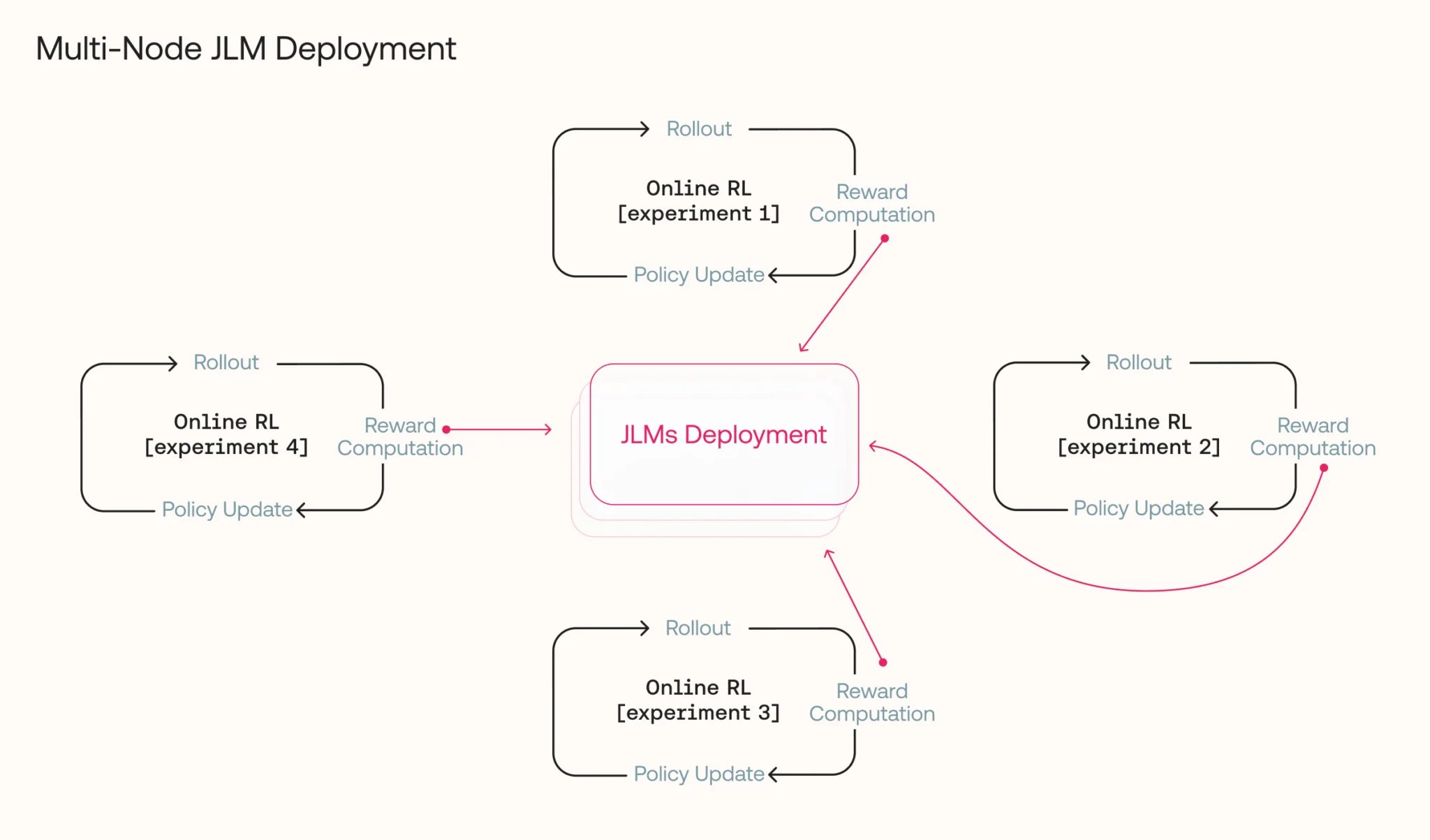

2/5 The setup: JLMs are used only during the reward phase of Online RL, sitting idle the rest of each step. To avoid GPU waste, we shared JLM deployments across multiple training jobs. This improved utilization but exposed JLMs to unpredictable traffic bursts from multiple

JLM Deployment Sharing Across Multiple Training Jobs for GPU Optimization
