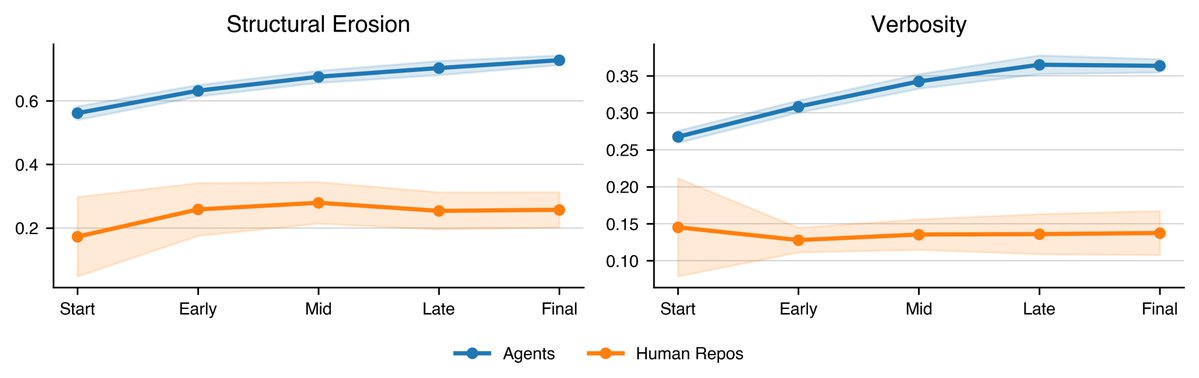

Congrats on the release 🚀 Proud to support research like this that moves the needle on evals and real-world agent performance.

> Gabe Orlanski (@GOrlanski): We found that agents generate progressively worse code with each iteration. Real developers do not. SlopCodeBench is the only eval that faithfully measures quality degradation on iterative, long-horizon coding tasks. arxiv.org/abs/2603.24755 scbench.ai 🧵
>
> https://nitter.net/GOrlanski/status/2037560777356238881#m

→ View original post on X — @snorkelai, 2026-03-27 18:57 UTC