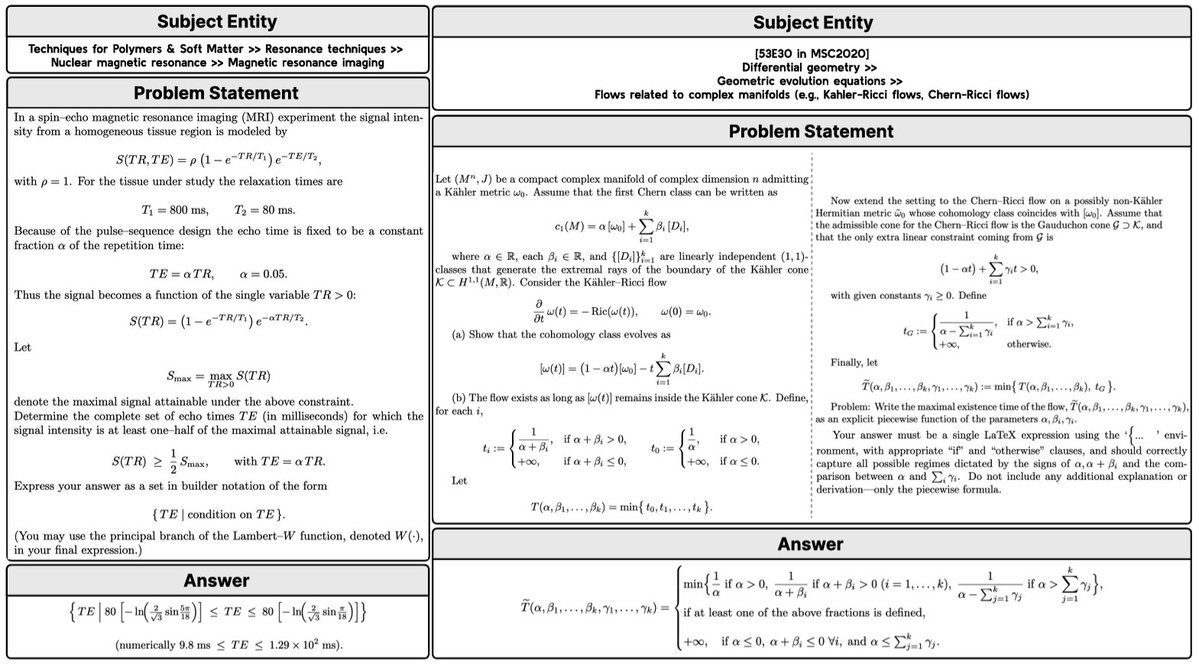

🧮 New work from @AIatMeta & @LTIatCMU! LM reasoning benchmarks mostly use simple answers, such as numbers (AIME) or multiple-choice options (GPQA). But when the answer is a complex mathematical object, performance drops sharply. We propose a set of solutions to address this: arxiv.org/abs/2603.18886

New LM Reasoning Benchmarks for Complex Mathematical Objects
