New preprint!

— Jason Wei (@_jasonwei) November 4, 2022

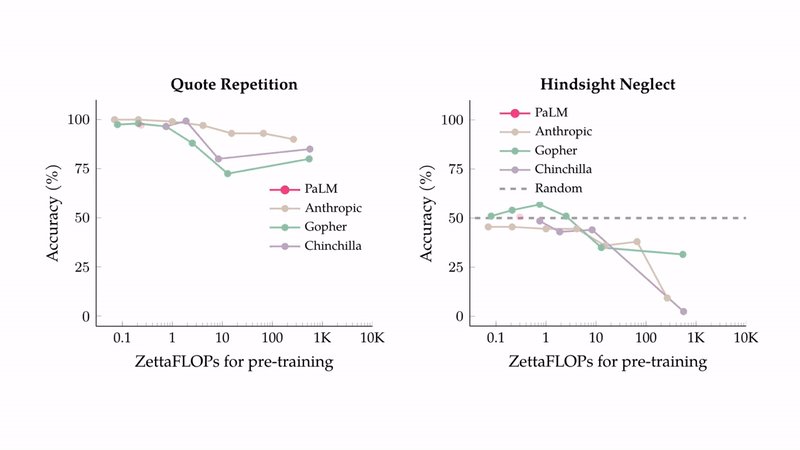

By evaluating 5x larger language models, inverse scaling can become “U-shaped scaling”, which means that performance increases sharply after decreasing.

https://t.co/bZQndKqlB6

These two tasks here are Third Prize winners from the Inverse Scaling Prize. pic.twitter.com/8d3pu8DDrk

New preprint! By evaluating 5x larger language models, inverse scaling can become “U-shaped scaling”, which means that performance increases sharply after decreasing. https://arxiv.org/abs/2211.02011 These two tasks here are Third Prize winners from the Inverse Scaling Prize.