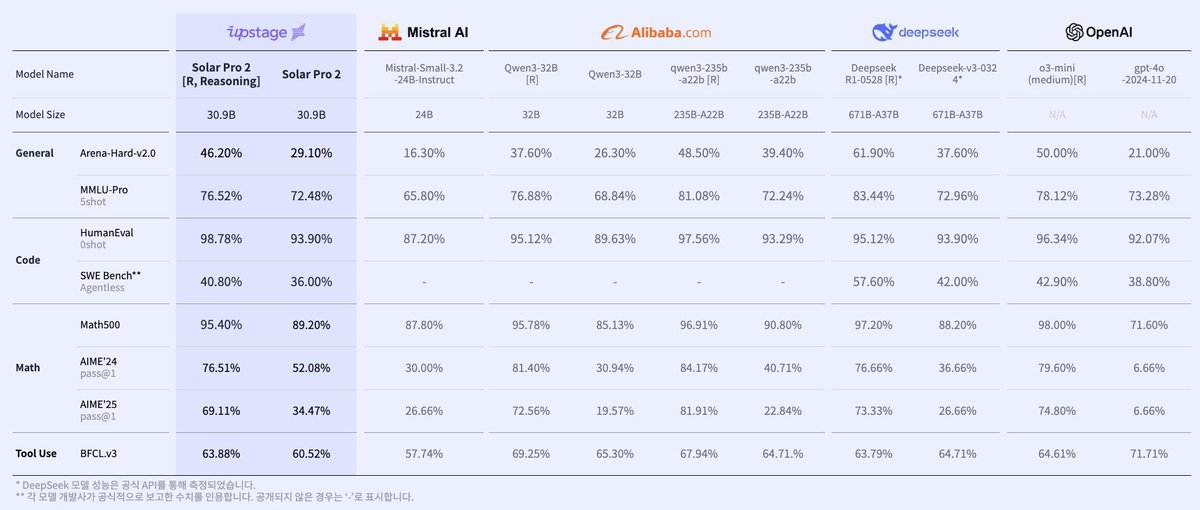

Finally, @upstageai is proud to announce the launch of its #Frontier model, #SolarPro2. We sincerely thank everyone who used solar-pro2-preview and gave us valuable feedback. Building on that input, we incorporated newly developed learning methodologies, better data curation for higher data quality, methods for synthesizing general reasoning data, and verifiable reward techniques to create this model.

Solar-Pro2 performs strongly on difficult reasoning benchmarks, including MMLU-Pro, Math500, AIME, and SWE-Bench. It also does well on Arena-Hard-Auto, a rigorous evaluation framework adapted from LMSYS’s LLMArena that focuses exclusively on extremely challenging problems. Arena-Hard-Auto is not a simple multiple-choice test; it compares a model's answers against those generated by top-tier models, much like a long-form essay or subjective exam. The benchmark is demanding enough that non-Frontier models often score only 10–20%, which is too low for most of them to publish results. Solar-Pro2 (reasoning) scores 46% on Arena-Hard-Auto and 71% on Ko-Arena-Hard-v0.1, surpassing even the highest-quality Korean answers.

Solar-Pro2 is available now at chat.upstage.ai/ and via API (model name: solar-pro2). To encourage broader adoption, we are offering a $50 credit with the code TRY-SOLAR-PRO2 (valid until August 31).
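For readers who want to try the API route, here is a minimal Python sketch of what a request might look like. It rests on several assumptions that are not stated in the announcement: that the API exposes an OpenAI-style chat completions endpoint at the URL shown, that it uses bearer-token authentication, and that the model identifier is `solar-pro2`. The `UPSTAGE_API_KEY` environment variable name and the `build_request` helper are hypothetical. Check the official API documentation before relying on any of these.

```python
import json
import os
import urllib.request

# Assumption: an OpenAI-style chat completions endpoint. Not confirmed by the announcement.
API_URL = "https://api.upstage.ai/v1/chat/completions"
# Assumption: model identifier as we read it from the announcement.
MODEL_NAME = "solar-pro2"


def build_request(prompt, api_key, model=MODEL_NAME):
    """Build (but do not send) a chat request. Hypothetical helper for illustration."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",  # assumed auth scheme
            "Content-Type": "application/json",
        },
        method="POST",
    )


if __name__ == "__main__":
    # Hypothetical environment variable name; the request is sent only if a key is set.
    key = os.environ.get("UPSTAGE_API_KEY")
    if key:
        with urllib.request.urlopen(build_request("Hello, Solar-Pro2!", key)) as resp:
            # Print the raw JSON response rather than assuming its structure.
            print(json.dumps(json.load(resp), indent=2))
```

Keeping request construction separate from sending makes it easy to inspect the payload before spending any credit.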

Upstage Launches Solar-Pro2 Frontier Model with Advanced Reasoning
