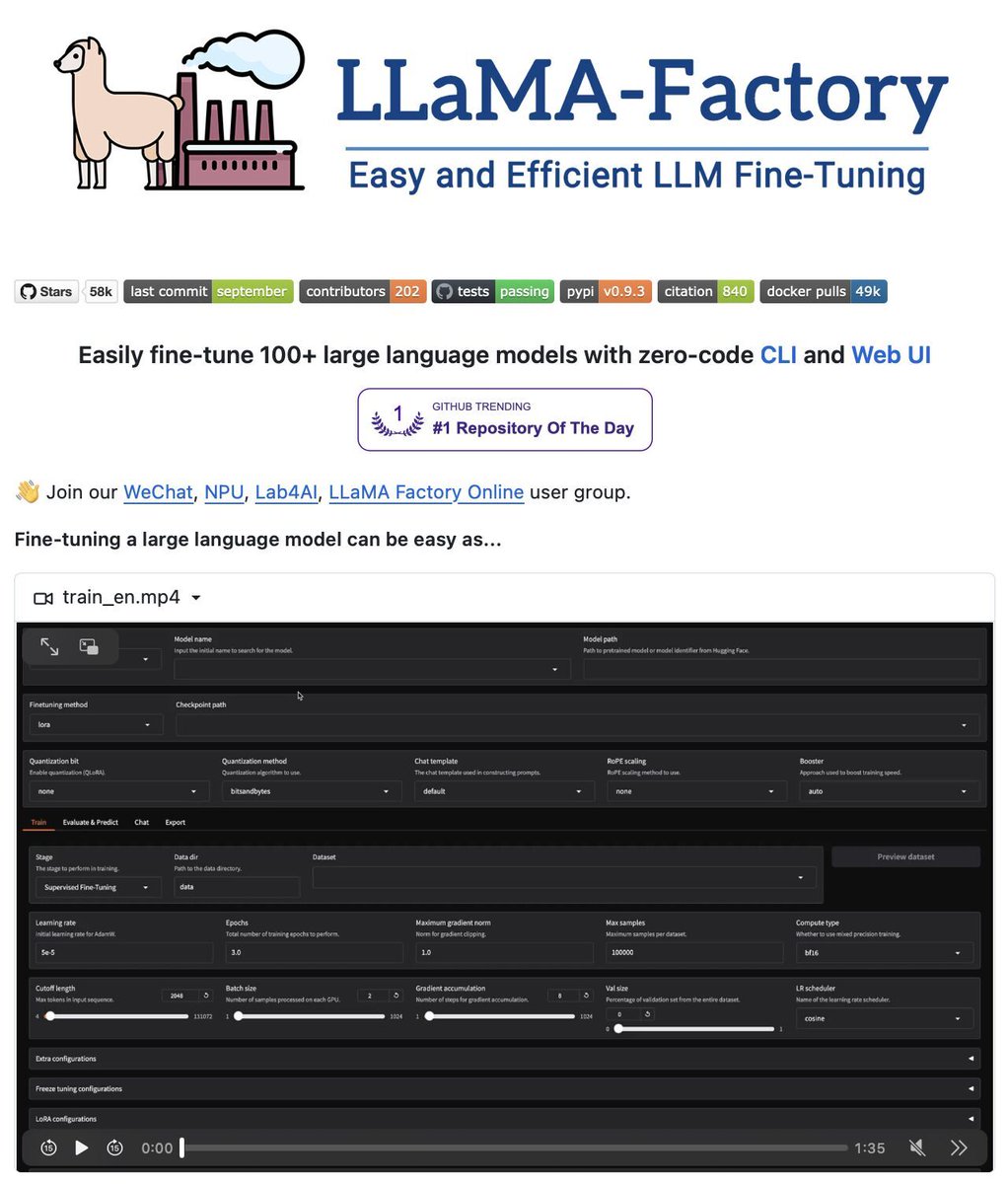

Fine-tune 100+ LLMs without writing a single line of code!

LLaMA-Factory lets you train and fine-tune open-source LLMs and VLMs (vision-language models) from a CLI or web UI instead of hand-written training scripts. Here's why it's a game changer for fine-tuning:

- Fine-tune 100+ LLMs/VLMs with built-in templates (LLaMA, Gemma, Qwen, Mistral, DeepSeek, and more).
- Zero-code CLI and Web UI for training, inference, merging, and evaluation.
- Supports full-tuning, LoRA, QLoRA, freeze-tuning, PPO/DPO, OFT, reward modeling, and multi-modal fine-tuning.
- Speeds up training and inference with FlashAttention-2, Liger Kernel, and a vLLM backend, and supports RoPE scaling for longer context windows.
- Integrates experiment tracking via LlamaBoard, TensorBoard, Weights & Biases, MLflow, and SwanLab.

It's 100% open source. Link to the GitHub repo in the comments!
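To see why LoRA-style methods make fine-tuning so much cheaper than full-tuning, it helps to count trainable parameters for a single weight matrix. This is a minimal sketch of the general LoRA arithmetic, not LLaMA-Factory's code: it assumes the standard LoRA formulation where a frozen weight W (d_out x d_in) gets a trainable low-rank update B @ A, with B of shape d_out x rank and A of shape rank x d_in. The 4096 x 4096 layer size and rank 8 are illustrative assumptions, not tied to any particular model or default setting.

```python
def full_params(d_out: int, d_in: int) -> int:
    """Trainable parameters when fully fine-tuning one d_out x d_in weight matrix."""
    return d_out * d_in


def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters for a LoRA update B @ A on a frozen weight W.

    B is d_out x rank and A is rank x d_in, so only rank * (d_out + d_in)
    values are trained while W itself stays fixed.
    """
    return rank * (d_out + d_in)


if __name__ == "__main__":
    # Illustrative sizes (assumption): one square 4096 x 4096 projection, LoRA rank 8.
    d_out, d_in, rank = 4096, 4096, 8
    full = full_params(d_out, d_in)
    lora = lora_params(d_out, d_in, rank)
    # Prints: full: 16,777,216  lora: 65,536  ratio: 0.39%
    print(f"full: {full:,}  lora: {lora:,}  ratio: {lora / full:.2%}")
```

With these assumed sizes the adapter trains about 1/256 of the layer's parameters. QLoRA goes further by also storing the frozen base weights in low precision, which cuts memory rather than the trainable-parameter count shown here.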

→ View original post on X — @sumanth_077, 2026-04-02 13:49 UTC
