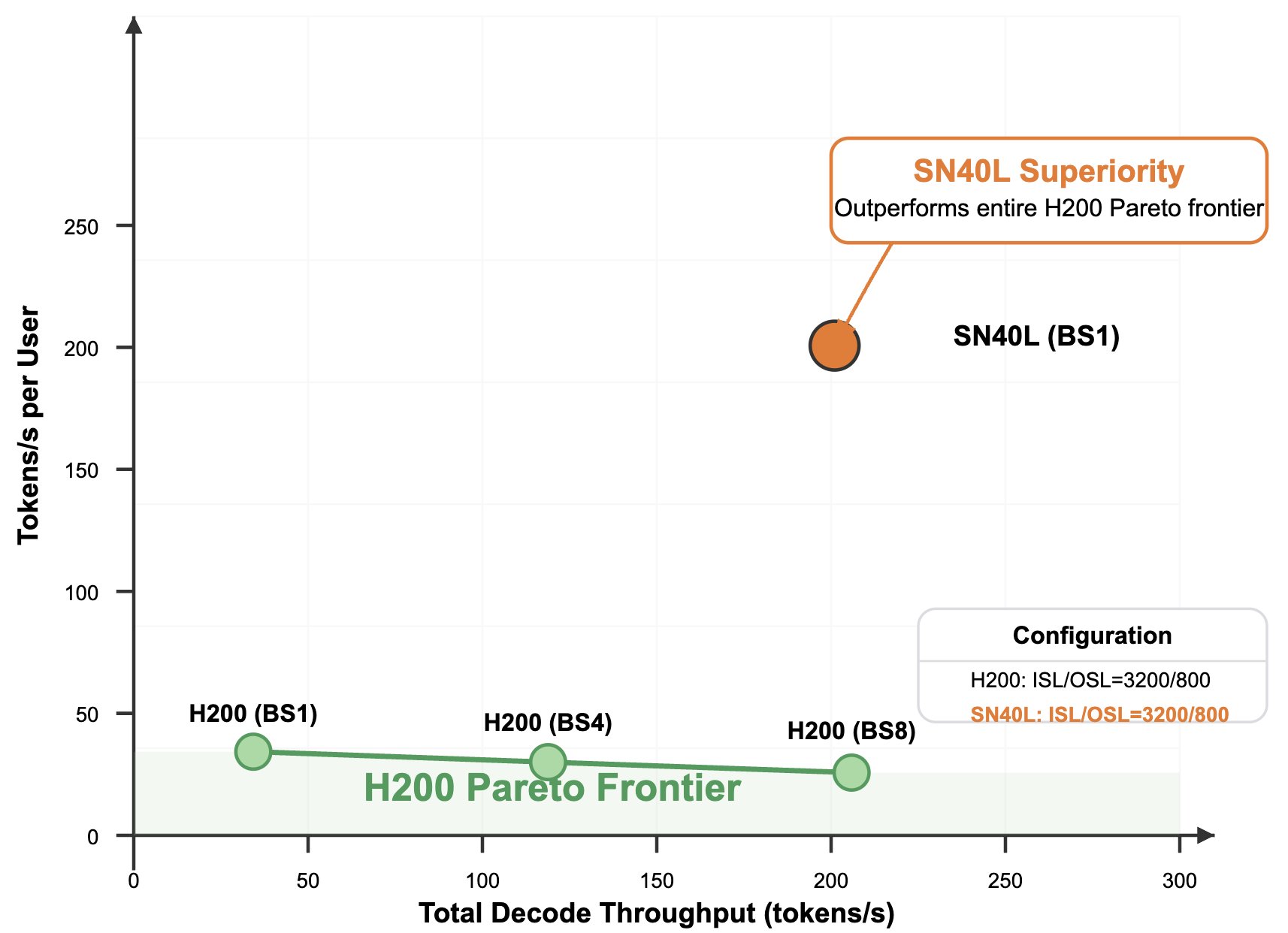

SN40L crushes H200 in real-world #AI inference! We measured @deepseek_ai's R1 with SGLang 0.4.2 on 1 node of H200, & guess what: SN40L completely smashes H200's Pareto frontier. It is 5.7x faster (201 tps vs 35 tps), which for a reasoning model means 30s vs 171s to generate 6k tokens.
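The quoted figures are consistent with each other, as a quick check shows. The sketch below assumes that "6k tokens" means exactly 6,000 output tokens and that "tps" is a sustained per-request output rate, ignoring time to first token. The function and variable names are illustrative and not taken from the benchmark.

```python
def generation_time_s(num_tokens: int, tokens_per_second: float) -> float:
    """Time in seconds to emit num_tokens at a steady output rate."""
    return num_tokens / tokens_per_second


# Throughput figures quoted in the post (tokens per second).
sn40l_tps = 201.0
h200_tps = 35.0

# Assumption: "6k tokens" is taken as exactly 6,000 output tokens.
num_tokens = 6000

sn40l_time = generation_time_s(num_tokens, sn40l_tps)
h200_time = generation_time_s(num_tokens, h200_tps)
speedup = sn40l_tps / h200_tps

print(f"{sn40l_time:.0f}s vs {h200_time:.0f}s, {speedup:.1f}x")
# prints "30s vs 171s, 5.7x"
```

Because both times are derived from the same token count, the ratio of the generation times equals the ratio of the throughputs, which is why the 5.7x figure applies to both.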

SN40L Outperforms H200 in DeepSeek-R1 AI Inference Testing
