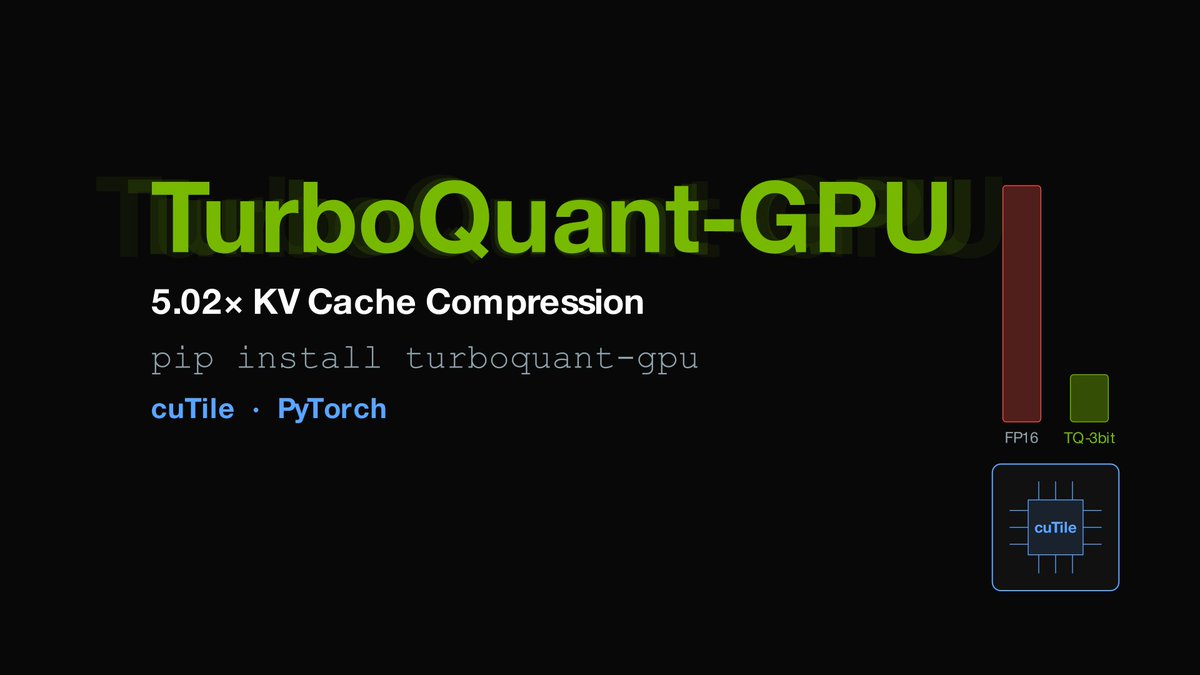

`pip install turboquant-gpu`

5.02x KV cache compression for ANY GPU (RTX, H100, A100, B200):

- works on top of @huggingface transformers
- dead-simple API: compress + generate in 3 lines
- 3-bit Lloyd-Max fused KV compression (0.98 cosine similarity)
- outperforms MXFP4 (3.76x) and NVFP4 (3.56x) on compression ratio

Ran Mistral-7B: 1,408 KB → 275 KB KV cache (5.02x).

Quickstart: github.com/DevTechJr/turboqu…

Written in cuTile (CUDA 12, 13) with PyTorch fallbacks.
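The MXFP4 and NVFP4 ratios quoted above can be reproduced with simple bit accounting against 16-bit storage. Each value costs its element bits plus one shared scale amortized over a block. The sketch below assumes the standard block layouts: MXFP4 uses 4-bit elements with an 8-bit E8M0 scale per 32 values, and NVFP4 uses 4-bit elements with an 8-bit E4M3 scale per 16 values (its per-tensor FP32 scale is ignored). It also estimates the per-token FP16 KV cache size from the Mistral-7B config (32 layers, 8 KV heads, head_dim 128). The 3-bit row uses a hypothetical layout of one FP16 norm per 128-value head vector, and all helper names are illustrative. Neither is turboquant-gpu's actual storage format.

```python
# Bit accounting for quantized KV storage vs. a 16-bit (FP16/BF16) baseline.
# The "3-bit (hypothetical)" layout is an ASSUMPTION for illustration only,
# not turboquant-gpu's real format.

def bits_per_element(elem_bits: float, scale_bits: float = 0.0, block_size: int = 1) -> float:
    """Average bits per stored value: element bits + one shared scale per block."""
    return elem_bits + scale_bits / block_size


def ratio_vs_fp16(bpe: float) -> float:
    """How many times smaller than 16-bit storage."""
    return 16.0 / bpe


def kv_cache_bytes(tokens: int, layers: int = 32, kv_heads: int = 8,
                   head_dim: int = 128, bpe: float = 16.0) -> float:
    """Bytes for K and V (factor 2) across all layers and KV heads.

    Defaults follow the Mistral-7B config (32 layers, 8 KV heads via GQA, head_dim 128).
    """
    return 2 * layers * kv_heads * head_dim * tokens * bpe / 8


FORMATS = {
    "FP16": bits_per_element(16),
    "MXFP4": bits_per_element(4, scale_bits=8, block_size=32),   # E8M0 scale per 32 values
    "NVFP4": bits_per_element(4, scale_bits=8, block_size=16),   # E4M3 scale per 16 values
    # Hypothetical: 3-bit codes + one FP16 norm per 128-dim head vector.
    "3-bit (hypothetical)": bits_per_element(3, scale_bits=16, block_size=128),
}

if __name__ == "__main__":
    for name, bpe in FORMATS.items():
        kib_per_token = kv_cache_bytes(1, bpe=bpe) / 1024
        print(f"{name:22s} {bpe:6.3f} bits/value  {ratio_vs_fp16(bpe):5.2f}x  "
              f"{kib_per_token:7.2f} KiB/token (Mistral-7B)")
```

The amortized scale and norm bits are why no format reaches its ideal 16/b ratio. Bare 3-bit codes with no metadata would give 16/3 ≈ 5.33x.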
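The post names a 3-bit Lloyd-Max quantizer but does not spell out the pipeline. The sketch below shows the textbook Lloyd-Max iteration, which places decision thresholds at midpoints between levels and moves each level to the centroid of its cell. It then applies the quantizer to KV-like vectors in one assumed way: store a per-vector FP16 norm, apply a random orthogonal rotation so coordinates look roughly Gaussian, and quantize each coordinate to 3 bits. The rotation step, the norm layout, and the function names (`lloyd_max_codebook`, `compress`, `decompress`) are hypothetical. They are not turboquant-gpu's API or kernel, and NumPy stands in for the CUDA/PyTorch code.

```python
import numpy as np


def lloyd_max_codebook(samples: np.ndarray, bits: int = 3, iters: int = 50) -> np.ndarray:
    """Fit a 2**bits-level Lloyd-Max (MSE-optimal scalar) quantizer to 1-D samples."""
    n_levels = 2 ** bits
    # Start from equal-probability quantiles, then alternate the two Lloyd-Max conditions.
    levels = np.quantile(samples, (np.arange(n_levels) + 0.5) / n_levels)
    for _ in range(iters):
        thresholds = (levels[:-1] + levels[1:]) / 2   # nearest-level decision boundaries
        cells = np.digitize(samples, thresholds)      # cell index 0 .. n_levels-1
        for k in range(n_levels):
            members = samples[cells == k]
            if members.size:
                levels[k] = members.mean()            # centroid condition
    return levels


def quantize(x: np.ndarray, levels: np.ndarray) -> np.ndarray:
    """Index of the nearest level (one byte per code here; a real kernel would bit-pack)."""
    thresholds = (levels[:-1] + levels[1:]) / 2
    return np.digitize(x, thresholds).astype(np.uint8)


def compress(x: np.ndarray, levels: np.ndarray, rot: np.ndarray):
    """HYPOTHETICAL layout: FP16 norm per vector + 3-bit codes of the rotated unit vector."""
    d = x.shape[-1]
    norms = np.linalg.norm(x, axis=-1, keepdims=True)
    unit = x / np.maximum(norms, 1e-12)
    # Rotation spreads energy so each coordinate of sqrt(d) * unit is approximately N(0, 1).
    y = (unit @ rot.T) * np.sqrt(d)
    return quantize(y, levels), norms.astype(np.float16)


def decompress(codes: np.ndarray, norms: np.ndarray, levels: np.ndarray, rot: np.ndarray):
    """Look up levels, undo the scaling and rotation, and restore each vector's norm."""
    d = rot.shape[0]
    y = levels[codes] / np.sqrt(d)
    return (y @ rot) * norms.astype(np.float32)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    d = 128                                                    # head_dim
    levels = lloyd_max_codebook(rng.standard_normal(200_000), bits=3)
    rot, _ = np.linalg.qr(rng.standard_normal((d, d)))         # random orthogonal matrix
    keys = rng.standard_normal((1024, d)).astype(np.float32)   # stand-in for K vectors
    codes, norms = compress(keys, levels, rot)
    recon = decompress(codes, norms, levels, rot)
    cos = np.sum(keys * recon, axis=-1) / (
        np.linalg.norm(keys, axis=-1) * np.linalg.norm(recon, axis=-1))
    print(f"mean cosine similarity: {cos.mean():.4f}")
```

On these Gaussian stand-in vectors, the mean cosine similarity should come out close to 0.98. An 8-level Lloyd-Max quantizer for a unit-variance Gaussian has an MSE of about 0.035 per coordinate. Because its reconstructions are uncorrelated with the error, the cosine similarity is roughly sqrt(1 - 0.035) ≈ 0.98. Real K/V activations are not Gaussian, so treat this only as an illustration of the mechanism.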

→ View original post on X — @huggingface, 2026-04-05 19:30 UTC