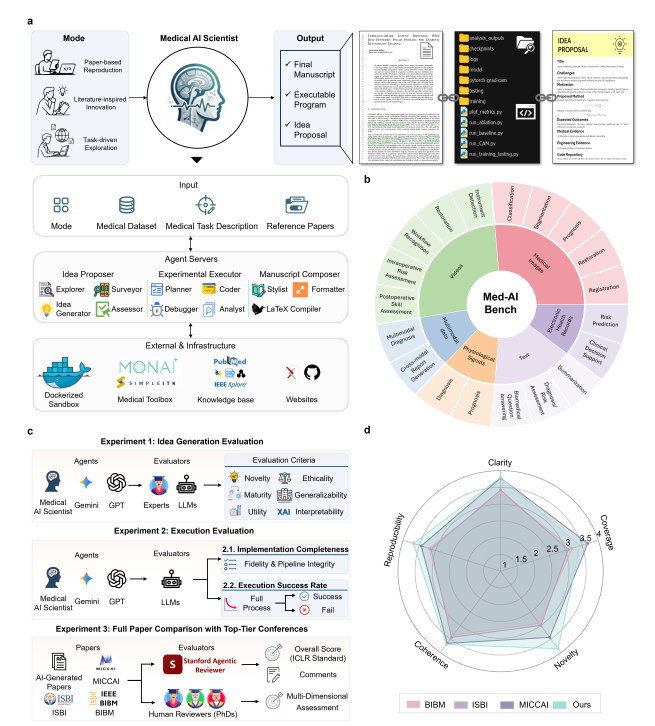

This is a solid, innovative step toward AI-augmented scientific workflows in medicine. It seems better at structured medical tasks than generic LLMs, with manuscripts that can fool experts in blind tests (and also fool conferences – although that seems relatively easy these days). It demonstrates progress in autonomous agents for research pipelines. However, it's not yet a revolutionary "AI scientist" replacing human researchers or immediately flooding journals with validated breakthroughs. Real impact will depend on extensive external validation and whether it’s a black box or provides mechanistic interpretation. Can we stop it with the dramatic pronouncements describing legitimate papers which are clearly AI-written? Ihtesham Ali (@ihtesham2005) 🚨BREAKING: Stanford and Microsoft just built an AI scientist that writes medical research papers that actually pass peer review. Not summaries. Not drafts. Full papers reviewed and accepted by real scientists. This is not a demo and this is not a prototype. A peer-reviewed conference just accepted a paper that no human wrote, and most people have absolutely no idea it happened. The system is called Medical AI Scientist and it works in three stages that run completely on their own. First, it reads medical literature, identifies real clinical gaps, and generates a research hypothesis grounded in actual disease evidence, not a hallucination and not a generic idea pulled from thin air. Then it writes the code, runs the experiment inside a secure environment, catches its own errors, and fixes them without any human stepping in. Then it writes the full paper, including the introduction, methods, results, figures, ethics statement, citations, and LaTeX formatting, from start to finish, autonomously. They tested it against GPT-5 and Gemini 2.5 Pro across 171 real medical research cases covering 19 clinical tasks, and the results were not close. Medical AI Scientist successfully completed experiments 91 to 93 percent of the time. GPT-5 managed 60 to 75 percent. Gemini 2.5 Pro collapsed somewhere between 40 and 53 percent. Then they ran the part that genuinely broke my brain. Ten independent medical experts with over five years of first-author publishing experience reviewed the AI-generated papers side by side with real human papers from MICCAI, ISBI, and BIBM, the top conferences in medical imaging, and nobody knew which was which. The AI papers scored competitively on novelty, clarity, coherence, and reproducibility across the board, and one paper was accepted at a peer-reviewed conference after a full review process. Here is what nobody is saying out loud. Medical research has a brutal bottleneck where ideas pile up, experiments take months, papers take even longer, and patients wait the entire time. That problem just got a serious solution, and the implications for healthcare are enormous. — https://nitter.net/ihtesham2005/status/2039009949276319824#m

→ View original post on X — @sallyeaves, 2026-04-01 06:55 UTC