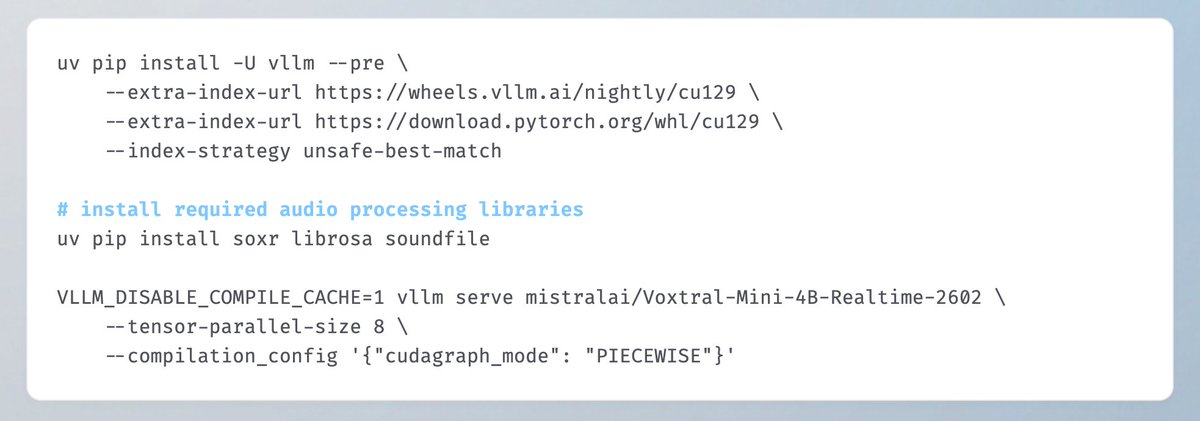

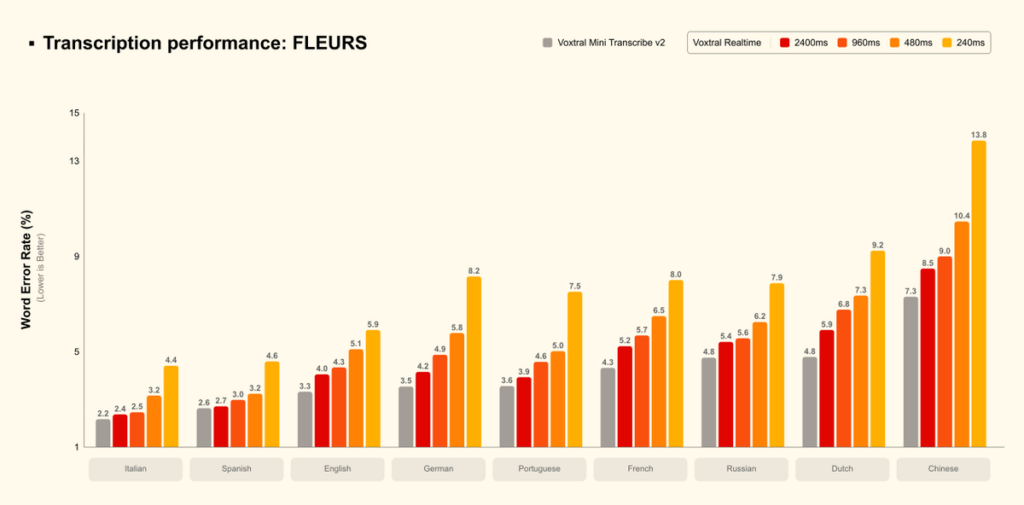

Congrats to @MistralAI on releasing Voxtral Mini 4B Realtime! 🎉 Day-0 support in vLLM!

A 4B-parameter streaming ASR model that achieves <500ms latency while matching offline-model accuracy, with support for 13 languages. vLLM's new Realtime API, `/v1/realtime`, provides audio streaming optimized for voice assistants, live subtitles, and meeting transcription!

Thanks to the vLLM community and @MistralAI for the close collaboration that made this production-grade support possible 🤝

📑 Model & Usage: huggingface.co/mistralai/Vox…

> Mistral AI (@MistralAI): Voxtral Realtime is built for voice agents and live applications. Its natively streaming architecture delivers latency configurable to sub-200ms. And at 480ms, it stays within 1-2% WER of our offline model. We release the model as open weights under Apache 2.0.
>
> https://nitter.net/MistralAI/status/2019068828257333466#m

→ View original post on X: @guillaumelample, 2026-02-04 17:51 UTC